Best Tools for Context Engineering in Agentic AI Systems

Blog Editor, Neo4j

Context engineering selects, shapes, and delivers the information and tools an agent needs the moment they’re needed. Agentic AI systems depend on this work because multi-step plans only hold together when facts, constraints, and states stay consistent from one action to the next.

Bad context shows up quickly in practice. An agent might pull outdated pricing data and state it as fact (hallucination), include irrelevant documents (token inefficiency), or lose track of prior tool outputs mid-workflow (context rot). In a real system, that could mean issuing the wrong refund, violating compliance rules, or triggering incorrect downstream actions.

This guide covers the key context engineering layers and tools you’ll need to avoid these failures, including orchestration, memory, and tool integration. It also covers techniques for keeping context lean and evaluating quality.

The Orchestration Layer for Agent Workflows

Orchestration is one of the most important layers for agentic systems because it determines what context agents see. An orchestration framework coordinates agents and keeps workflows on track by deciding what data to retrieve, which tools to call, what state to carry forward, and what output format to enforce. Two of the most popular open-source orchestration frameworks are LangChain and LlamaIndex.
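The four decisions an orchestration layer makes can be sketched in a few lines of plain Python. This is a minimal illustration, not how LangChain or LlamaIndex implement it: `AgentState`, `plan`, the two tool functions, and the output shape are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    """State carried forward between steps (hypothetical shape)."""
    request: str
    facts: list[str] = field(default_factory=list)
    tool_results: dict[str, str] = field(default_factory=dict)


# Hypothetical tools: plain functions standing in for real integrations.
def lookup_order(state: AgentState) -> str:
    return "order 1001: delivered, total $40"


def check_refund_policy(state: AgentState) -> str:
    return "refunds allowed within 30 days"


TOOLS: dict[str, Callable[[AgentState], str]] = {
    "lookup_order": lookup_order,
    "check_refund_policy": check_refund_policy,
}


def plan(request: str) -> list[str]:
    """Decide which tools to call. A real planner would usually ask an LLM."""
    steps = ["lookup_order"]
    if "refund" in request.lower():
        steps.append("check_refund_policy")
    return steps


def run(request: str) -> dict:
    state = AgentState(request=request)
    for tool_name in plan(request):
        result = TOOLS[tool_name](state)        # call the selected tool
        state.tool_results[tool_name] = result  # carry state forward
        state.facts.append(result)
    # Enforce one fixed output format for downstream consumers.
    return {
        "request": state.request,
        "steps": list(state.tool_results),
        "facts": state.facts,
    }


result = run("Can I get a refund for order 1001?")
```

Here `result["steps"]` lists the tools in the order they ran, and every later step can read what earlier steps stored in `state`.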

LangChain

LangChain is a comprehensive ecosystem for building agentic applications, supporting agent workflows and tool integrations. The LangChain framework provides the building blocks for agentic systems, including chains, agents, tools, and memory, so agents can plan, call tools, and complete multi-step tasks.

The ecosystem adds pieces that teams usually end up needing in production:

- LangChain Core provides chains, agents, and tools.

- LangGraph models stateful workflows with branching, error handling, and human-in-the-loop steps.

- LangSmith supports observability, debugging, and evaluation.

- LangServe helps deploy workflows as REST APIs.

- Built-in libraries cover tool integrations, conversation memory, and state management.

LangChain is usually the best fit when you need a comprehensive framework with tool integration, state management, and complex workflows.

LlamaIndex

LlamaIndex is a data-centric framework for LLM applications with strong ingestion, indexing, and retrieval capabilities. It’s built around creating searchable indexes from many data sources and tuning retrieval quality.

Some of LlamaIndex’s strengths include:

- Complex index structures for different retrieval strategies, including vector, tree-based, graph-based, and list-based indexes.

- Data ingestion connectors for PDFs, databases, APIs, and other data sources.

- Data transformation pipelines for chunking, cleaning, and optimizing content.

- Complex query handling through techniques like query routing and automatic decomposition into smaller sub-questions.

- Built-in integration for memory blocks, evaluation tools, and other production features.

LlamaIndex is the best fit for document-heavy applications where retrieval quality is a primary success metric and indexing strategy needs fine-grained control.
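Query routing and sub-question decomposition, mentioned in the list above, can be illustrated with a toy sketch. It does not use the LlamaIndex API. The keyword rule in `route`, the split on " and " in `decompose`, and the two stand-in indexes are assumptions made for the example; real routers typically ask an LLM to make both decisions.

```python
# Stand-in indexes: each returns a string describing what it would retrieve.
INDEXES = {
    "vector": lambda q: f"[vector index] passages similar to: {q}",
    "graph": lambda q: f"[graph index] entities related to: {q}",
}

# Hypothetical hint words suggesting a relationship-style question.
RELATIONAL_HINTS = ("related", "connected", "depends on", "who owns")


def route(sub_question: str) -> str:
    """Pick an index by keyword (assumed rule for illustration only)."""
    text = sub_question.lower()
    return "graph" if any(hint in text for hint in RELATIONAL_HINTS) else "vector"


def decompose(question: str) -> list[str]:
    """Naive decomposition: split a compound question on ' and '."""
    parts = [part.strip(" ?") for part in question.split(" and ")]
    return [part + "?" for part in parts if part]


def answer(question: str) -> list[tuple[str, str, str]]:
    """Return (sub-question, chosen index, retrieved text) for each part."""
    results = []
    for sub_question in decompose(question):
        index_name = route(sub_question)
        results.append((sub_question, index_name, INDEXES[index_name](sub_question)))
    return results


plan = answer(
    "What does the refund policy say and which teams are connected to billing?"
)
```

Each sub-question is answered by the index best suited to it, and the partial results can then be combined into one response.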

The Memory Layer for Grounded Context

Context windows are finite. Sending everything increases latency and cost, and it still doesn’t provide durable memory across sessions. There are many layers of memory in context engineering, including:

- Short-term memory: Conversation history, session state, and the immediate working context for the current task.

- Long-term memory: Persistent factual domain knowledge about entities, relationships, and preferences.

- Reasoning memory: Decision traces, including plans, tool calls, intermediate steps, and evidence used.

Short-Term Memory

Short-term memory stores the information an agent needs immediately: the active conversation, recent tool outputs, intermediate variables, and session state for the current task. It’s what keeps a multi-step workflow coherent from one action to the next.

Most frameworks include short-term memory as part of the agent’s runtime state. For example, LangChain manages short-term memory within the agent state, while LlamaIndex provides similar memory components to preserve the working context during a session.

Short-term memory is useful for maintaining conversational continuity, carrying forward tool results, and preventing the agent from losing track of user intent mid-workflow. Without it, the agent may repeatedly ask for the same information, drop important constraints, or fail to complete a task consistently.
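As a rough sketch of the idea, short-term memory can be modeled as a bounded window of recent turns plus a small store of session state that is never trimmed. The `ShortTermMemory` class and its method names are hypothetical and are not the memory API of LangChain or LlamaIndex.

```python
from collections import deque


class ShortTermMemory:
    """Bounded turn history plus pinned session state (illustrative only)."""

    def __init__(self, max_turns: int = 4):
        # deque with maxlen drops the oldest turn automatically when full.
        self.turns = deque(maxlen=max_turns)
        # Constraints and variables the agent must not lose mid-workflow.
        self.session_state: dict[str, str] = {}

    def add_turn(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def remember(self, key: str, value: str) -> None:
        self.session_state[key] = value

    def build_context(self) -> str:
        """Render pinned state first, then the most recent turns."""
        state_lines = [f"{k}: {v}" for k, v in self.session_state.items()]
        turn_lines = [f"{t['role']}: {t['content']}" for t in self.turns]
        return "\n".join(
            ["[session state]", *state_lines, "[recent turns]", *turn_lines]
        )


memory = ShortTermMemory(max_turns=2)
memory.remember("order_id", "1001")
memory.add_turn("user", "I want a refund")
memory.add_turn("tool", "order 1001 delivered")
memory.add_turn("assistant", "Checking policy")
context = memory.build_context()
```

With `max_turns=2`, the first user turn has already been dropped from the window, but the pinned `order_id` survives, which is the property that keeps constraints from disappearing as the conversation grows.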

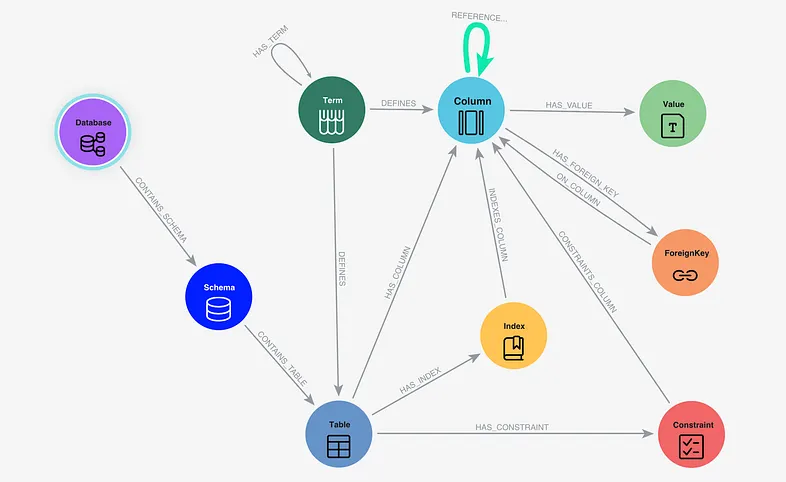

Knowledge Graphs for Long-Term Memory

Long-term memory stores information that persists beyond the current session: domain facts, entity knowledge, user preferences, prior decisions, and other durable context.

In many agentic systems, this memory works best when represented as a knowledge graph of entities and relationships rather than vectors alone. Vectors capture semantic similarity. Knowledge graphs capture explicit entities and the relationships between them. With both, agents can retrieve not just similar text but also hop through relationships to discover connected context. Grounding answers in these connected facts can substantially reduce the risk of hallucination.

Neo4j provides the world’s leading knowledge graphs for your long-term memory with built-in vector index capabilities and integration with LangChain. Agents retrieve information from knowledge graphs using GraphRAG by traversing the graph to pull richer, connected facts and their relationships. Alternatively, hybrid retrieval combines GraphRAG and vector RAG to retrieve both semantically similar and contextually relevant information.
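The hybrid pattern described above can be sketched without any database: a vector search finds seed entities, then a graph traversal expands them into connected facts. Everything here is a toy assumption, including the three-dimensional embeddings, the triples, and the function names. It illustrates the technique and is not Neo4j's GraphRAG implementation.

```python
import math

# Toy embeddings (hypothetical; real vectors come from an embedding model).
EMBEDDINGS = {
    "Acme Corp": [0.9, 0.1, 0.0],
    "Enterprise Plan": [0.1, 0.9, 0.0],
    "Discount Policy": [0.0, 0.2, 0.9],
}

# Toy knowledge graph as (source, relationship, target) triples.
TRIPLES = [
    ("Acme Corp", "SUBSCRIBES_TO", "Enterprise Plan"),
    ("Enterprise Plan", "GOVERNED_BY", "Discount Policy"),
    ("Acme Corp", "MANAGED_BY", "Dana"),
]


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def vector_search(query_vec: list[float], k: int = 1) -> list[str]:
    """Return the k entities whose embeddings are most similar to the query."""
    ranked = sorted(
        EMBEDDINGS,
        key=lambda name: cosine(query_vec, EMBEDDINGS[name]),
        reverse=True,
    )
    return ranked[:k]


def expand(entities: list[str], hops: int = 2) -> list[tuple[str, str, str]]:
    """Follow outgoing relationships up to `hops` steps from the seed entities."""
    facts: list[tuple[str, str, str]] = []
    frontier, seen = set(entities), set(entities)
    for _ in range(hops):
        next_frontier = set()
        for src, rel, dst in TRIPLES:
            if src in frontier:
                if (src, rel, dst) not in facts:
                    facts.append((src, rel, dst))
                if dst not in seen:
                    seen.add(dst)
                    next_frontier.add(dst)
        frontier = next_frontier
    return facts


seeds = vector_search([1.0, 0.0, 0.0], k=1)  # similarity finds the entry point
facts = expand(seeds, hops=2)                # traversal finds connected context
```

The vector step alone would return only the entity closest to the query. The two-hop expansion also surfaces the discount policy that governs that customer's plan, a fact that is connected to the query but not textually similar to it.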

There are also many other long-term memory tools built on Neo4j knowledge graphs:

- Zep builds long-term memory for AI assistants using knowledge graphs to connect users, sessions, and facts.

- Graphiti from Zep creates temporal knowledge graphs that track how information evolves over time.

- Cognee uses graphs to build structured memory layers that agents can reason over.

- Mem0 provides personalized AI memory with graph-based entity relationships.

- LangMem from LangChain stores agent experiences as connected memory graphs.

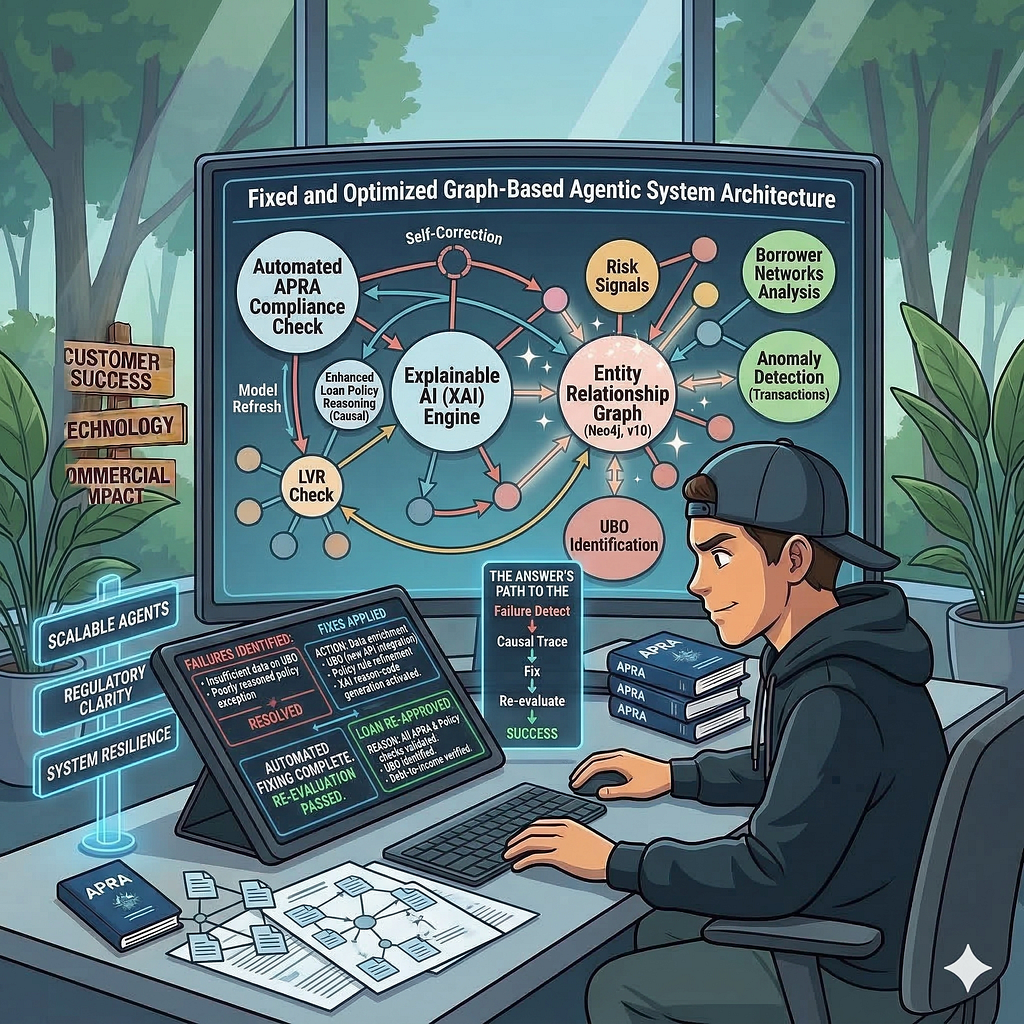

Context Graphs for Reasoning Memory

Reasoning memory captures an agent’s decision traces and how it reached a conclusion: the steps it planned, the tools it called, the evidence it used, and whether the result succeeded.

Decision traces are best stored in a Neo4j graph, specifically a context graph, which makes agentic reasoning and decisioning structured, queryable, and reusable. Instead of recording a single outcome, the context graph links the request, reasoning steps, tool calls, and data sources into a single trace. That makes it possible to follow a decision from start to finish and see exactly what influenced it. This enables agentic systems to make smarter, more contextual decisions rather than simply following rigid rules.

Imagine a renewal agent proposes a 20-percent discount, but the policy only allows up to a 10-percent discount unless an exception is approved. The agent retrieves recent churn-risk cases and surfaces a prior case where a similar exception was approved. It then routes the exception request to finance, which approves it.

The context graph preserves the decision-making process, making it explainable, auditable, and reusable for similar cases.
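To make the renewal example concrete, here is a minimal in-memory sketch of a decision trace stored as a graph. The `ContextGraph` class, the node ids, and the relationship names are all hypothetical. A production system would persist this in a graph database rather than in Python lists.

```python
from dataclasses import dataclass, field


@dataclass
class ContextGraph:
    """Tiny in-memory decision-trace graph (illustrative only)."""
    nodes: dict = field(default_factory=dict)  # node id -> properties
    edges: list = field(default_factory=list)  # (source id, relationship, target id)

    def add_node(self, node_id: str, **props) -> None:
        self.nodes[node_id] = props

    def link(self, src: str, rel: str, dst: str) -> None:
        self.edges.append((src, rel, dst))

    def trace(self, start: str) -> list:
        """Walk outgoing edges depth-first from `start`, in visit order."""
        visited, ordered, stack = set(), [], [start]
        while stack:
            current = stack.pop()
            if current in visited:
                continue
            visited.add(current)
            outgoing = [edge for edge in self.edges if edge[0] == current]
            ordered.extend(outgoing)
            # Reverse so the first outgoing edge is explored first.
            stack.extend(dst for _, _, dst in reversed(outgoing))
        return ordered


graph = ContextGraph()
graph.add_node("request", text="Renew with 20% discount")
graph.add_node("policy_check", result="exceeds 10% limit")
graph.add_node("prior_case", note="similar exception approved")
graph.add_node("finance_approval", outcome="approved")

graph.link("request", "TRIGGERED", "policy_check")
graph.link("policy_check", "USED_EVIDENCE", "prior_case")
graph.link("policy_check", "ESCALATED_TO", "finance_approval")

decision_trace = graph.trace("request")
```

Calling `graph.trace("request")` returns every step that influenced the outcome, from the original request through the policy check to the evidence and the approval, which is what makes the decision auditable and reusable.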

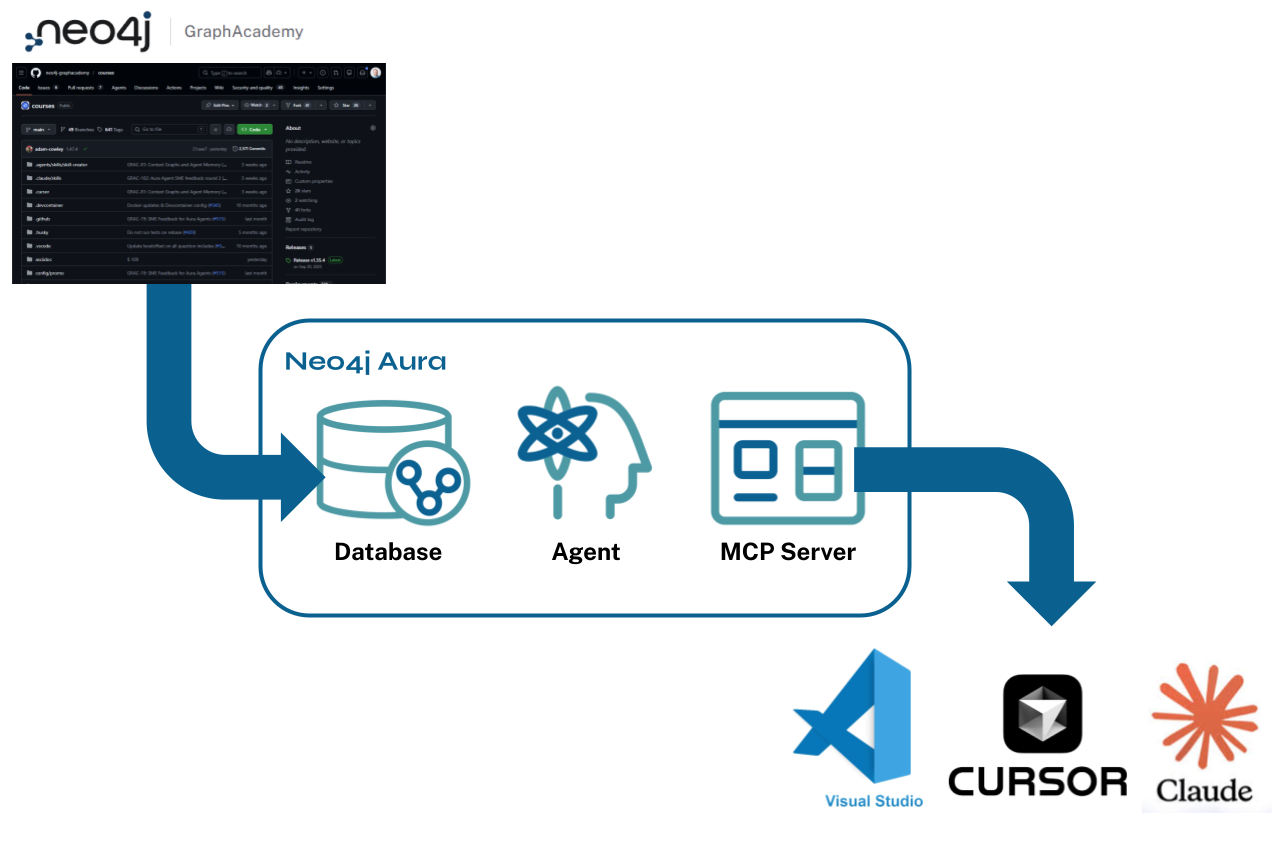

Connect to Tools With Model Context Protocol

Model Context Protocol (MCP) is an open standard that helps agents connect to external tools, providing the tool definitions and instructions needed to reduce ambiguity. Anthropic introduced MCP, which uses a client-server model in which MCP hosts connect to MCP servers through MCP clients. It allows you to pass tools and the necessary context to agents consistently, so they understand each tool’s instructions and capabilities and use the tools correctly.

MCP matters for context engineering because agent reliability improves when:

- An agent can “see” what tools exist and what each expects.

- Each tool has clear instructions for inputs and outputs, reducing ambiguous calls.

- Swapping a tool implementation doesn’t require rewriting the whole agent.

MCP aligns with how agentic AI systems work in practice: planning and executing multi-step tool usage while carrying forward context between steps. That makes it a practical foundation for agentic systems that depend on consistent, reliable access to tools.
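The sketch below shows the general shape of tool discovery and invocation in MCP, which exchanges JSON-RPC 2.0 messages using methods such as `tools/list` and `tools/call`. It is a simplified in-process stand-in with no transport, initialization handshake, or official SDK. The `get_customer` tool, its handler, and the `handle` function are hypothetical.

```python
import json

# One hypothetical tool: a definition the agent can "see" plus a local handler.
TOOLS = {
    "get_customer": {
        "description": "Look up a customer by id.",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "string"}},
            "required": ["customer_id"],
        },
        "handler": lambda args: f"Customer {args['customer_id']}: Acme Corp",
    }
}


def error(request_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": request_id,
            "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Answer a JSON-RPC request the way a minimal tool server might."""
    method, request_id = request["method"], request["id"]
    if method == "tools/list":
        # Advertise name, description, and input schema (never the handler).
        tools = [
            {"name": name, "description": tool["description"],
             "inputSchema": tool["inputSchema"]}
            for name, tool in TOOLS.items()
        ]
        result = {"tools": tools}
    elif method == "tools/call":
        params = request["params"]
        if params["name"] not in TOOLS:
            return error(request_id, -32602, f"Unknown tool: {params['name']}")
        text = TOOLS[params["name"]]["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return error(request_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


# Round-trip through JSON to mimic messages crossing a transport.
listing = json.loads(json.dumps(
    handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
))
call = handle({
    "jsonrpc": "2.0", "id": 2, "method": "tools/call",
    "params": {"name": "get_customer", "arguments": {"customer_id": "c-42"}},
})
```

Because the agent only depends on the advertised name and input schema, the handler behind `get_customer` could be swapped for a different implementation without changing the agent.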

Neo4j offers MCP integrations for knowledge graphs, including tools, resources, and prompts that allow agentic systems to read and write your graphs.

Make Agent Actions Safe and Verifiable

A model can generate text all day, but agentic systems must act safely when interacting with the external environment. Safeguards must be embedded into tools.

- Function calling: Structured tool calls reduce ambiguity. Predefined functions with explicit inputs and outputs help prevent hallucinated tool use and support predictable, deterministic workflows.

- API calls: Real-time data often decides whether a response is correct. API integration lets an agent pull real-time data and take action based on current information.

- Structured outputs: Schema-constrained formats like JSON keep downstream systems predictable. A structured response is easier to validate and parse than free-form text.

- Agent skills: Reusable skills keep workflows consistent. A skill bundle includes instructions, scripts, and resources so the agent can execute a task consistently under the same conditions.
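Function calling and structured outputs, from the list above, can be combined into a guard that runs before any action executes. The following is a minimal sketch using only the standard library. The `issue_refund` tool, its parameters, and the 100.0 limit are made-up values for illustration, and a real system would more likely rely on a schema validation library.

```python
import json

# Hypothetical tool definition with explicit inputs and a business rule.
REFUND_TOOL = {
    "name": "issue_refund",
    "parameters": {"order_id": str, "amount": (int, float)},
    "max_amount": 100.0,
}


def validate_call(call) -> list[str]:
    """Return a list of problems; an empty list means the call is safe to run."""
    if not isinstance(call, dict) or call.get("name") != REFUND_TOOL["name"]:
        return ["unknown or malformed tool call"]
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return ["arguments must be an object"]
    errors = []
    for param, expected in REFUND_TOOL["parameters"].items():
        if param not in args:
            errors.append(f"missing argument: {param}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(args[param], bool) or not isinstance(args[param], expected):
            errors.append(f"wrong type for argument: {param}")
    for extra in sorted(set(args) - set(REFUND_TOOL["parameters"])):
        errors.append(f"unexpected argument: {extra}")
    if not errors and args["amount"] > REFUND_TOOL["max_amount"]:
        errors.append("amount exceeds limit")
    return errors


def execute(raw_model_output: str) -> dict:
    """Parse the model's structured output and act only if it validates."""
    try:
        call = json.loads(raw_model_output)
    except json.JSONDecodeError:
        return {"status": "rejected", "errors": ["output is not valid JSON"]}
    errors = validate_call(call)
    if errors:
        return {"status": "rejected", "errors": errors}
    return {"status": "ok", "tool": call["name"], "arguments": call["arguments"]}


ok = execute('{"name": "issue_refund", "arguments": {"order_id": "1001", "amount": 40.0}}')
too_large = execute('{"name": "issue_refund", "arguments": {"order_id": "1001", "amount": 500}}')
free_text = execute("Sure, I will refund the customer.")
```

Free-form text and out-of-policy amounts are both rejected with a machine-readable reason, so the agent can retry or escalate instead of taking an unverified action.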

Context Optimization to Keep Context Lean

Token inefficiency shows up as slower agents, higher cost, and lower relevance. As your context grows toward the limit of the model’s context window, performance and accuracy tend to deteriorate, a failure mode known as context rot, and anything beyond the window can’t be processed at all. Even the largest context windows in current models are on the order of a million tokens. To stay within this budget, context optimization techniques such as compression and chunking cut token waste without sacrificing the details the model needs.

Compression

Compression aims to preserve meaning while shrinking token usage.

Summarization typically uses transformer models to condense long passages into shorter, meaning-preserving summaries. Tools for this include the load_summarize_chain function in LangChain and the PEGASUS model from Google.

Use the following guidelines to reduce token waste without losing key details:

- Summarize or compress before embedding when appropriate.

- Use multi-stage summarization for long corpora.

- Compress long-lived reference content like policies, manuals, and runbooks.

- Avoid compressing short-lived transactional context like the latest ticket update or recent tool output unless the agent repeatedly reuses it.
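Multi-stage summarization, from the guidelines above, follows a map-then-reduce shape that can be sketched independently of any model. In this example `summarize` is a deliberate placeholder that simply truncates text. In a real pipeline it would call an LLM or a summarization model, and the batch size and word limit are arbitrary assumptions.

```python
def summarize(text: str, max_words: int = 12) -> str:
    """Placeholder summarizer: keeps the first `max_words` words.

    Stands in for a real model call so the control flow can run as-is.
    """
    return " ".join(text.split()[:max_words])


def split_into_batches(items: list[str], batch_size: int) -> list[list[str]]:
    return [items[i:i + batch_size] for i in range(0, len(items), batch_size)]


def multi_stage_summary(
    paragraphs: list[str], batch_size: int = 2, max_words: int = 12
) -> str:
    if batch_size < 2:
        raise ValueError("batch_size must be at least 2 so each stage shrinks")
    if not paragraphs:
        return ""
    # Stage 1 (map): summarize each paragraph on its own.
    summaries = [summarize(p, max_words) for p in paragraphs]
    # Later stages (reduce): merge summaries in batches until one remains.
    while len(summaries) > 1:
        summaries = [
            summarize(" ".join(batch), max_words)
            for batch in split_into_batches(summaries, batch_size)
        ]
    return summaries[0]


policy = [
    "Refunds are allowed within thirty days of delivery for all standard orders.",
    "Enterprise customers may request exceptions through their account manager.",
    "Discounts above ten percent require written approval from finance.",
    "All exceptions must be logged with the reason and the approver.",
]
final_summary = multi_stage_summary(policy, batch_size=2, max_words=12)
```

The final summary never exceeds `max_words` regardless of how many paragraphs go in, which is the property that keeps long-lived reference content within a fixed token budget.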

Chunking

Chunking splits your context into smaller, manageable pieces. It’s useful when you intend to embed each piece separately, so that every chunk fits the embedding model’s input limit and the retrieved chunks fit into the context window. Well-sized chunks can improve recall and reduce hallucination in RAG pipelines. Most agent frameworks, such as LangChain and LlamaIndex, include text-splitting integrations for chunking.

Use these rules when splitting content:

- Chunk by fixed size (word count, character count, or token count) when sources are unstructured.

- Chunk by structure (headings, sections, tables) when sources have a clear layout.

- Chunk by semantics (topic shifts between paragraphs) when meaning matters more than layout.
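The first two rules can be sketched with the standard library alone. The functions below are illustrative and are not the text splitters shipped with LangChain or LlamaIndex. The word-based sizes, the overlap, and the assumption that headings start with `#` are choices made for the example.

```python
def chunk_fixed(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into word windows of `size`, sharing `overlap` words."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def chunk_by_headings(text: str) -> list[dict]:
    """Split on lines starting with '#', keeping each heading as the title.

    Sections with no body text are skipped.
    """
    sections, title, body = [], "preamble", []
    for line in text.splitlines():
        if line.startswith("#"):
            if body:
                sections.append({"title": title, "text": "\n".join(body)})
            title, body = line.lstrip("#").strip(), []
        elif line.strip():
            body.append(line.strip())
    if body:
        sections.append({"title": title, "text": "\n".join(body)})
    return sections


unstructured = " ".join(f"w{i}" for i in range(12))
fixed_chunks = chunk_fixed(unstructured, size=5, overlap=2)

structured = (
    "# Refunds\nRefunds within 30 days.\n\n"
    "# Shipping\nShips in 2 days.\nFree over $50."
)
sections = chunk_by_headings(structured)
```

The overlap keeps a sentence that straddles a boundary retrievable from either neighboring chunk, while heading-based chunks carry their section title along as metadata for retrieval.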

Context Quality Evaluation

You can’t improve what you can’t measure. Reliable agent behavior requires constrained execution, validated inputs and outputs, and auditable tool usage. Agent behavior also changes as prompts, tools, and data evolve: retrieval can drift, tool calls can fail, and small edits can increase hallucinations or break structured outputs.

Evaluation catches these issues early. The goal is to measure relevance, faithfulness, and safety by checking whether the system retrieved the correct context, followed the appropriate steps, and grounded its output in evidence.

Most frameworks have built-in tools to evaluate the performance of your agentic systems. In the LangChain ecosystem, for example, LangSmith Evaluations runs evaluations on datasets to compare agent versions, benchmark performance, and catch regressions before users do. LlamaIndex has a similar evaluation framework.

Evaluation tools often use LLM-as-judge scoring, which helps quantify performance, especially when human feedback is built into the loop. Evaluation metrics can include accuracy, relevance, faithfulness, and other domain-specific measures.
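A regression-style evaluation can be sketched as a small harness that runs two agent versions over the same dataset and compares scores. This example swaps LLM-as-judge scoring for a crude word-overlap grounding check so it runs without a model. The dataset, the two stub agents, and the metric names are hypothetical, and it does not use LangSmith or LlamaIndex evaluators.

```python
DOCS = {
    "policy-1": "Refunds are allowed within 30 days.",
    "shipping-1": "Orders ship in 2 days.",
}

DATASET = [
    {"question": "What is the refund window?", "expected_doc": "policy-1"},
    {"question": "How fast is shipping?", "expected_doc": "shipping-1"},
]


def agent_v1(question: str) -> dict:
    """Stub agent that retrieves the right document and quotes it."""
    doc_id = "policy-1" if "refund" in question.lower() else "shipping-1"
    return {"retrieved": [doc_id], "answer": DOCS[doc_id]}


def agent_v2(question: str) -> dict:
    """Stub agent with a regression: retrieval is stuck on one document."""
    if "refund" in question.lower():
        return {"retrieved": ["policy-1"], "answer": DOCS["policy-1"]}
    return {"retrieved": ["policy-1"], "answer": "Orders ship in 9 weeks."}


def words(text: str) -> set[str]:
    return {w.strip(".,?").lower() for w in text.split()}


def grounding_score(answer: str, context: str) -> float:
    """Fraction of answer words that also appear in the retrieved context."""
    answer_words = words(answer)
    if not answer_words:
        return 0.0
    return len(answer_words & words(context)) / len(answer_words)


def evaluate(agent) -> dict:
    hits, grounding = 0, 0.0
    for case in DATASET:
        output = agent(case["question"])
        hits += case["expected_doc"] in output["retrieved"]
        context = " ".join(DOCS[doc_id] for doc_id in output["retrieved"])
        grounding += grounding_score(output["answer"], context)
    return {"hit_rate": hits / len(DATASET), "grounding": grounding / len(DATASET)}


baseline = evaluate(agent_v1)
candidate = evaluate(agent_v2)
regressed = candidate["hit_rate"] < baseline["hit_rate"]
```

Running the same dataset against both versions flags the regression before release: the candidate retrieves the wrong document for the shipping question and gives an answer that the retrieved context does not support.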

How to Choose the Right Tools for Context Engineering

Production agents break in predictable ways when requirements are unclear. When choosing your context engineering tools and frameworks, focus on clear system goals and constraints across memory, orchestration, execution, optimization, and evaluation.

- Define system requirements: Clarify whether the system is a single-turn assistant or a multi-step agent, then set targets for latency, cost, safety, audit needs, and data freshness. Requirements such as real-time data access or strict compliance constraints will shape every tooling choice.

- Choose an orchestration framework: Pick an orchestration framework based on workflow complexity. Tool-driven agents with stateful control flow and many integrations tend to map well to LangChain-style approaches. Retrieval-heavy applications with sophisticated indexing and query pipelines often map well to LlamaIndex-style approaches.

- Choose a memory and retrieval architecture: Match the memory layer to the kind of reasoning the application needs. Graphs work well for complex enterprise knowledge where relationships, provenance, and explainability matter. Knowledge graphs provide agents with a structured understanding of your domain. Context graphs provide agents with decision traces to improve their reasoning capabilities.

- Add execution capabilities: Define how the agent will act safely. MCP helps standardize tool integration with agents. Function calling helps constrain tool use with explicit schemas and predictable inputs and outputs. Structured outputs help downstream systems by producing responses that are easy to validate and parse.

- Optimize context efficiency: Reduce token waste with chunking and compression tuned to your content and query patterns. Effective chunking improves recall while lowering hallucination risk. Compression helps keep prompts small without losing key facts and constraints.

- Evaluate and monitor: Treat evaluation and observability as production requirements. Regression tests, grounding checks, and tracing help catch drift early and explain failures when the system changes.

Build Agentic Systems With Reliable Context

Context engineering is foundational for agentic AI systems because every step depends on the system supplying the right facts, constraints, and state at the right moment. Without it, tool use becomes brittle, retrieval drifts, and quality degrades as the application evolves.

Reliable agentic systems are built on reliable frameworks that orchestrate memory and tool use to maintain consistent context across multi-step reasoning and workflows. That includes selecting the right data at the right time, preserving state between actions, and ensuring every decision is grounded in evidence. It’s also important to keep context lean through compression and chunking, and evaluate for performance and hallucination.

Neo4j provides knowledge graphs and context graphs to make context reliable. By explicitly storing entities and relationships, they preserve the structure of enterprise domain knowledge and enable traceable decisions for reliable agentic AI systems.
