AI & Graph Technology: AI Explainability

Graph Analytics & AI Program Director

This week we reach the final blog in our five-part series on AI and graph technology. Our focus here is on how graphs can make the way AI reaches its decisions more transparent, an area known as AI explainability.

One challenge in AI adoption is understanding how an AI solution made a particular decision. AI explainability is still an emerging field, but a considerable body of research suggests that graphs make AI predictions easier to trace and explain.

This ability is crucial for long-term AI adoption because, in many industries – such as healthcare, credit risk scoring and criminal justice – we must be able to explain how and why decisions are made by AI. This is where graphs may add context for credibility.

There are many examples of machine learning and deep learning providing incorrect answers.

For example, classifiers can make associations that lead to miscategorization, such as classifying a dog as a wolf. It is sometimes a significant challenge to understand what led an AI solution to make that decision.

Explainability falls into three categories, depending on the type of question we’re asking: explainable data, explainable predictions and explainable algorithms.

Explainable data means that we know what data was used to train our model and why. Unfortunately, this isn’t as straightforward as we might think. If we consider a large cloud service provider, or a company like Facebook with an enormous amount of data, it’s difficult to know exactly which data was used to inform its algorithms.

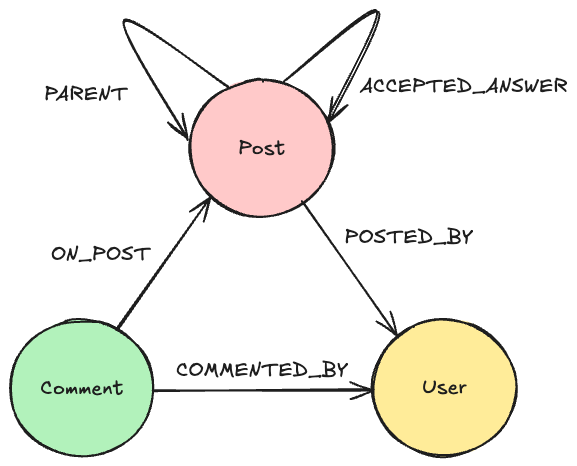

Graphs tackle the explainable data issue fairly directly through data lineage, a method employed by most of the top financial institutions today. It requires storing our data as a graph, but in return we can track how data has changed, where it is used and who used which data.
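
To make the lineage idea concrete, here is a minimal sketch in plain Python. It is not how any particular graph database or institution implements lineage. The `LineageGraph` class, the relationship names (`DERIVED_INTO`, `TRAINED`, `MODIFIED`, `ACCESSED`) and the dataset, model and user names are all hypothetical, chosen only to illustrate the three questions above: how data changed, where it is used and who used it.

```python
from collections import defaultdict

# Hypothetical relationship types, invented for this illustration.
DATA_FLOW = {"DERIVED_INTO", "TRAINED"}
USER_ACTIONS = {"MODIFIED", "ACCESSED"}


class LineageGraph:
    """A tiny in-memory lineage graph stored as labeled, directed edges."""

    def __init__(self):
        self._outgoing = defaultdict(list)  # source -> [(relation, target)]
        self._incoming = defaultdict(list)  # target -> [(relation, source)]

    def add_edge(self, source, relation, target):
        self._outgoing[source].append((relation, target))
        self._incoming[target].append((relation, source))

    def _walk(self, start, adjacency):
        # Depth-first traversal that follows data-flow relationships only.
        seen, stack = set(), [start]
        while stack:
            current = stack.pop()
            for relation, neighbor in adjacency.get(current, []):
                if relation in DATA_FLOW and neighbor not in seen:
                    seen.add(neighbor)
                    stack.append(neighbor)
        return seen

    def upstream(self, node):
        """Everything `node` was derived from, directly or indirectly."""
        return self._walk(node, self._incoming)

    def downstream(self, node):
        """Everywhere `node` ends up being used."""
        return self._walk(node, self._outgoing)

    def touched_by(self, node):
        """(user, action) pairs recorded directly against `node`."""
        return {
            (user, relation)
            for relation, user in self._incoming.get(node, [])
            if relation in USER_ACTIONS
        }


lineage = LineageGraph()
lineage.add_edge("raw_transactions", "DERIVED_INTO", "cleaned_transactions")
lineage.add_edge("cleaned_transactions", "DERIVED_INTO", "training_set_v1")
lineage.add_edge("training_set_v1", "TRAINED", "credit_model_v1")
lineage.add_edge("alice", "MODIFIED", "cleaned_transactions")
lineage.add_edge("bob", "ACCESSED", "training_set_v1")

# Which data ultimately fed the model?
print(sorted(lineage.upstream("credit_model_v1")))
# Who changed the cleaned data?
print(lineage.touched_by("cleaned_transactions"))
```

Because both data flow and user actions are just edges, answering "what data trained this model?" becomes a traversal rather than a search through logs.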

Explainable predictions are another area with significant potential. Here we want to know which features and weights were used for a particular prediction, and there’s active research into using graphs to make predictions more explainable.

For example, if we associate nodes in a neural network with a labeled knowledge graph, then whenever the network uses one of those nodes, we’ll have insight into all of that node’s associated data from the knowledge graph. This allows us to traverse the activated nodes and infer an explanation from the surrounding data.
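
The following is a toy sketch of that idea, not a description of any published method. It assumes we already have a mapping from network units to knowledge graph concepts, which is the hard, still-researched part. The `UNIT_TO_CONCEPT` mapping, the triples in `KNOWLEDGE_GRAPH`, the activation values and the threshold are all invented for illustration, loosely following the dog-versus-wolf example above.

```python
# Hypothetical mapping from hidden-unit index to a knowledge graph concept.
UNIT_TO_CONCEPT = {
    0: "pointed_ears",
    1: "snowy_background",
    2: "collar",
}

# Hypothetical knowledge graph as (subject, relation, object) triples.
KNOWLEDGE_GRAPH = [
    ("pointed_ears", "TYPICAL_OF", "wolf"),
    ("pointed_ears", "TYPICAL_OF", "dog"),
    ("snowy_background", "CO_OCCURS_WITH", "wolf"),
    ("collar", "TYPICAL_OF", "dog"),
]


def explain(activations, threshold=0.5):
    """List the concepts, and their graph facts, behind strongly activated units."""
    explanation = []
    for unit, value in enumerate(activations):
        if value < threshold:
            continue  # unit did not fire strongly enough to matter
        concept = UNIT_TO_CONCEPT.get(unit)
        if concept is None:
            continue  # unit has no labeled counterpart in the graph
        facts = [t for t in KNOWLEDGE_GRAPH if t[0] == concept]
        explanation.append(
            {"unit": unit, "activation": value, "concept": concept, "facts": facts}
        )
    return explanation


# Made-up activations for an image that was classified as "wolf".
result = explain([0.9, 0.8, 0.1])
for entry in result:
    print(entry["concept"], entry["facts"])
```

In this made-up case the traversal surfaces a background cue alongside an anatomical one, which is the kind of surrounding context that could help explain a miscategorization.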

Finally, explainable algorithms enable us to understand which individual layers and thresholds lead to a prediction. We are years from solutions in this area, but there is promising research that includes constructing a tensor in a graph with weighted linear relationships. Early signs indicate we may be able to determine explanations and coefficients at each layer.
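
Since this research is still early, the sketch below only illustrates the general intuition and should not be read as the approach the researchers take. It assumes a single linear layer whose weights are stored as weighted edges between hypothetical input nodes (`x1`, `x2`) and output nodes (`h1`, `h2`). With that representation, each edge's contribution to an output is simply its weight times its input value, so the coefficients behind a result can be read directly off the graph.

```python
# Hypothetical linear layer stored as (source, target, weight) edges.
EDGES = [
    ("x1", "h1", 1.5),
    ("x2", "h1", -0.25),
    ("x1", "h2", 1.0),
    ("x2", "h2", 2.0),
]

# Made-up input values for one example.
INPUTS = {"x1": 2.0, "x2": 4.0}


def contributions(edges, inputs, target):
    """Per-input contribution (weight * input value) to one output node."""
    return {src: w * inputs[src] for src, dst, w in edges if dst == target}


def output_value(edges, inputs, target):
    """The layer's linear output for `target` is the sum of its edge contributions."""
    return sum(contributions(edges, inputs, target).values())


h2_parts = contributions(EDGES, INPUTS, "h2")
top_input = max(h2_parts, key=lambda name: abs(h2_parts[name]))
print(h2_parts, "largest contributor:", top_input)
```

Real networks add nonlinearities and many layers, which is a large part of why general solutions remain a research topic.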

Conclusion

In this five-part blog series, we’ve considered four ways graphs add context for artificial intelligence: context for decisions with knowledge graphs, context for efficiency with graph accelerated ML, context for accuracy with connected feature extraction, and finally context for credibility with AI explainability.

AI and machine learning hold great potential. Graphs unlock that potential. That’s because graph technology incorporates context and connections that make AI more broadly applicable.

If you’re building an AI solution, you need graph technology to give it contextual power. The Neo4j Graph Platform empowers you to build intelligent applications faster, enabling you to find new markets, delight customers, improve outcomes and address your most pressing business challenges.

Read the white paper, Artificial Intelligence & Graph Technology: Enhancing AI with Context & Connections