APOC 1.1.0 Release: Awesome Procedures on Cypher

Head of Product Innovation & Developer Strategy, Neo4j

I’m super thrilled to announce last week’s 1.1.0 release of the Awesome Procedures on Cypher (APOC). A lot of new and cool stuff has been added and some issues have been fixed.

Thanks to everyone who contributed to the procedure collection, especially Stefan Armbruster, Kees Vegter, Florent Biville, Sascha Peukert, Craig Taverner, Chris Willemsen and many more.

And of course my thanks go to everyone who tried APOC and gave feedback, so that we could improve the library.

If you are new to Neo4j’s procedures and APOC, please start by reading the first article of my introductory blog series.

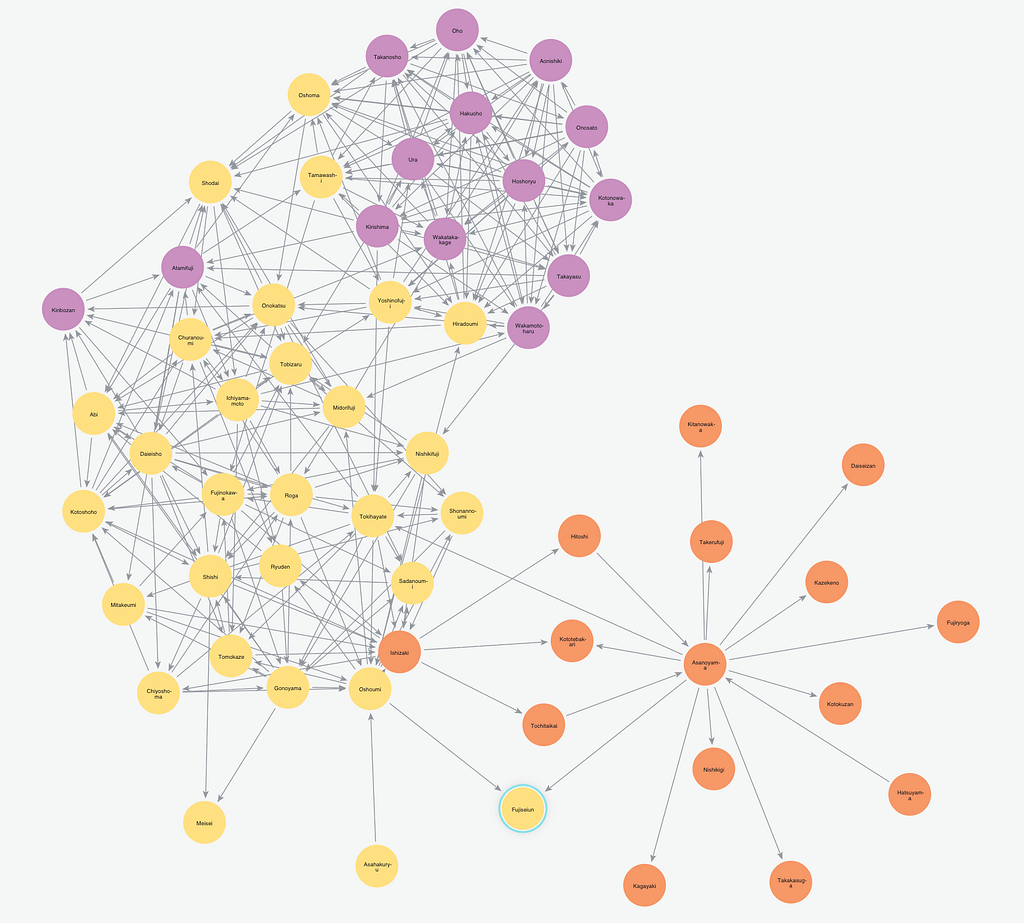

The APOC library was first released as version 1.0 in conjunction with the Neo4j 3.0 release at the end of April with around 90 procedures and was mentioned in Emil’s Neo4j 3.0 release keynote.

In early May we had a 1.0.1 release with a number of new procedures, especially around free text search, graph algorithms and geocoding. That release was also used by the journalists of the ICIJ for their downloadable Neo4j database of the Panama Papers.

And now, two months later, we’ve reached 200 procedures that are provided by APOC.

These cover a wide range of capabilities, some of which I want to discuss today. In each section of this post I’ll only list a small subset of the new procedures that were added.

If you want to get more detailed information, please check out the documentation with examples.

Notable Changes

As the 100 new procedures represent quite a change, I want to highlight the aspects of APOC that got extended or documented with more practical examples.

Metadata

Besides the apoc.meta.graph functionality that was there from the start, additional procedures to return and sample graph metadata have been added. Some, like apoc.meta.stats, access the transactional database statistics to quickly return information about label and relationship-type counts.

There are now also procedures to return and check the types of values and properties.

- examines a sample subgraph to create the meta-graph, default sampleSize is 100

- returns the information stored in the transactional database statistics

- type name of a value (

- returns a row if the type name matches, none if not
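As a rough sketch of how the statistics procedure mentioned above can be called: the YIELD column names used here are assumptions based on the APOC documentation and may differ in your version, so check the procedure's actual output first.

```cypher
// Assumed output columns; verify them against your APOC version.
CALL apoc.meta.stats()
YIELD labelCount, relTypeCount, propertyKeyCount, nodeCount, relCount
RETURN labelCount, relTypeCount, propertyKeyCount, nodeCount, relCount;
```

Because the values come from the database statistics rather than from a scan, a call like this should return quickly even on a large graph.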

Data Import / Export

The first export procedures output the provided graph data as Cypher statements in the format that neo4j-shell understands and that can also be read with apoc.cypher.runFile.

Indexes and constraints as well as batched sets of CREATE statements for nodes and relationships will be written to the provided file-path.

- exports whole database incl. indexes as cypher statements to the provided file

- exports given nodes and relationships incl. indexes as cypher statements to the provided file

- exports nodes and relationships from the cypher statement incl. indexes as cypher statements to the provided file
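A minimal sketch of a whole-database export could look like the following. The procedure name apoc.export.cypherAll and its (file, config) argument order are assumptions based on the APOC documentation for this release, the target path is hypothetical, and the empty map simply stands in for the config argument.

```cypher
// Hypothetical file path; the procedure name and (file, config) signature are assumptions.
CALL apoc.export.cypherAll('/tmp/all.cypher', {});
```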

Data Integration with Cassandra, MongoDB and RDBMS

Making integration with other databases easier is a big aspiration of APOC.

Being able to read and write data from these sources directly with Cypher statements is very powerful, because Cypher is an expressive data processing language that supports a variety of filtering, cleansing, conversion and preparation steps on the original data.

APOC integrates with relational (RDBMS) and other tabular databases like Cassandra using JDBC. Each row returned from a table or statement is provided as a map value to Cypher to be processed.

And for ElasticSearch the same is achieved by using the underlying JSON-HTTP functionality. For MongoDB, we support connecting via their official Java driver.

To avoid listing full database connection strings with usernames and passwords in your procedures, you can configure those in $NEO4J_HOME/conf/neo4j.conf using the apoc.{jdbc,mongodb,es}.<name>.url config parameters, and just pass name as the first parameter in the procedure call.

Here is a part of the Cassandra example from the data integration section of the docs using the Cassandra JDBC Wrapper.

Entry in neo4j.conf

```
apoc.jdbc.cassandra_songs.url=jdbc:cassandra://localhost:9042/playlist
```

```cypher
CALL apoc.load.jdbc('cassandra_songs', 'track_by_artist') YIELD row
MERGE (a:Artist {name: row.artist})
MERGE (g:Genre {name: row.genre})
CREATE (t:Track {id: toString(row.track_id), title: row.track, length: row.track_length_in_seconds})
CREATE (a)-[:PERFORMED]->(t)
CREATE (t)-[:GENRE]->(g);
// Added 63213 labels, created 63213 nodes, set 182413 properties, created 119200 relationships.
```

For each data source that you want to connect to, just provide the relevant driver in the $NEO4J_HOME/plugins directory as well. It will then be picked up automatically by APOC.

Even if you only want to visualize what kinds of graphs are hidden in that data, being able to do so without leaving the comfort of Cypher and the Neo4j Browser is already a big benefit.

To render virtual nodes, relationships and graphs, you can use the appropriate procedures from the apoc.create.* package.
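To give an idea of what that looks like, here is a small sketch that builds two virtual nodes and a virtual relationship between them. The labels and property values are made up for illustration, and the vNode and vRelationship signatures are assumptions taken from the APOC documentation.

```cypher
// Nothing is written to the database; these entities exist only in the query result.
CALL apoc.create.vNode(['Artist'], {name: 'Example Artist'}) YIELD node AS a
CALL apoc.create.vNode(['Track'], {title: 'Example Track'}) YIELD node AS t
CALL apoc.create.vRelationship(a, 'PERFORMED', {}, t) YIELD rel
RETURN a, rel, t;
```

Run in the Neo4j Browser, the result should render like any other small graph, which makes this handy for previewing a model before importing data.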

Controlled Cypher Execution

While individual Cypher statements can be run easily, more complex executions – like large data updates, background executions or parallel executions – are not yet possible out of the box.

These kinds of abilities are added by the apoc.periodic.* and apoc.cypher.* packages. In particular, apoc.periodic.iterate and apoc.periodic.commit are useful for batched updates.

Procedures like apoc.cypher.runMany allow execution of semicolon-separated statements and apoc.cypher.mapParallel allows parallel execution of partial or whole Cypher statements driven by a collection of values.

- runs each statement in the file, all semicolon separated – currently no schema operations

- runs each semicolon separated statement and returns summary – currently no schema operations

- executes fragment in parallel batches with the list segments being assigned to _

- repeats a batch update statement until it returns 0, this procedure is blocking

- submit a repeatedly-called background statement until it returns 0

- run the second statement for each item returned by the first statement. Returns number of batches and total processed rows
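The two batching procedures named earlier, apoc.periodic.commit and apoc.periodic.iterate, can be sketched as follows. The labels and properties are hypothetical, the config keys batchSize and parallel are assumptions from the APOC documentation, and the `{param}` syntax is the Neo4j 3.0 style (newer versions use `$param`).

```cypher
// commit: re-run the statement in separate transactions until it returns 0.
// The LIMIT keeps each transaction small.
CALL apoc.periodic.commit(
  "MATCH (u:User) WHERE NOT u:Processed
   WITH u LIMIT {limit}
   SET u:Processed
   RETURN count(*)",
  {limit: 10000});

// iterate: the first statement supplies the items, the second is applied to each
// of them in batches. Passing the item in as {p} is an assumption for this release.
CALL apoc.periodic.iterate(
  "MATCH (p:Person) WHERE p.fullName IS NULL RETURN p",
  "WITH {p} AS p SET p.fullName = p.firstName + ' ' + p.lastName",
  {batchSize: 10000, parallel: false})
YIELD batches, total
RETURN batches, total;
```

In both cases the point is the same: a large update is split into many small transactions instead of one huge one.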

Schema / Indexing

Besides the manual index update and query support that was already there in the APOC release 1.0, more manual index management operations have been added.

- lists all manual indexes

- removes manual indexes

- gets or creates manual node index

- gets or creates manual relationship index

There is pretty neat support for free text search that is also detailed with examples in the documentation. It allows you, with apoc.index.addAllNodes, to add a number of properties of nodes with certain labels to a free text search index which is then easily searchable with apoc.index.search.

- add all nodes to this full text index with the given properties, additionally populates a ‘search’ index

- search for the first 100 nodes in the given full text index matching the given lucene query, returned by relevance
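A hedged sketch of the free text search workflow described above: the index name, labels and properties are hypothetical, and both the map-of-labels argument and the `Label.property` form of the Lucene query are assumptions based on the APOC documentation.

```cypher
// Add the listed properties of Company and Person nodes to a full text index named 'locations'.
CALL apoc.index.addAllNodes('locations', {Company: ['name', 'description'], Person: ['name', 'address']});

// Query the index with Lucene syntax; the field name format is an assumption.
CALL apoc.index.search('locations', 'Company.name:Neo*') YIELD node, weight
RETURN node, weight;
```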

Collection & Map Functions

While Cypher already has great support for handling maps and collections, there are always some capabilities that are not possible yet. That’s where APOC’s map and collection functions come in. You can now dynamically create, clean and update maps.

- creates map from list with key-value pairs

- creates map from a keys and a values list

- creates map from alternating keys and values in a list

- returns the map with the value for this key added or replaced

- removes the keys and values (e.g. null-placeholders) contained in those lists, good for data cleaning from CSV/JSON
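The following sketch illustrates the map operations described above. It assumes they are invoked as procedures that yield a single `value` column; the procedure names and argument orders are taken from the APOC documentation and should be checked against your version.

```cypher
// Build the same map three ways: from pairs, from parallel lists, from alternating values.
CALL apoc.map.fromPairs([['name', 'Alice'], ['age', 42]]) YIELD value RETURN value;
CALL apoc.map.fromLists(['name', 'age'], ['Alice', 42]) YIELD value RETURN value;
CALL apoc.map.fromValues(['name', 'Alice', 'age', 42]) YIELD value RETURN value;

// Add or replace a single key.
CALL apoc.map.setKey({name: 'Alice'}, 'age', 42) YIELD value RETURN value;

// Drop the key 'tmp' and any entry whose value is the empty-string placeholder.
CALL apoc.map.clean({name: 'Alice', city: '', tmp: 1}, ['tmp'], ['']) YIELD value RETURN value;
```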

There are means to convert and split collections to other shapes and much more.

- partitions a list into sublists of

- all values in a list

- returns `[first,second],[second,third], …`

- returns a unique list backed by a set

- splits a collection on the given values into rows of lists; the value itself will not be part of the resulting lists

- position of value in the list
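A few of these reshaping operations can be sketched like this, again assuming procedure-style calls that yield a `value` column (the names apoc.coll.partition, apoc.coll.pairs and apoc.coll.toSet come from the APOC documentation):

```cypher
// Split a list into sublists of the given size; each sublist is expected as its own row.
CALL apoc.coll.partition([1, 2, 3, 4, 5], 2) YIELD value RETURN value;

// Pair each element with its successor.
CALL apoc.coll.pairs([1, 2, 3]) YIELD value RETURN value;

// Remove duplicates.
CALL apoc.coll.toSet([1, 1, 2, 3, 3]) YIELD value RETURN value;
```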

You can UNION, SUBTRACT and INTERSECT collections and much more.

- creates the distinct union of the 2 lists

- returns the unique intersection of the two lists

- returns the disjunct set of the two lists
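As a small illustration of the set operations, assuming the procedure names apoc.coll.union and apoc.coll.intersection from the APOC documentation and a single `value` output column:

```cypher
// Distinct union of two lists.
CALL apoc.coll.union([1, 2, 3], [3, 4, 5]) YIELD value RETURN value;

// Elements present in both lists.
CALL apoc.coll.intersection([1, 2, 3], [3, 4, 5]) YIELD value RETURN value;
```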

Graph Representation

There are a number of operations on a graph that return a subgraph of nodes and relationships. With the apoc.graph.* operations you can create such a named graph representation from a number of sources.

- creates a virtual graph object for later processing; it tries its best to extract the graph information from the data you pass in

- creates a virtual graph object for later processing

- creates a virtual graph object for later processing

- creates a virtual graph object for later processing

The idea is that on top of this graph representation other operations (like export or updates), but also graph algorithms, can be executed. The general structure of this representation is:

```
{
  name:"Graph name",
  nodes:[node1,node2],
  relationships: [rel1,rel2],
  properties:{key:"value1",key2:42}
}
```
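As a sketch of how such a representation might be produced from existing data: the procedure name apoc.graph.fromData, its (nodes, relationships, name, properties) argument order and the `graph` output column are assumptions based on the APOC documentation, and the labels and graph name are hypothetical.

```cypher
// Collect a set of nodes and relationships and wrap them in a named graph object.
MATCH (p:Person)-[r:KNOWS]->(q:Person)
WITH collect(DISTINCT p) + collect(DISTINCT q) AS nodes, collect(r) AS rels
CALL apoc.graph.fromData(nodes, rels, 'friends', {source: 'example'}) YIELD graph
RETURN graph;
```

The returned value should have the shape shown above, with the name, nodes, relationships and properties passed in.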

Plans for the Future

Of course, it doesn’t stop here. As outlined in the readme, there are many ideas for future development of APOC.

One area to be expanded is graph algorithms, along with the quality and performance of their implementation. We also want to support import and export capabilities, for instance for GraphML and binary formats.

Something that in the future should be more widely supported by APOC procedures is to work with a subgraph representation of a named set of nodes, relationships and properties.

Conclusion

There is a lot more to explore. Just take a moment and have a look at the wide variety of procedures listed in the readme.

Going forward I want to achieve a more regular release cycle of APOC. Every two weeks there should be a new release so that everyone benefits from bug fixes and new features.

Now, please:

- Try out the new functionality,

- Check out the growing APOC documentation,

- Provide feedback, report issues or suggest additions on the GitHub issue tracker or in the #apoc Slack channel on the public Neo4j-Users Slack.

Cheers,

Catch up with the rest of the Introduction to APOC blog series: