Evolving Your Application of GDS Technology


Today, graph data science (GDS) is usually applied in business with one or more major aims in mind: making better decisions, improving the quality of predictions, and creating new ways to innovate and learn. These goals are increasingly tied to tangible benefits, such as reduced financial loss, faster time to results, increased customer satisfaction, and predictive lift. You may be trying to improve or automate decision-making by people and domain experts who need additional context. Or perhaps your goal is to improve predictive accuracy by using relationships and network structure in analytics and machine learning (ML).

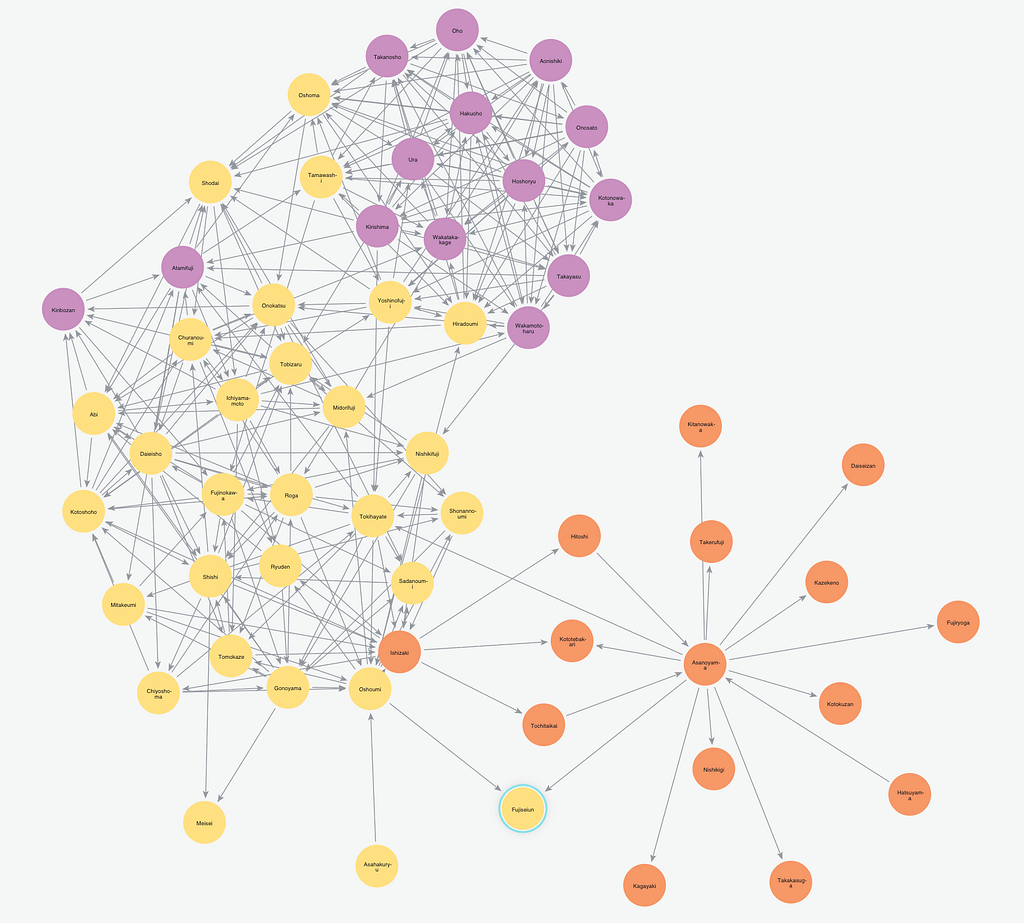

Graphs provide a unique structure for learning that helps evolve ML techniques through better abstraction and interpretability. These business goals map closely to how organizations integrate graph technology into their data science practices. Figure 3-1 diagrams the major phases of a typical GDS journey, and we cover each of these phases in this chapter. The first three phases are the most prevalent in the commercial world today, and the last two are emerging.

Figure 3-1: The GDS journey.

Your organization can use practical steps to gain immediate value and then layer more sophisticated techniques in a way that continually increases your return on effort.

Knowledge Graphs

Knowledge graphs are the foundation of GDS and offer a way to streamline workflows, automate responses, and scale intelligent decisions. At a high level, knowledge graphs are interlinked sets of data points that describe real-world entities, facts, or things and their relationships with each other in a human-understandable form. Unlike a simple knowledge base with flat structures and static content, a knowledge graph acquires and integrates adjacent information by using data relationships to derive new knowledge.

As the first phase in GDS, knowledge graphs are often implemented to bring together diverse information, helping domain experts find related content and explore the connections in their data. Knowledge graphs can also add context to applications, such as artificial intelligence (AI) systems, so those systems can make better and faster approximate decisions. Chatbots, for example, can use a knowledge graph to better route a request for a “bat for my husband’s birthday.” In this case, the graph captures that the person is most likely looking not for a flying mammal but for higher-quality sporting goods suited to a special occasion. The chatbot can also take into account what’s in stock, shipping times, and specialty products, combining the context of not only the requester but also of supply and other logistics.
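To make the “bat” example concrete, here is a minimal sketch, assuming a toy knowledge graph stored as subject-relation-object triples. Every entity, relation name, and helper function is hypothetical. The idea is that each candidate meaning of “bat” is scored by how much of the request’s context (here, “birthday”) lies within a few relationships of it in the graph.

```python
# A toy knowledge graph as (subject, relation, object) triples.
# All entities and relation names are hypothetical, for illustration only.
TRIPLES = [
    ("bat", "sense", "baseball bat"),
    ("bat", "sense", "flying mammal"),
    ("baseball bat", "is_a", "sporting goods"),
    ("flying mammal", "is_a", "animal"),
    ("sporting goods", "suitable_for", "gift"),
    ("birthday", "occasion_for", "gift"),
    ("baseball bat", "in_stock", "yes"),
]


def neighbors(entity):
    """Return every entity directly linked to `entity`, in either direction."""
    out = set()
    for s, _, o in TRIPLES:
        if s == entity:
            out.add(o)
        elif o == entity:
            out.add(s)
    return out


def reachable(start, max_hops):
    """Collect all entities within `max_hops` relationships of `start`."""
    seen, frontier = {start}, {start}
    for _ in range(max_hops):
        frontier = {n for e in frontier for n in neighbors(e)} - seen
        seen |= frontier
    return seen


def disambiguate(term, context_terms, max_hops=3):
    """Pick the sense of `term` whose graph neighborhood covers the most context terms."""
    senses = [o for s, r, o in TRIPLES if s == term and r == "sense"]

    def score(sense):
        nearby = reachable(sense, max_hops)
        return sum(1 for c in context_terms if c in nearby)

    return max(senses, key=score)


print(disambiguate("bat", ["birthday"]))  # → baseball bat
```

“Birthday” is three relationships away from “baseball bat” (via “sporting goods” and “gift”) but not reachable from “flying mammal” within three hops, so the sporting-goods sense wins. A production system would weigh many more signals, such as stock and shipping data.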

Graph Analytics

After implementing a knowledge graph (see the preceding section), businesses often start using graph analytics to understand their networks better and answer specific questions based on relationships and topology. You’re often trying to infer meaning based on the network structure: finding clusters, identifying influential nodes, evaluating different pathways. Graph analytics usually refers to the use of global queries and algorithms that look at entire graphs for offline analysis of historical data. This contrasts with small, real-time transactions and local queries that focus on the neighborhood around a few nodes.

Graph queries are used when you know exactly what you’re looking for, such as “How many relationships does Mia have?” or “How many fraudsters or flagged accounts are four hops away?” (A hop is one level, or layer, of relationships.) These kinds of queries seem simple because we can imagine standing in one place and looking at what’s close to us. However, solutions that don’t store relationships alongside their data must perform extra work to look up and join this related information. Graphs store relationships together with data, so following a path of relationships is simple and fast. Native graph databases are particularly good at multi-hop queries because they store and process related information adjacently and treat relationships as first-class citizens, which avoids expensive index lookups and data joins.
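Both example questions can be sketched in a few lines, assuming a small hypothetical account graph held as an adjacency list. Keeping each node’s relationships directly alongside it means every hop is a direct lookup rather than a join, which is loosely analogous to how a native graph store follows relationships. The names, edges, and flagged set below are invented for illustration.

```python
from collections import deque

# Hypothetical undirected account graph: each node lists its direct relationships.
GRAPH = {
    "Mia": ["Ann", "Bob", "Cy"],
    "Ann": ["Mia", "Dee"],
    "Bob": ["Mia", "Eve"],
    "Cy": ["Mia"],
    "Dee": ["Ann", "Fay"],
    "Eve": ["Bob", "Gus"],
    "Fay": ["Dee"],
    "Gus": ["Eve", "Hal"],
    "Hal": ["Gus"],
}
FLAGGED = {"Fay", "Gus", "Hal"}  # hypothetical flagged accounts


def degree(node):
    """'How many relationships does Mia have?' is a single adjacency lookup."""
    return len(GRAPH[node])


def within_hops(start, max_hops):
    """Breadth-first search returning {node: hop distance} for nodes 1..max_hops away."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue  # do not expand past the hop limit
        for nbr in GRAPH[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    del dist[start]
    return dist


def flagged_within(start, max_hops):
    """'How many flagged accounts are within N hops?'"""
    return sorted(n for n in within_hops(start, max_hops) if n in FLAGGED)


print(degree("Mia"))             # → 3
print(flagged_within("Mia", 4))  # → ['Fay', 'Gus', 'Hal']
```

Breadth-first search visits nodes in order of hop distance, so stopping at `max_hops` keeps the query local to the starting node instead of scanning the whole graph.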

Graph algorithms are a subset of data science algorithms that originated in network science and enable reasoning about structure in a more unsupervised fashion. They’re used when you know the pattern or indicator you’re looking for but not exactly what you’ll find. For example, you may be looking for unusually tight communities where nodes have more relationships with each other than you’d expect in a random network. To find these communities, you could use the Louvain Modularity algorithm, which uncovers clusters whose members interact more densely with each other than with nodes outside the group.
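Louvain works by repeatedly moving nodes between communities, and then merging communities, whenever doing so increases a score called modularity. The sketch below computes only that score, not the full Louvain search, using the standard per-community form Q = Σ_c [L_c / m − (d_c / 2m)²], where m is the number of edges, L_c the edges inside community c, and d_c the total degree of c’s nodes. The graph and partitions are hypothetical.

```python
# Hypothetical undirected graph: two triangles joined by one bridge edge.
EDGES = [
    ("a", "b"), ("b", "c"), ("a", "c"),  # tight group 1
    ("d", "e"), ("e", "f"), ("d", "f"),  # tight group 2
    ("c", "d"),                          # bridge between the groups
]


def modularity(edges, community):
    """Q = sum over communities c of (L_c / m) - (d_c / 2m)^2."""
    m = len(edges)
    internal = {}    # L_c: number of edges with both endpoints in community c
    degree_sum = {}  # d_c: sum of node degrees in community c
    for u, v in edges:
        cu, cv = community[u], community[v]
        degree_sum[cu] = degree_sum.get(cu, 0) + 1
        degree_sum[cv] = degree_sum.get(cv, 0) + 1
        if cu == cv:
            internal[cu] = internal.get(cu, 0) + 1
    return sum(internal.get(c, 0) / m - (d / (2 * m)) ** 2
               for c, d in degree_sum.items())


split = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
together = {n: 0 for n in "abcdef"}
print(round(modularity(EDGES, split), 3))  # → 0.357
print(modularity(EDGES, together))         # → 0.0
```

Splitting the graph along the bridge scores higher than lumping every node together, which is exactly the kind of improvement a Louvain-style search climbs toward.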

The graph algorithms most prevalent in commercial applications fall into roughly six categories:


- Pathfinding and search: These algorithms are foundational to graph analytics and explore paths between nodes. They evaluate routes for uses such as physical logistics and least-cost call or Internet Protocol (IP) routing.

- Centrality (importance): Centrality algorithms help you uncover the roles of individual nodes and their impact. They identify influential nodes based on their position in the network. These algorithms infer group dynamics, such as credibility, rippling vulnerability, and bridges between groups.

- Community detection: These algorithms find communities whose members interact more with each other than with the rest of the network. These connections reveal tight clusters, isolated groups, and other structures. This information helps predict similar behavior or preferences, estimate resilience, find duplicate entities, or simply prepare data for other analyses.

- Similarity: These algorithms employ set comparisons to look at how alike individual nodes are. The properties and attributes of nodes are used to score the likeness between nodes. This approach is used in applications such as personalized recommendations as well as developing categorical hierarchies.

- Heuristic link prediction: These algorithms consider the proximity of nodes in the network as well as structural elements, such as possible triangles between nodes, to estimate the likelihood that a new relationship will form or that an undocumented connection already exists. This class of algorithms has many applications, from drug repurposing to criminal investigations.

- Graph embedding: These algorithms translate the topology and attributes of a graph into a numerical representation that can be used for feature engineering (see the next section, “Graph Feature Engineering,” for more information), similarity calculations, or visualizations. Unlike traditional graph algorithms that apply fixed formulas, embeddings learn the representation from your graph using neural network models (deep learning) or linear algebra.
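As a rough illustration of four of these categories, the sketch below runs one simple, textbook representative of each on a hypothetical four-node weighted graph. These choices are ours for illustration and are not tied to any particular product: Dijkstra’s algorithm for pathfinding, degree centrality for centrality, Jaccard similarity for similarity, and common-neighbor counts for heuristic link prediction.

```python
import heapq

# Hypothetical undirected graph; edge weights are link costs.
WEIGHTED = {
    "A": {"B": 4, "C": 1},
    "B": {"A": 4, "C": 2, "D": 5},
    "C": {"A": 1, "B": 2, "D": 8},
    "D": {"B": 5, "C": 8},
}


def dijkstra(graph, source, target):
    """Pathfinding: least-cost path from source to target (Dijkstra's algorithm)."""
    queue = [(0, source, [source])]
    visited = set()
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in visited:
            continue
        visited.add(node)
        for nbr, weight in graph[node].items():
            if nbr not in visited:
                heapq.heappush(queue, (cost + weight, nbr, path + [nbr]))
    return float("inf"), []


def degree_centrality(graph):
    """Centrality: fraction of the other nodes each node is directly connected to."""
    n = len(graph)
    return {node: len(nbrs) / (n - 1) for node, nbrs in graph.items()}


def jaccard(graph, u, v):
    """Similarity: shared neighbors divided by all distinct neighbors of u and v."""
    a, b = set(graph[u]), set(graph[v])
    return len(a & b) / len(a | b) if a | b else 0.0


def common_neighbors(graph, u, v):
    """Link prediction heuristic: more shared neighbors suggests a likelier link."""
    return len(set(graph[u]) & set(graph[v]))


print(dijkstra(WEIGHTED, "A", "D"))          # → (8, ['A', 'C', 'B', 'D'])
print(degree_centrality(WEIGHTED)["B"])      # → 1.0
print(jaccard(WEIGHTED, "A", "D"))           # → 1.0
print(common_neighbors(WEIGHTED, "A", "D"))  # → 2
```

Nodes A and D are not connected, yet they share both of their neighbors. Their perfect Jaccard score and two common neighbors are precisely the signal a heuristic link predictor would flag as a likely missing or future relationship.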

In graph analytics, you’re either asking a targeted question or looking at the graph as a whole to infer meaning or make predictions about future behavior.

Graph Feature Engineering

Graph feature engineering is the process of finding, combining, and extracting predictive elements from raw graph data to be used in ML tasks. More information generally makes ML models more accurate, but data scientists rarely have as much data as they’d like. Because relationships are extremely predictive of behavior and they inherently exist inside current data, you can employ graph feature engineering to improve predictions and increase ML model accuracy – with the data you already have.

Graph feature engineering uses relationships and network structures to create new, more meaningful features. It’s the next step in applying what you learn from graph analytics to ML. For example, you could score nodes based on a query that computes how many fraudsters are four hops out, or use a centrality algorithm to measure importance. You could also label nodes based on their community ID. (The community ID is assigned by the community detection algorithm.) These scores and labels can then be extracted to a list or table of numbers and identifiers (also called a feature vector) for training ML models. The graph features and resulting ML metrics are often written back to the graph database for persistence and future use.
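Here is a minimal sketch of turning graph structure into a feature table, assuming a hypothetical account graph, a hypothetical set of known fraudsters, and community IDs hard-coded as if a community detection algorithm had already assigned them. Each row becomes one node’s feature vector: its degree, the number of fraudsters within k hops, and its community ID.

```python
from collections import deque

# Hypothetical undirected account graph and known fraudsters.
GRAPH = {
    "acct1": ["acct2", "acct3"],
    "acct2": ["acct1", "acct4"],
    "acct3": ["acct1"],
    "acct4": ["acct2", "acct5"],
    "acct5": ["acct4"],
}
FRAUDSTERS = {"acct5"}
# Community IDs as a community detection algorithm might assign them (hypothetical).
COMMUNITY = {"acct1": 0, "acct2": 0, "acct3": 0, "acct4": 1, "acct5": 1}


def hop_distances(start):
    """Breadth-first search: hop distance from `start` to every reachable node."""
    dist = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nbr in GRAPH[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def fraud_within(node, max_hops):
    """Count known fraudsters 1..max_hops away (the node itself is excluded)."""
    return sum(1 for n, d in hop_distances(node).items()
               if 0 < d <= max_hops and n in FRAUDSTERS)


def feature_table(max_hops):
    """One row per node: identifier plus graph-derived features."""
    header = ["node", "degree", "fraud_within_k", "community_id"]
    rows = [[n, len(GRAPH[n]), fraud_within(n, max_hops), COMMUNITY[n]]
            for n in sorted(GRAPH)]
    return header, rows


header, rows = feature_table(max_hops=2)
print(header)
for row in rows:
    print(row)
```

Because a community ID is a category rather than a quantity, it would typically be one-hot encoded before training. The same columns could also be written back to the graph as node properties, as described above.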

Figure 3-2 shows how the use of graph features to enhance ML is part of a larger workflow with some example technologies for illustration.

For graph-enhanced ML, you typically aggregate, explore, and cleanse data and then use graph queries or algorithms for feature engineering. Next, you prepare the data for ML and split it into training and testing datasets. The process isn’t completely linear, but once you’ve trained a model and are happy with the results, you can use the model in production. Although the model may serve real-time transactions in production, such as approving credit applications online, the graph feature engineering and ML training are done offline and updated periodically in a cyclical process. Graph feature engineering offers organizations attainable model improvements without needing to change their ML pipelines.
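The split-train-evaluate step that follows feature engineering might look like the sketch below. It assumes hypothetical rows of graph-derived features (degree and fraudsters within k hops) with a fraud label. As a stand-in for a real ML library, it uses a simple nearest-centroid classifier, chosen only because it fits in a few lines of standard Python.

```python
import random

# Hypothetical graph-derived feature rows: (degree, fraud_within_k, label); label 1 = fraud.
ROWS = [
    (2, 0, 0), (3, 0, 0), (1, 0, 0), (2, 0, 0), (4, 0, 0),
    (2, 2, 1), (1, 3, 1), (3, 2, 1), (2, 3, 1), (1, 2, 1),
]


def train_test_split(rows, test_fraction=0.3, seed=42):
    """Shuffle a copy of the rows reproducibly, then hold out a test fraction."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def fit_centroids(train):
    """'Train' by averaging the feature vectors of each class."""
    sums, counts = {}, {}
    for row in train:
        x, y = row[:-1], row[-1]
        s = sums.setdefault(y, [0.0] * len(x))
        for i, v in enumerate(x):
            s[i] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in s] for y, s in sums.items()}


def predict(centroids, x):
    """Assign the class whose centroid is closest in squared Euclidean distance."""
    return min(centroids,
               key=lambda y: sum((a - b) ** 2 for a, b in zip(x, centroids[y])))


def accuracy(centroids, test):
    """Fraction of held-out rows classified correctly."""
    correct = sum(predict(centroids, row[:-1]) == row[-1] for row in test)
    return correct / len(test)


train, test = train_test_split(ROWS)
model = fit_centroids(train)
print(f"held-out accuracy: {accuracy(model, test):.2f}")
```

Holding out test rows before training is what lets you judge whether the graph features actually generalize. In practice you would swap in your existing ML library at this step, which is why adding graph features need not change the rest of the pipeline.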

Figure 3-2: Graph feature engineering is part of a larger ML workflow.

Graph Embedding

Graph embedding simplifies graphs or parts of graphs into a feature vector, or set of vectors, in a lower-dimensional form, such as a list of numbers. The goal is to create easily consumable data for tasks like ML that still describes more intricate topology, connectivity, or node attributes. For example, you can represent an entire graph or a path as an embedding and then learn based on the graph or paths themselves. There are three types of graph embeddings:


- Node embeddings describe connectivity of each node.

- Path embeddings encompass the traversals across a graph.

- Graph embeddings encode an entire graph into a single vector.

Graph embedding is often used for more advanced feature engineering that incorporates more complex information, which is why this phase typically comes later in the GDS journey. Embeddings can also be useful for data exploration, computing similarity between entities, and reducing dimensionality to aid in statistical analysis. Graph embedding offers the ability to more widely use the rich structures that make up graphs in various data science tasks and learn based on nuanced information.
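To show how a node embedding can capture connectivity and support similarity calculations, here is a simplified random-projection sketch. It is loosely in the spirit of the FastRP approach, but it is not the implementation used by any particular library, and the graph, dimension, and seed are hypothetical. Each node starts with a random ±1 vector, then repeatedly takes the average of its neighbors’ vectors, and the propagated vectors are summed into the final embedding.

```python
import math
import random

# Hypothetical undirected graph: "a" and "b" have exactly the same neighbors.
GRAPH = {
    "a": ["c", "d"],
    "b": ["c", "d"],
    "c": ["a", "b", "e"],
    "d": ["a", "b"],
    "e": ["c"],
}


def random_projection_embeddings(graph, dim=8, iterations=2, seed=7):
    """Simplified random-projection node embeddings (illustrative, FastRP-like)."""
    rng = random.Random(seed)
    nodes = sorted(graph)
    # Start from a random +1/-1 vector per node (the random projection basis).
    current = {n: [rng.choice((-1.0, 1.0)) for _ in range(dim)] for n in nodes}
    embedding = {n: [0.0] * dim for n in nodes}
    for _ in range(iterations):
        # Each node's new vector is the average of its neighbors' current vectors.
        current = {
            n: [sum(current[m][i] for m in graph[n]) / max(len(graph[n]), 1)
                for i in range(dim)]
            for n in nodes
        }
        # Accumulate the propagated vectors into the final embedding.
        for n in nodes:
            embedding[n] = [e + c for e, c in zip(embedding[n], current[n])]
    return embedding


def cosine(u, v):
    """Cosine similarity between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


emb = random_projection_embeddings(GRAPH)
print(emb["a"] == emb["b"])  # → True
```

Because “a” and “b” are connected to exactly the same nodes, they receive identical embeddings, so any similarity measure on the vectors treats them as equivalent. This is the property that makes embeddings useful both as ML features and for finding similar entities.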

Graph Networks

Graph networks are an exciting new approach to ML that may drastically improve results with less data, make predictions more explainable, and lead to new types of learning itself. Graph network and graph native learning are terms coined by Peter Battaglia and a group of researchers. They concluded that using graphs for ML was the next major advancement in ML itself because of the graph’s ability to abstract topology. Their thinking follows this approach:

- Native graph learning takes a graph as an input, performs learning computations while preserving transient states, and then returns a graph.

- This native graph learning process allows the domain expert to review and validate the learning path, which leads to more explainable predictions.

- With this process come richer and more accurate predictions that use less data and fewer training cycles.
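The “graph in, graph out” idea can be sketched as a single update step, loosely modeled on the graph network block described by Battaglia and colleagues. This is a simplified illustration, not their implementation. In a real graph network, each update function is a learned neural network; here the weights (0.5, 0.25, 0.1) are hypothetical hand-picked constants, and every feature is a single number.

```python
from dataclasses import dataclass


@dataclass
class Graph:
    nodes: dict     # node id -> feature (float)
    edges: list     # (sender, receiver, feature) triples
    globals_: float  # one graph-level feature


def gn_block(g: Graph) -> Graph:
    """One simplified graph-network step: takes a graph and returns a new graph."""
    # 1. Edge update: combine each edge's feature with its endpoints' features.
    new_edges = [(s, r, 0.5 * e + 0.25 * (g.nodes[s] + g.nodes[r]))
                 for s, r, e in g.edges]
    # 2. Aggregate: sum the updated features of each node's incoming edges.
    incoming = {n: 0.0 for n in g.nodes}
    for _, r, e in new_edges:
        incoming[r] += e
    # 3. Node update: mix each node's own state, its messages, and the global state.
    new_nodes = {n: 0.5 * v + 0.5 * incoming[n] + 0.1 * g.globals_
                 for n, v in g.nodes.items()}
    # 4. Global update: average the updated node and edge features.
    mean_node = sum(new_nodes.values()) / len(new_nodes) if new_nodes else 0.0
    mean_edge = sum(e for _, _, e in new_edges) / len(new_edges) if new_edges else 0.0
    return Graph(new_nodes, new_edges, 0.5 * (mean_node + mean_edge))


g_in = Graph({"x": 1.0, "y": 2.0, "z": 0.0}, [("x", "y", 1.0), ("y", "z", 0.0)], 0.0)
g_out = gn_block(g_in)
print(g_out.nodes)  # → {'x': 0.5, 'y': 1.625, 'z': 0.25}
```

The output has the same nodes and the same sender-receiver pairs as the input, with only the features updated. That preserved structure is what lets several steps be stacked and lets a person trace how information moved through the graph to reach a prediction.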

Graph native learning automates the identification of relevant features, learning what’s predictive in your data for your specific problem. Today, the valuable time of data scientists and domain experts is frequently employed to tediously test potentially predictive data and compare results before handcrafting an optimal model. Streamlining the process positively impacts ML results across all applications. We’re excited by early progress and look forward to seeing graph-based ML evolve to be extremely efficient and flexible as well as more accurate and transparent.