Graph algorithms in Neo4j: Connected data & graph analysis


Until recently, adopting graph analytics required significant expertise and determination, since tools and integrations were difficult and few knew how to apply graph algorithms to their quandaries and business challenges. It is our goal to help change this.

We are writing this blog series to help organizations better leverage graph analytics so they can make new discoveries and develop intelligent solutions faster.

While there are other graph algorithm libraries and solutions, this series focuses on the graph algorithms in the Neo4j platform. However, you’ll find these blogs helpful for understanding more general graph concepts regardless of what graph database you use.

This week we’ll explore why graph algorithms are required to analyze today’s connected data.

Today’s data needs graph algorithms

Connectivity is the single most pervasive characteristic of today’s networks and systems.

From protein interactions to social networks, from communication systems to power grids, and from retail experiences to supply chains, networks with even a modest degree of complexity are not random, which means their connections are neither evenly distributed nor static.

This is why simple statistical analysis alone fails to sufficiently describe – let alone predict – behaviors within connected systems. Consequently, most big data analytics today do not adequately model the connectedness of real-world systems and have fallen short in extracting value from huge volumes of interrelated data.
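To make this concrete, here is a small, hypothetical Python illustration (not taken from the original series) of two tiny networks that share the same summary statistics but not the same structure: a star, where one hub touches every other node, and a path, where the nodes form a simple chain. The helper name `summarize` is our own.

```python
from collections import Counter

def summarize(edges):
    """Return (node count, edge count, mean degree, max degree) for an undirected edge list."""
    degree = Counter(v for edge in edges for v in edge)  # each endpoint counted once per edge
    mean_degree = sum(degree.values()) / len(degree)
    return len(degree), len(edges), mean_degree, max(degree.values())

n = 10
star = [(0, i) for i in range(1, n)]       # node 0 is a hub linked to every other node
path = [(i, i + 1) for i in range(n - 1)]  # nodes linked in a single chain

for name, edges in (("star", star), ("path", path)):
    nodes, m, mean_degree, max_degree = summarize(edges)
    print(f"{name}: nodes={nodes} edges={m} mean degree={mean_degree:.1f} max degree={max_degree}")
```

Both networks have 10 nodes, 9 edges, and a mean degree of 1.8, yet the star's hub has degree 9 while no node in the path exceeds degree 2. The averages alone cannot tell these two structures apart.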

As the world becomes increasingly interconnected and systems increasingly complex, it’s imperative that we use technologies built to leverage relationships and their dynamic characteristics.

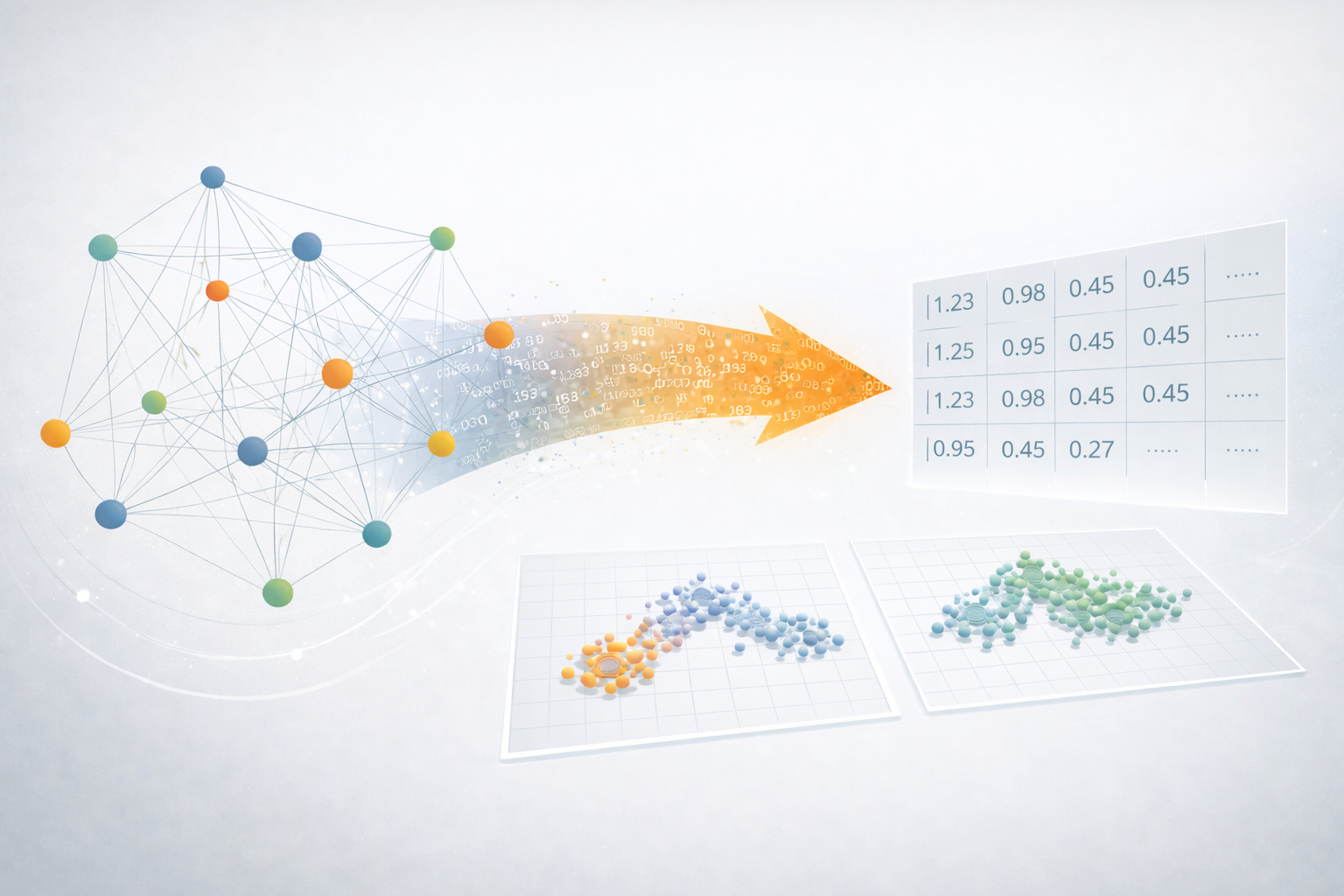

Not surprisingly, interest in graph analytics has exploded, because graph analytics was developed explicitly to gain insights from connected data. It reveals the workings of intricate systems and networks at massive scales, not only for large labs but for any organization. Graph algorithms are the processes that drive this analysis: they run calculations based on mathematics developed specifically for connected information.
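As one illustration of such a calculation, here is a minimal power-iteration sketch of PageRank, a well-known graph algorithm that scores each node by how many nodes link to it and how important those linking nodes are. It is plain Python on a made-up four-node graph; the function name, the damping factor of 0.85, and the fixed iteration count are assumptions for the example, not Neo4j's implementation.

```python
def pagerank(graph, damping=0.85, iterations=50):
    """Power-iteration PageRank for a dict mapping each node to the nodes it links to.

    Every link target must also appear as a key in `graph`.
    """
    nodes = list(graph)
    n = len(nodes)
    rank = {v: 1.0 / n for v in nodes}  # start with a uniform score
    for _ in range(iterations):
        # Every node receives a baseline share from the "random jump" term.
        new_rank = {v: (1.0 - damping) / n for v in nodes}
        for v in nodes:
            targets = graph[v]
            if targets:
                # Split this node's score evenly across its outgoing links.
                share = damping * rank[v] / len(targets)
                for w in targets:
                    new_rank[w] += share
            else:
                # Dangling node with no outgoing links: spread its score over all nodes.
                for w in nodes:
                    new_rank[w] += damping * rank[v] / n
        rank = new_rank
    return rank

links = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
for node, score in sorted(pagerank(links).items(), key=lambda kv: -kv[1]):
    print(f"{node}: {score:.3f}")
```

In this toy graph, C ends up with the highest score because three of the four nodes link to it, while D, which nothing links to, keeps only the baseline share. The scores always sum to 1.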

Making sense of connected data

There are four to five “Vs” often used to help define big data (volume, velocity, variety, veracity and sometimes value) and yet there’s almost always one powerful “V” missing: valence.

In chemistry, valence is the combining power of an element; in psychology, it is the intrinsic attractiveness of an object; and in linguistics, it's the number of elements a word combines with.

Although valence has a specific meaning in certain disciplines, in almost all cases there is an element of connection and behavior within a larger system. In the context of big data, valence is the tendency of individual data to connect as well as the overall connectedness of datasets.

Some researchers measure the valence of a data collection as the ratio of actual connections to the total number of possible connections, which in graph terms is the network's density. The more connections within your dataset, the higher its valence.
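For an undirected network with n nodes and m distinct connections (and no self-loops), there are n(n-1)/2 possible connections, so the ratio described here works out to 2m / (n(n-1)). The Python sketch below uses a hypothetical `density` helper and made-up example data to show the calculation and how adding a single connection raises the score.

```python
def density(nodes, edges):
    """Ratio of distinct undirected edges to the n*(n-1)/2 edges that are possible."""
    n = len(nodes)
    if n < 2:
        return 0.0  # fewer than two nodes means no possible connections
    # Treat (a, b) and (b, a) as the same connection and ignore self-loops.
    distinct = {frozenset(edge) for edge in edges if edge[0] != edge[1]}
    possible = n * (n - 1) / 2
    return len(distinct) / possible

nodes = ["A", "B", "C", "D"]
edges = [("A", "B"), ("B", "C"), ("C", "A"), ("A", "D")]
print(density(nodes, edges))                 # 4 of 6 possible connections
print(density(nodes, edges + [("B", "D")]))  # 5 of 6 possible connections
```

The sketch assumes undirected relationships; for directed relationships, the number of possible connections would be n(n-1) instead.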

Your data wants to connect, to form new data aggregations and subsets, and then connect to more data and so forth. Moreover, data doesn’t arbitrarily connect for its own sake; there’s significance behind every connection it makes. In turn, this means that the meaning behind every connection is decipherable after the fact.

Although this may sound like something that’s mainly applicable in a biological context, most complex systems exhibit this tendency. In fact, we can see this in our daily lives with a simple example of highly targeted purchase recommendations based on the connections between our browsing history, shopping habits, demographics, and even current location. Big data has valence – and it’s strong.

Scientists have observed the growth of networks and the relationships within them for some time. Yet there is still much to understand and active work underway to further quantify and uncover the dynamics behind this growth.

What we do know is that valence increases over time, but not uniformly. Scientists have described preferential attachment (the rich get richer) as leading to power-law distributions and scale-free networks with hub-and-spoke structures.

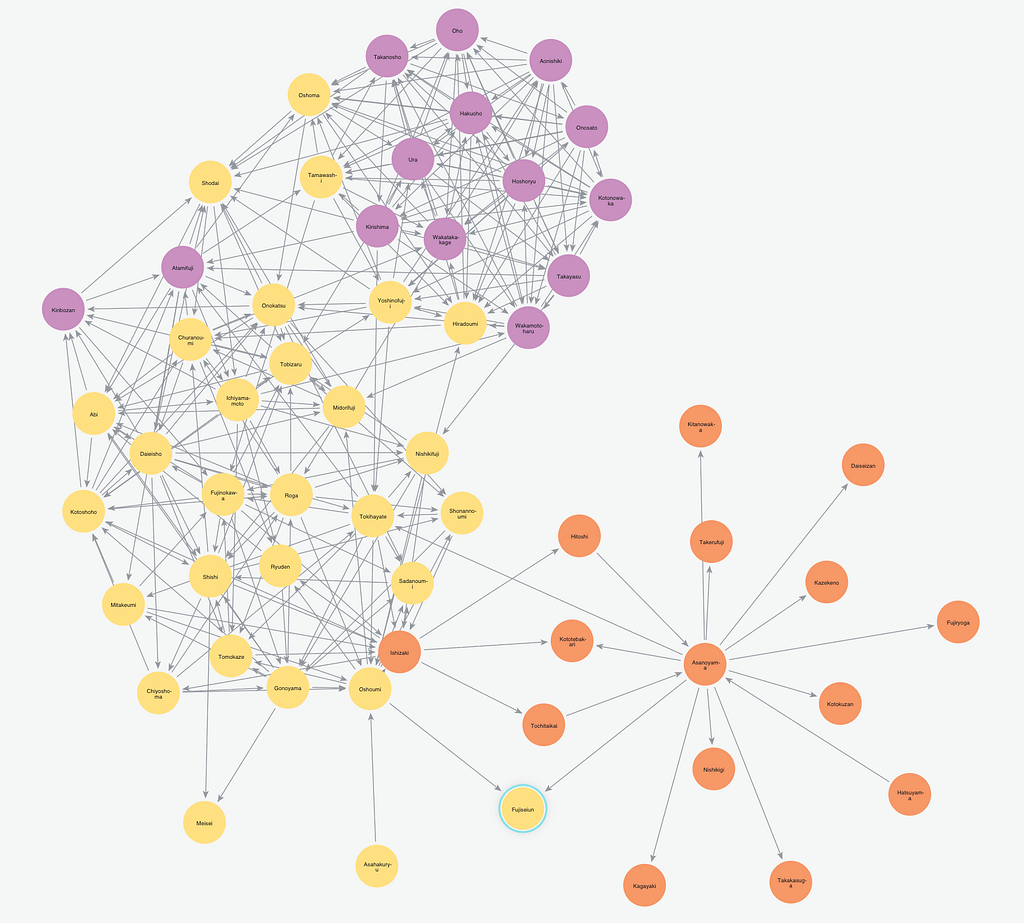

Highly dense and lumpy data networks tend to develop, in effect growing both your big data and its complexity. This is significant because densely yet unevenly connected data is very difficult to unpack and explore with traditional analytics.
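The preferential-attachment growth described earlier can be simulated in a few lines. The following is a minimal Barabási-Albert-style sketch in plain Python, our own illustration rather than anything from Neo4j. The function name `preferential_attachment`, its parameters (`n` nodes in total, `m` links per new node), and the fixed seed are all assumptions chosen for the example.

```python
import random
from collections import Counter

def preferential_attachment(n, m, seed=42):
    """Grow an undirected graph in which each new node links to m existing nodes,
    chosen with probability proportional to their current degree."""
    if m < 1 or n <= m:
        raise ValueError("need 1 <= m < n")
    rng = random.Random(seed)
    # Seed graph: a small clique of m + 1 nodes, so every node starts with degree >= 1.
    edges = [(i, j) for i in range(m + 1) for j in range(i + 1, m + 1)]
    # Each node appears here once per edge endpoint, so a uniform pick from this
    # list is a degree-proportional pick of a node.
    endpoints = [v for edge in edges for v in edge]
    for new in range(m + 1, n):
        targets = set()
        while len(targets) < m:  # repeated picks are simply drawn again
            targets.add(rng.choice(endpoints))
        for t in targets:
            edges.append((new, t))
            endpoints.extend((new, t))
    return edges

edges = preferential_attachment(n=2000, m=2)
degree = Counter(v for edge in edges for v in edge)
mean = sum(degree.values()) / len(degree)
print(f"mean degree {mean:.2f}, max degree {max(degree.values())}")
```

With a fixed seed the run is reproducible. The mean degree stays close to 2m, while a handful of early nodes accumulate many times more links than average: the uneven, hub-and-spoke structure that averages tend to hide.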

In addition, more sophisticated methods are required to model and predict how a network evolves over time, such as how a transportation system grows. These dynamics further complicate monitoring for sudden changes and bursts, as well as discovering emergent properties.

For example, as density increases in a social group, you might see accelerated communication that then leads to a tipping point of coordination and a subsequent coalition or, alternatively, subgroup formation and polarization.

This data-begets-data cycle may sound intimidating, but the emergent behavior and patterns of these connections reveal more about a system's dynamics than you can learn by studying the individual elements themselves.

For example, you could study the movements of a single starling, but until you understood how these birds interact with each other in a larger group, you wouldn't understand the dynamics of a flock of starlings in flight.

In business you might be able to make an accurate restaurant recommendation for an individual, but it’s a significant challenge to estimate the best group activity for seven friends with different dietary preferences and relationship statuses. Ironically, it’s this vigorous connectedness that uncovers the hidden value within your data.

Economist Jeffrey Goldstein defined emergence as "the arising of novel and coherent structures, patterns and properties during the process of self-organization in complex systems." The characteristics commonly associated with emergence include:

- Radical novelty (features not previously observed in systems);

- Coherence or correlation (meaning integrated wholes that maintain themselves over some period of time);

- A global or macro “level” (i.e., there is some property of “wholeness”);

- Being the product of a dynamical process (it evolves); and

- An ostensive nature (it can be perceived). (Source: Wikipedia)

Conclusion

For today's connected data, it's a mistake to scrutinize data elements and aggregations for insights using only simple statistical tools, because they make data look uniform and hide evolving dynamics. Relationships between data are the linchpin of understanding real-world behaviors within, and of, networks and systems.

In the coming weeks, we’ll explore the power of graph algorithms and the way that they reveal the dynamics of your ever-changing connected data, empowering you to understand your data in new ways and uncover patterns that are undiscoverable using traditional analytics approaches.

Learn about the power of graph algorithms in the O'Reilly book Graph Algorithms: Practical Examples in Apache Spark and Neo4j, by the authors of this article.