Impossible Is Nothing: The History (& Future) of Graph Data [GraphConnect Recap]

Chief Scientist, Neo4j

10 min read

Editor’s Note: Last May at GraphConnect Europe, Jim Webber – Chief Scientist at Neo Technology – gave this inspiring (and nerdy) keynote talk on the history and future of graph data.

Register for GraphConnect San Francisco to hear more speakers like Jim present on the emerging world of graph database technologies.

There’s a lot of ambition in the graph community.

As graph enthusiasts, not only are we currently building some rather ambitious systems, but our ambition seems to be growing, and as the tech grows, our ambition grows, and as our ambition grows, the tech grows all the more.

I want to take a look at where we’ve been in terms of building graph-based systems and where we’re going in the future, with an eye on Neo4j.

I’ve broken this talk down into three distinctly nerdy phases, so, a Star Wars theme. I’ve split these helpfully into episodes four, five and six:

A New Hope:

We’re going to talk about how graph databases have revolutionized data in the relatively short time that graph databases have been around. I think they’ve had a profound impact in the way that we deal with data in systems.

The Empire Strikes Back:

I want to look slightly forward and say given the great ways it’s given us to revolutionize systems, what might we do in the near future to match our ever-rising ambition.

The Return of the Jedi:

Finally, what might we do around graph databases in the very long term?

Let’s dive in.

Episode IV: The History of Graph Databases

Back in the day, in the Star Wars era, the world was a bit different from what we know now.

The world was square, like we all had the same stuff going on. The data about us was quite square.

We had, for example, the same payroll data; it’s kind of tabular or square. We had the same social welfare provision data. (At least we had social welfare provision back then but we may or may not have tomorrow. Let’s see, eh?)

The point being that the world was square and the predominant metaphor that we used to capture data was tables.

Ultimately, the kind of databases that we saw emerging were tabular databases based on the research of E.F. Codd. So back in the ’70s is the genesis of the modern database as we know it – the genesis of the modern data movement actually.

Then in the ’80s that research became mainstream so we had the emergence of the world’s dominant relational database organizations, companies like Oracle really started to push relational technology. At that point, we still had tabular data, so it kind of worked.

Later on, you find that as our ambition grew, so did the complexity of the models that we tried to build in the relational database.

The problem is you’ve got schemas like this one below and some of you can see the intent of this schema. (Don’t worry. It isn’t yours, it just looks like it.) It’s actually the Drupal schema.

You can kind of see through the schema to the underlying intent. Without a word of jest, that this model is super impressive, but it’s got a lot of accidental complexity.

These kinds of complex schemas are difficult to read, they’re difficult to transfer knowledge with and they also eventually imply pain. Because no matter how good your relational database design, at some point you have to join tables. The first join you do, you might get away with it. And the second join you do, you might get away with again. Then, all bets are off.

Eventually the systems become awful and shitty, because we’ve pushed hard at the boundaries of that envelope. It’s difficult to fit semi-structured, irregular modern data into relational tables, no matter how hard you try.

You know this because in your database code shit turns up if NULL checks. I know that without seeing your code because it’s just what happens.

Why? Accidental complexity.

Eventually this complexity snowballs out of control and you get a massive JOIN bomb waiting to go off.

Graph Databases Today

Fast forward to the 1990s. Tim Berners-Lee considered what it meant to have massively scaled data across the world, like the World Wide Web and what it meant for that data to be interlinked. He effectively thought about what it meant to have the first massive set of graph data.

Enter the 21st Century, and we’ve got new vocabulary. Not only do we have the web, but we start to get other interesting data technologies from the leading web companies.

We learned words like ‘web scale’, ‘Google scale’ and so on and this is great because we’re starting to deal with larger volumes of data.

We started to observe that the model of the traditional relational database isn’t terribly helpful for the kind of systems we were building. In fact, the problem that comes when you join through those models is actually an inhibitor to getting business done.

So industry leaders actually started to build new kinds of databases.

They said, ‘Actually, there isn’t a one-size-fits-all database. There are data storage, query and processing needs that are potentially unique to your situation. You should be able to pick the appropriate tech to deal with them.’ Thus, NoSQL.

NoSQL Charges to the Rescue

The majority of the NoSQL databases are aggregate store databases. That is, they store values, documents or columns by key, and these systems work really well where the storage and retrieval patterns are symmetric. Store a customer, retrieve a customer; store accounts, retrieve accounts.

But when you want to slice and dice that data, these kind of systems end up asking you to compute an answer so you export some records out of the database, you run them through some processing infrastructure and you compute your way to an answer. That’s why aggregate stores talk so much about MapReduce.

Instead, you could just use something like a graph database, where your relationships are first-class entities.

But maybe you’ve got a bad feeling about this.

Most people still want to use aggregate stores because it’s what they know. But what if you wanted to sell things to your social network? Sure, you could probably have the gumption to figure out a kind of FlockDB-style shallow graph over your aggregate store and try and keep it somewhat in check.

But now what if you wanted to do something more interesting, like make social recommendations? What then if we wanted to go and do more sophisticated recommendations? What if I wanted to recommend what my friends buy, or what my friends-of-friends buy?

Doing this is an aggregate database is a tough job because you end up processing and computing across so many records. Whereas in Neo4j, you find the record that represents you, traverse out to your friends and traverse out to what they bought. Done deal in sub-milliseconds.

Where Our Past Ambition Has Led Us Today

Today, graph database technology meets or exceeds your ambitions, but that’s just like a red rag to a bull. You’ll just end up saying, ‘I’m going to be more ambitious.’

This brings us to a really interesting juncture.

On the left-hand side of this fork, we have most of NoSQL, where the model is to denormalize your data into keys, values, documents or columns. While that data model is simple, you have to have a compute-centric, MapReduce-friendly database in order to extract any insights from it.

On the right-hand side, you have graph databases. These are the most expressive data model I’ve ever come across, allowing you to traverse the graph to find your information or goals.

Episode V: The Near Future of Graph Data

Act two: Remember Episode V? It’s the good one, actually. You’ve got a bunch of rebels who’ve inflicted a little bit of embarrassment on a shoddily architected IT construction project. Apparently a port was left open. I don’t know.

So what happened is the rebels, they’ve still got basically no resources at their disposal but they’re a little bit more ambitious.

We might say their obvious goals are to cobble something together that’s:

- Eventually consistent over write consistent

- BASE over ACID

- Partitioned graph over single image

Seem’s like the obvious choice, right? Wait just a minute.

We know that from Fischer, Lynch and Paterson that if you have computer network you can pick two of these three characteristics, but never three of these three.

- You can have safety so your results are all valid and identical.

- You can have termination, so your algorithm will finish.

- You can have fault-tolerance. That is, the system can survive failure at any point.

(For those of you who are CAP theorists, this predates CAP.)

You’re allowed to pick two out of three of these, which is annoying because we all want three out of the three. There is a tradeoff between availability and reliability. Do you pick availability? Or, do you pick reliability? Because you can only choose one.

The right answer depends on your needs. There’s no one size fits all approach.

For example, most NoSQL databases have eventual consistency. So if you write a value into the database, at some point later in time all the replicas that hold that value will eventually agree.

There’s a window of opportunity where the replicas may be inconsistent but on the whole it’s towards consistency. The tradeoff we’re making there is that we can get potentially great write performance but we don’t get a reliable answer in terms of classical fault tolerance.

I think the distributed database community has done a lot of good stuff there. They’ve worked on things like clocks and timestamps; they’ve reasoned about causality; they’ve got CRDTs and mergeable data types; and there’s a lot of good stuff happening.

Ultimately, we all agree that a single value is replicated eventually to all of the replicas. In computer science terms, that’s a relatively known – and solved – problem.

Do we want BASE over ACID? No, but fortunately the transactions community has done a lot of work in the last few years that you can have highly concurrent, high-performance ACID transactions without sacrificing performance.

In graph databases though, the problem is a bit more difficult. For graphs, reliability is more important than availability.

You might say, ‘But I’ve heard about this other thing that just does…’ No. ‘But…’ No.

You can’t naïvely try to impose a graph model on a non-native database, because it doesn’t have the mechanical affordances to reliably store your data. That’s the sad ending to Episode V.

Episode VI: The Far Horizon of Graph Databases

Return of the Jedi is a bit more upbeat.

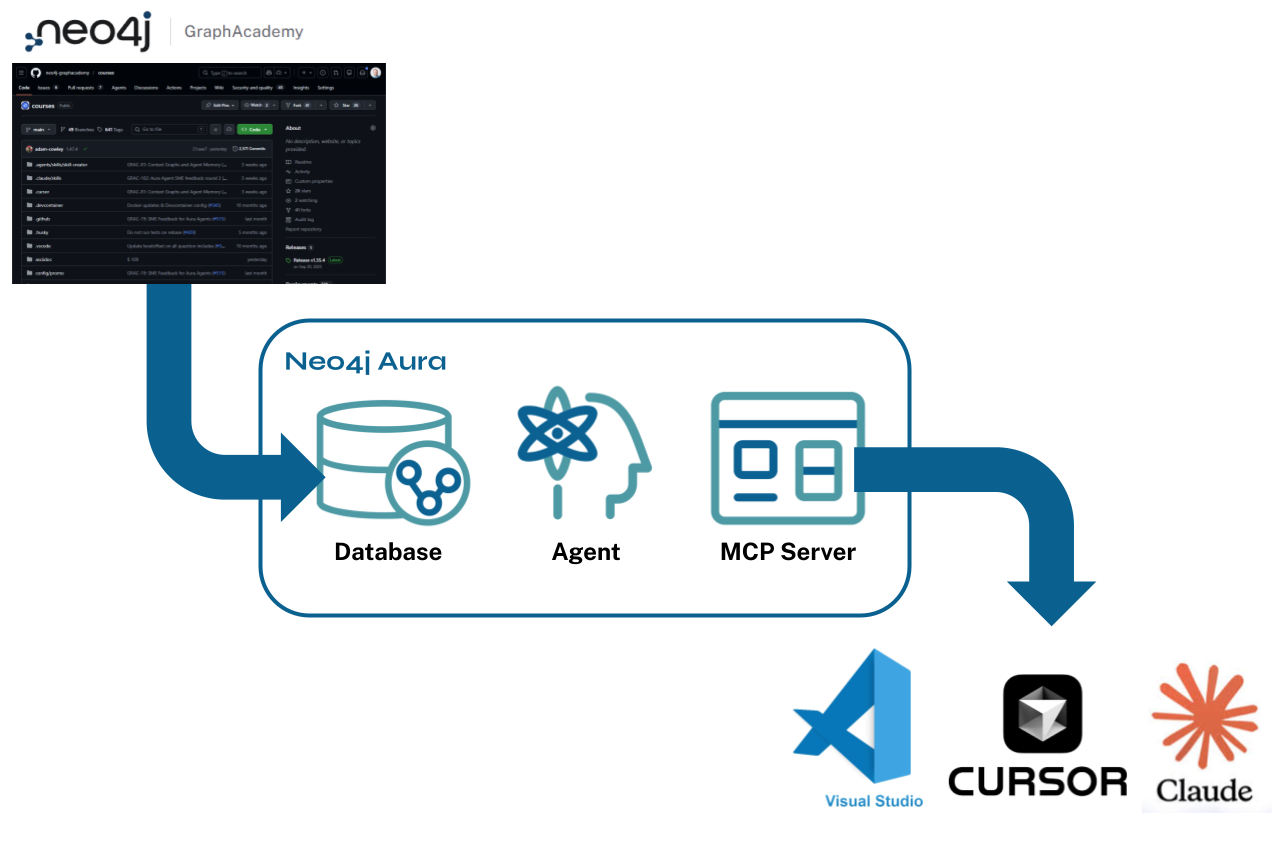

We’ve been doing a bunch of engineering work at Neo Technology on Neo4j.

We are working on larger databases. You’ve told us, ‘My ambition today is billions, but I want trillions tomorrow’. We can do that.

We’re going to work on even faster Cypher. The Cypher team has done some amazing things in terms of query planning and optimization. They’re now building a high-performance runtime for Cypher.

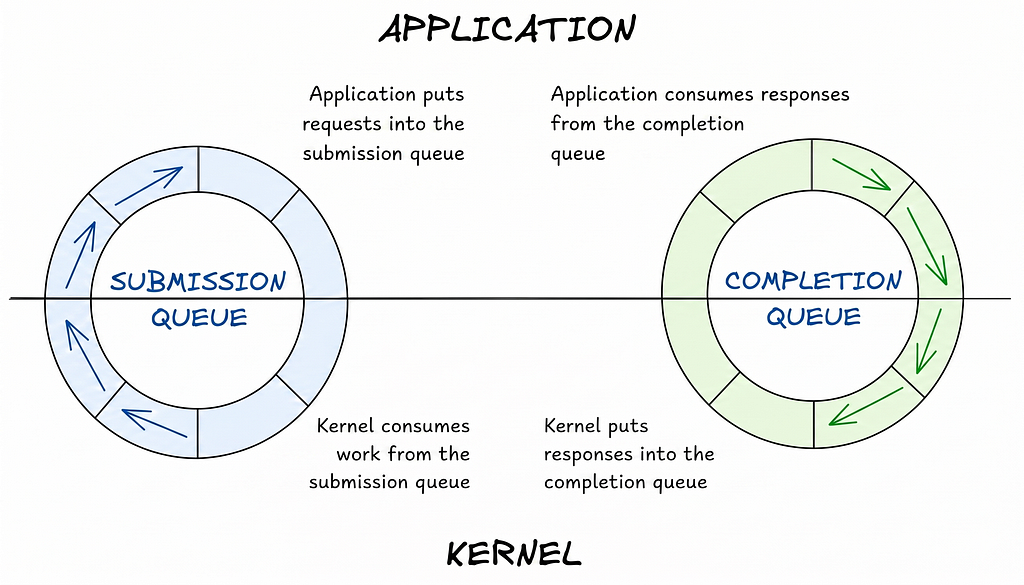

We’ve got better internals. If you’ve gone into production with Neo4j, you’ve seen that you get a high-performance lock manager. You also see our new cache built by our kernel team has provided a storming increase in performance. And in the near future, our engineers working on the binary remoting protocols are going to give you some absolutely sweet kick-ass drivers in your language.

You’re going to see bigger data, faster queries, more queries, better predictability and native high-throughput, low-latency drivers. And that’s just from the engineering side.

How? Because it’s graph-native all the way down.

We optimize for graph workloads because we are not depending on some other database to do the heavy lifting. This is all about going from the socket down to the disks and back in a way that is sympathetic to graphs. That’s what gives Neo4j its performance and reliability edge, and we like it that way.

Then we’ve got the sciency bit.

Because your ambition is not going to stay static, we’re going to give you the remoting stuff and you’re going to suck up that capacity because you’re going to get more ambitious so we need to be one step ahead of you so that your ambition continues to grow, and we continue to serve you.

Next we’re going to go with peer-to-peer clusters. We’re going to go for massively concurrent ACID transactions across those machines. We are not going to sacrifice the reliability of your database just because some people thought two-phase commit was slow.

We can have caches that are graph refined and understand locality. We’re going to have domain-specific partitioning. We’re going to have all of that and we’re going to do it reliably because in sacrificing reliability, all we get is corruption.

What we’re not going to try to do is beat CAP theorem. It’s a theorem, it’s not a conjecture anymore.

Actually, we think it’s better to design for reliability and accept that availability may be a casualty because availability doesn’t have to be a binary function. After all, even when an escalator has no power, it still functions as steps so you get graceful degradation of service.

This stuff is not easy. This a long-term trajectory. You can trust that as your ambition rises, our level of technology rises ahead of you, always giving you the headroom you need to build your ambitious systems. We do that because we built Neo4j as an ambitious system ourselves.

In fact, we don’t think this stuff is impossible because in the graph space today, you guys are already building systems that would have been thought impossible or impractical just a few years ago.

We want you to continue to raise your level of ambition so that you can build things that you think are impossible or impractical today. You’ve got to build those things tomorrow because we think impossible is not a fact, it’s an opinion.

Impossible is not a declaration, it’s a dare. Impossible is potential. Impossible is temporary. Impossible is nothing with graphs.

Inspired by Jim’s talk? Click below to register for GraphConnect San Francisco on October 21, 2015 at Pier 27 to learn more about the emerging world of graph databases — from enterprise customer stories to hands-on training and workshops.