Neo4j-Databricks Connector Delivers Deeper Insights, Faster GenAI Development

5 min read

In a hyper-connected, data-rich world, enterprises need to understand complex relationships within large, diverse datasets. Customer interactions, for example, involve tracking behavior, preferences, and patterns across online platforms, physical stores, and social media. Organizations must understand all these relationships to optimize strategies and improve the customer experience.

Today marks the introduction of a validated partner solution between Databricks and Neo4j. This connector will allow our joint customers to seamlessly combine structured and unstructured data, discover hidden patterns across billions of data connections, enhance contextual understanding within their data, and rapidly deliver enterprise-grade GenAI applications.

At Neo4j, we help organizations efficiently analyze the relationships within highly connected business data, even as data volumes grow. Neo4j use cases include fraud detection, supply chain and logistics, energy solutions, customer 360, and more. Developers using Neo4j with Databricks can now:

- Enhance analytics by ingesting data from Databricks to Neo4j. Create a seamless workflow to continuously process, analyze, and update data across both platforms, enabling real-time insights and decision-making.

- Uncover hidden patterns to generate deeper insights. Use Neo4j’s built-in graph algorithms and Cypher query language to uncover hidden patterns and deeper insights in data. Within Databricks notebooks, Neo4j Bloom and the Neo4j Visualization Library (NVL) can be used to explore data visually.

- Combine Neo4j knowledge graphs with graph retrieval-augmented generation (GraphRAG). Neo4j knowledge graphs improve RAG, resolving the critical issues of accuracy, explainability, and transparency – and unlocking GenAI’s full potential.

Here’s a closer look at how using Neo4j and Databricks together can deliver groundbreaking analytics and GenAI outcomes.

Enhancing Analytics by Ingesting Data From Databricks to Neo4j

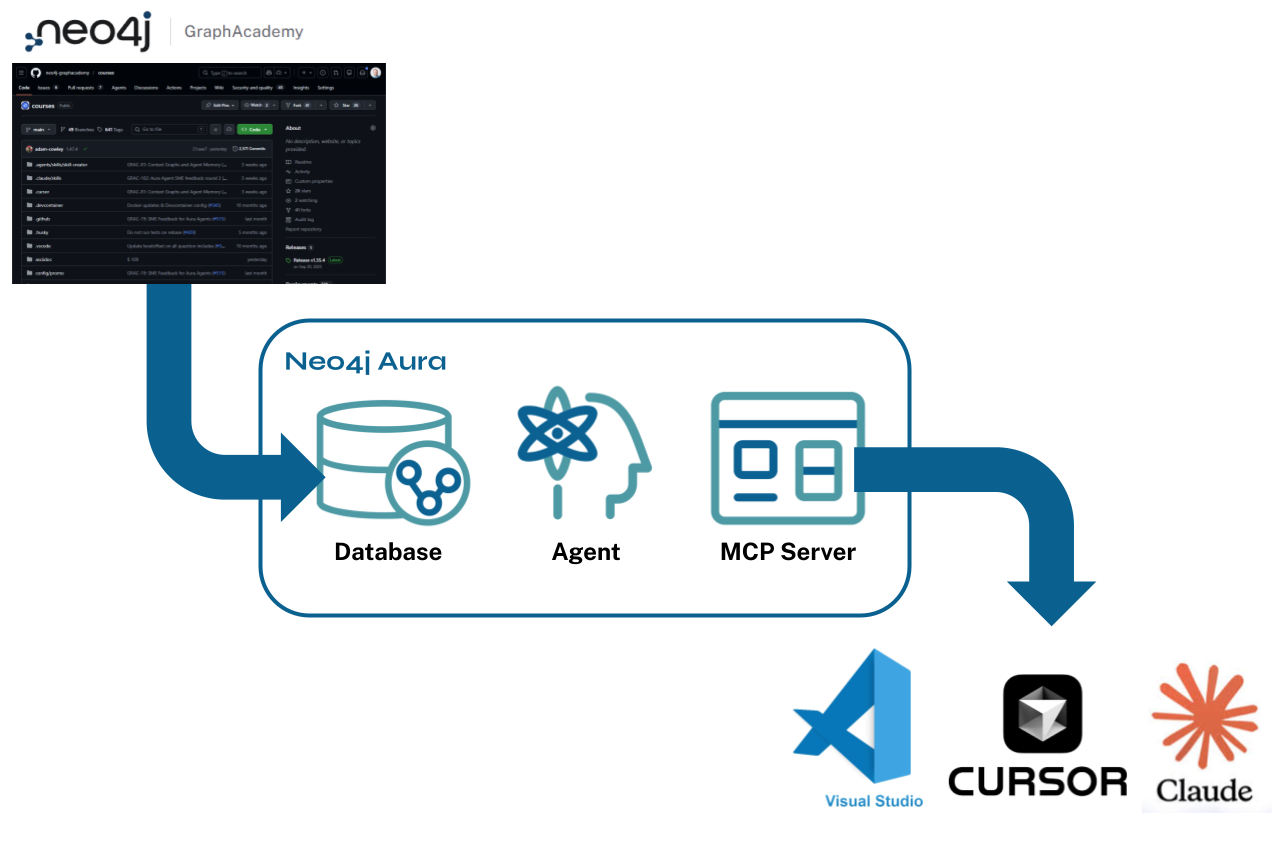

The Neo4j Connector for Databricks seamlessly transfers data to Neo4j for analysis in a graph structure. The Neo4j Graph Database excels at handling interconnected data, making it an ideal platform for analyzing complex relationships and patterns. The connector can be used to read data from and write data to Delta tables from a Databricks notebook.

A code snippet that shows the options provided by the Connector with Databricks to ingest data into Neo4j to create nodes, labels, properties, and relationships.

Delta Lake and Neo4j are both ACID-compliant systems, which means they ensure data consistency, reliability, and integrity throughout the data pipeline.

For example, Delta tables are part of Delta Lake, an open-source storage layer that brings ACID transactions to Apache Spark and big data workloads. Neo4j and Delta Lake efficiently handle complex queries and real-time insights, enabling scalability by handling petabytes of data, which ensures data consistency and reliability through ACID transactions and optimizes data reads and writes with features like indexing and caching.

We built our Databricks connector with data availability and security in mind. Neo4j Aura has a 99.95% uptime SLA for real-time applications and complies with industry-standard regulations such as ISO 27001, GDPR, CCPA, SOC2, and HIPAA. To ensure that only approved data is analyzed, we are supported by Databricks Unity Catalog, as our connector can access the Databricks data layer through Databricks’ access control mechanisms. We also integrate with SSO providers like Microsoft Azure AD and Okta, offer encryption at rest through customer-managed keys (CMK), and employ role-based access control (RBAC) to safeguard access.

Uncovering Hidden Patterns in Data to Generate Deeper Insights

Neo4j Graph Database offers a developer-friendly schema that enables easy prototyping and evolution of data models from development to production. The property graph model allows storing properties directly within the graph, providing a powerful yet simple approach for architects to design and build graph models. This makes it easy to conceptualize and transition from whiteboard designs to actual implementations. A graph built on a Neo4j graph database combines transactional data, organizational data, and vector embeddings in a single database, simplifying the overall application design.

A native graph database allows users to quickly traverse through connections in their data, without the overhead of performing joins and with index lookups for each move across a relationship or other join strategies. We call this capability index-free adjacency – each node directly references its adjacent (neighboring) nodes, so accessing relationships and related data involves a simple memory pointer lookup. This makes native graph processing time proportional to the amount of data processed – it doesn’t increase exponentially with the number of relationships traversed and hops navigated.

Developers can also use pre-built graph algorithms and the Cypher query language to find patterns in data. Centrality, pathfinding, similarity, and many other Neo4j algorithms are useful for recommendation engines, supply chain optimization, identity and access management, and network monitoring.

Key use cases for Neo4j Graph Algorithms

Unlock the Potential of GenAI With Knowledge Graphs and Retrieval-Augmented Generation (RAG)

It’s hard to overstate the importance of knowledge graphs in GenAI development. Gartner considers knowledge graphs essential to the development of GenAI, and has urged data leaders to “leverage the power of LLMs with the robustness of knowledge graphs to build fault-tolerant AI applications.”

As the widespread use of GenAI has driven demand for better responses, knowledge graphs have excelled at improving LLM accuracy, relevance, and transparency. Knowledge graphs ground LLMs by representing relationships within data – which contextualizes responses – and by integrating both structured and unstructured data.

Through a technique called GraphRAG, LLMs retrieve relevant information from a knowledge graph using vector and semantic search and then augment their responses with the contextual data in the knowledge graph. Microsoft researchers have found that LLMs using GraphRAG not only deliver more comprehensive and explainable answers but also a greater diversity of viewpoints.

Developers using Databricks can accelerate GenAI app development by seamlessly incorporating GraphRAG capabilities into their projects. A variety of integrations make it easy to access popular AI frameworks and tools like LangChain and LlamaIndex.

GraphRAG for GenAI applications

Adding Critical Analytics and GenAI Capabilities to the Databricks Experience

Extracting insights from densely interconnected datasets and accelerating GenAI development are critical priorities for the modern enterprise. Our new integration with Databricks is specifically designed to help organizations meet these challenges – and to stay ahead of the GenAI and data analytics curve for years to come.

To get started with Neo4j on Databricks, read the quickstart documentation and get your projects started on Aura.