Neo4j Graph Data Science Library 1.6: Fastest Path to Production

Senior Director of Product Management, Graph Data Science


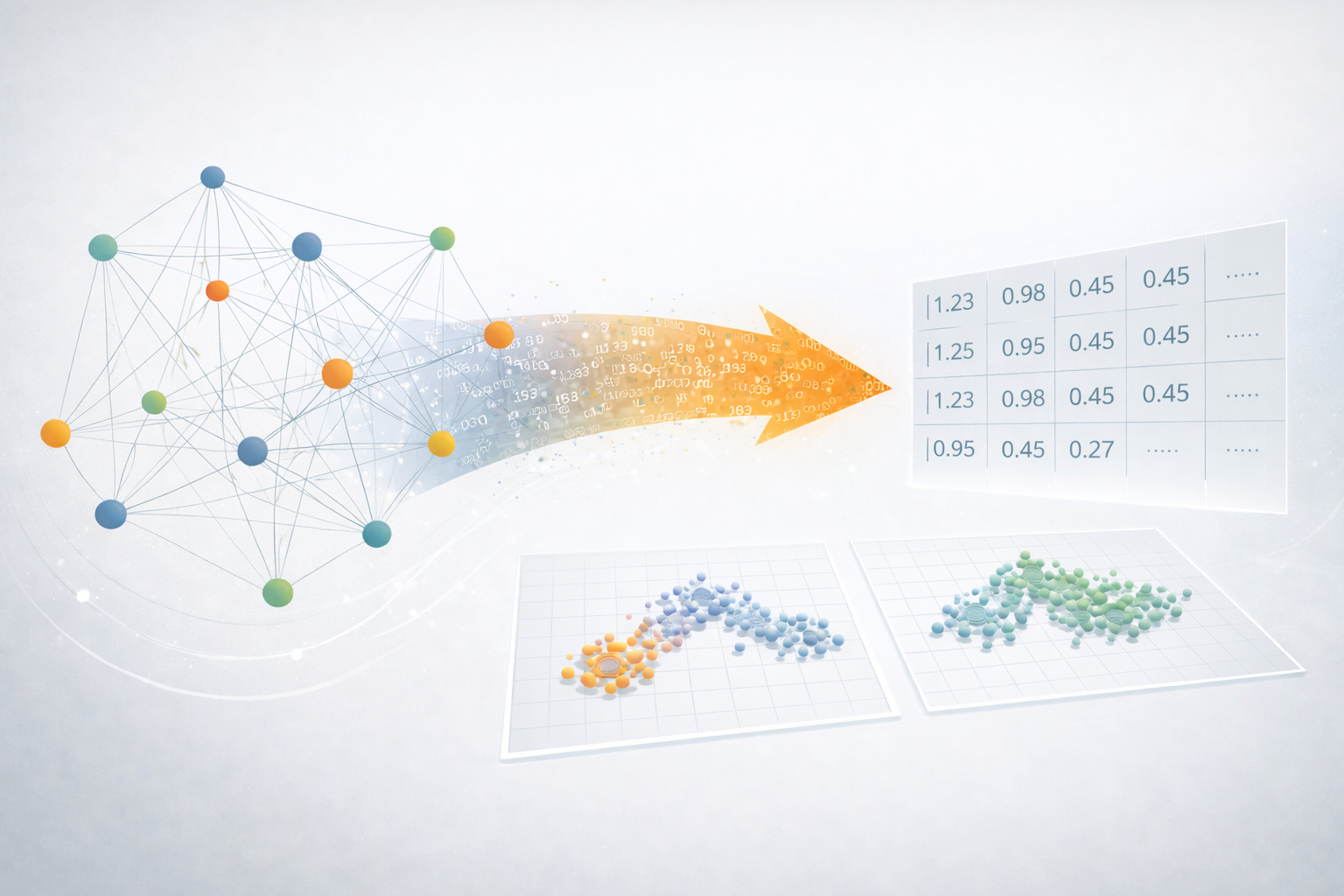

We’ve just released version 1.6 of the Graph Data Science (GDS) Library, and we focused this release on reducing the friction of getting graph data science results into production. We’ve improved our machine learning procedures, enhanced our graph embeddings, and added new features to better support production ML, like scaling functions and the ability to filter your analytics graph.

Before diving into the details, though, we also wanted to give something back to the community in this release:

Community Edition GDS users can now train and store up to 3 ML models in Neo4j!

As cool as new features are, being able to train and store more models in Community Edition should make the library more useful to those of you who are just getting started.

We introduced model training in GDS 1.4 with GraphSAGE, and expanded what you could do in GDS 1.5 by adding Node Classification and Link Prediction.

We’d like to thank our community members for testing our new features, giving us feedback, and helping us build a better product. Being able to train more models makes it easier to use our supervised ML capabilities: train and compare different models and parameter combinations, identify valuable features, and find value quickly with GDS.

The other big features in this release are designed to make your data science experience in Neo4j simpler:

Scaling and Normalizing Properties

We added a new ScaleProperties procedure to transform and scale node properties, so you don’t have to write back to the database and run expensive Cypher queries to normalize data before training your models. We now support min-max, max, mean, log, standard score, L1 norm, and L2 norm scalers.
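To make the scaler names concrete, here is a minimal plain-Python sketch of each transformation applied to one property column, using the common textbook definitions. It is not the GDS API: the function names and the `SCALERS` keys are hypothetical, and GDS’s exact formulas and edge-case handling (a column where every value is identical, population versus sample standard deviation) may differ from the assumptions labeled in the comments.

```python
import math
from typing import Callable, Dict, List

# Hypothetical helpers illustrating each scaler on a single property column.
# Assumption: a zero denominator (constant or all-zero column) yields 0.0.

def _safe_div(num: float, den: float) -> float:
    return 0.0 if den == 0 else num / den

def min_max(xs: List[float]) -> List[float]:
    lo, hi = min(xs), max(xs)
    return [_safe_div(x - lo, hi - lo) for x in xs]  # maps into [0, 1]

def max_scale(xs: List[float]) -> List[float]:
    m = max(abs(x) for x in xs)
    return [_safe_div(x, m) for x in xs]  # maps into [-1, 1]

def mean_scale(xs: List[float]) -> List[float]:
    avg = sum(xs) / len(xs)
    rng = max(xs) - min(xs)
    return [_safe_div(x - avg, rng) for x in xs]  # centered on 0

def log_scale(xs: List[float]) -> List[float]:
    return [math.log(x) for x in xs]  # natural log; requires every x > 0

def std_score(xs: List[float]) -> List[float]:
    avg = sum(xs) / len(xs)
    # Assumption: population standard deviation (divide by n, not n - 1).
    std = math.sqrt(sum((x - avg) ** 2 for x in xs) / len(xs))
    return [_safe_div(x - avg, std) for x in xs]  # mean 0, standard deviation 1

def l1_norm(xs: List[float]) -> List[float]:
    total = sum(abs(x) for x in xs)
    return [_safe_div(x, total) for x in xs]  # absolute values sum to 1

def l2_norm(xs: List[float]) -> List[float]:
    length = math.sqrt(sum(x * x for x in xs))
    return [_safe_div(x, length) for x in xs]  # squares sum to 1

SCALERS: Dict[str, Callable[[List[float]], List[float]]] = {
    "minmax": min_max, "max": max_scale, "mean": mean_scale, "log": log_scale,
    "stdscore": std_score, "l1norm": l1_norm, "l2norm": l2_norm,
}

ages = [18.0, 30.0, 42.0, 66.0]
for name, scaler in SCALERS.items():
    print(name, [round(v, 3) for v in scaler(ages)])
```

Because each scaler works column-wise, swapping one for another is a lookup; doing this inside GDS means the scaled values stay in the in-memory graph instead of round-tripping through the database.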

Graph Filtering / Subgraph Projections

This has been one of the most requested features since our first release. It lets you create subgraphs from your in-memory graph by filtering on node or relationship properties. For example, you can run a community detection algorithm, split out a new in-memory graph for each community, and then run embeddings on each one individually. Or you could calculate degree centrality and create a new graph that filters out the highest-degree nodes to improve performance.
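The filtering idea can be sketched in plain Python over a toy in-memory graph: keep the nodes that satisfy a predicate, then keep only the relationships whose endpoints both survive and that pass their own predicate. The `Graph` class, `filter_subgraph`, `split_by`, and `degree` are hypothetical names for this illustration, not GDS procedures; in GDS the operation runs on the projected graph through a procedure call, so check the documentation for its actual syntax.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Tuple

Props = Dict[str, Any]
Pred = Callable[[Props], bool]

@dataclass
class Graph:
    nodes: Dict[int, Props]  # node id -> node properties
    rels: List[Tuple[int, int, Props]] = field(default_factory=list)  # (source, target, properties)

def keep_all(_: Props) -> bool:
    return True

def filter_subgraph(g: Graph, node_pred: Pred = keep_all, rel_pred: Pred = keep_all) -> Graph:
    """Return a new graph; the input graph is left untouched."""
    nodes = {n: p for n, p in g.nodes.items() if node_pred(p)}
    rels = [(s, t, p) for s, t, p in g.rels if s in nodes and t in nodes and rel_pred(p)]
    return Graph(nodes, rels)

def split_by(g: Graph, key: str) -> Dict[Any, Graph]:
    """One subgraph per distinct value of a node property, e.g. a community id."""
    values = {p[key] for p in g.nodes.values()}
    return {v: filter_subgraph(g, lambda p, v=v: p[key] == v) for v in values}

def degree(g: Graph) -> Dict[int, int]:
    """Undirected degree: each relationship counts once for each endpoint."""
    deg = {n: 0 for n in g.nodes}
    for s, t, _ in g.rels:
        deg[s] += 1
        deg[t] += 1
    return deg

g = Graph(
    nodes={0: {"community": 1}, 1: {"community": 1}, 2: {"community": 2}, 3: {"community": 2}},
    rels=[(0, 1, {"weight": 1.0}), (1, 2, {"weight": 0.5}), (2, 3, {"weight": 2.0})],
)

# Example 1: one in-memory graph per community, ready for per-community embeddings.
per_community = split_by(g, "community")

# Example 2: store degree as a node property, then drop the nodes at the maximum degree.
deg = degree(g)
with_degree = Graph({n: {**p, "degree": deg[n]} for n, p in g.nodes.items()}, g.rels)
top = max(deg.values())
low_degree = filter_subgraph(with_degree, node_pred=lambda p: p["degree"] < top)
```

Note that the relationship between nodes 1 and 2 disappears from both community subgraphs, because its endpoints fall in different communities.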

Improved Graph Embeddings

Node2Vec graduated to the beta tier in this release. It is substantially faster (up to 80% faster in our tests!) and now supports weighted graphs, seeding (for reproducibility), and mutate mode, so you can update your in-memory graph with your Node2Vec results and keep working. We’ve also added seeding to FastRP and improved the accuracy of GraphSAGE.
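For readers curious about what weighting and seeding affect, here is a minimal sketch of the second-order biased random walk that Node2Vec is built on, with a return parameter `p` and an in-out parameter `q`. Edge weights scale the transition probabilities, and a seeded random generator makes the same seed reproduce the same walks. It is not GDS’s implementation: the function names are hypothetical, and it stops at walk generation, leaving out the skip-gram training step that turns walks into embeddings.

```python
import random
from typing import Dict, List, Optional

# Weighted, undirected adjacency: node -> {neighbor: edge weight}.
Adj = Dict[str, Dict[str, float]]

def biased_walk(adj: Adj, start: str, length: int, p: float, q: float,
                rng: random.Random) -> List[str]:
    walk = [start]
    while len(walk) < length:
        cur = walk[-1]
        nbrs = list(adj.get(cur, {}))
        if not nbrs:
            break  # dead end: stop this walk early
        if len(walk) == 1:
            # First step: choose proportionally to edge weight only.
            weights = [adj[cur][x] for x in nbrs]
        else:
            prev = walk[-2]
            weights = []
            for x in nbrs:
                if x == prev:
                    alpha = 1.0 / p  # step back to the previous node
                elif x in adj.get(prev, {}):
                    alpha = 1.0  # stay at distance 1 from the previous node
                else:
                    alpha = 1.0 / q  # move outward, away from the previous node
                weights.append(adj[cur][x] * alpha)
        walk.append(rng.choices(nbrs, weights=weights, k=1)[0])
    return walk

def generate_walks(adj: Adj, walks_per_node: int, length: int, p: float = 1.0,
                   q: float = 1.0, seed: Optional[int] = None) -> List[List[str]]:
    rng = random.Random(seed)  # a fixed seed makes the whole walk corpus reproducible
    return [biased_walk(adj, node, length, p, q, rng)
            for _ in range(walks_per_node) for node in sorted(adj)]

adj: Adj = {
    "a": {"b": 1.0, "c": 2.0},
    "b": {"a": 1.0, "c": 1.0},
    "c": {"a": 2.0, "b": 1.0, "d": 0.5},
    "d": {"c": 0.5},
}
walks = generate_walks(adj, walks_per_node=2, length=5, p=0.5, q=2.0, seed=42)
```

A small `p` makes the walk more likely to backtrack, and a large `q` keeps it close to where it started; with `p = q = 1` it reduces to a plain weighted random walk.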

Better Supervised ML Pipelines

We introduced Node Classification and Link Prediction in GDS 1.5. In this release we’ve added support for saving, publishing, and restoring trained models (Enterprise only), along with estimation functions, stream and write modes, and new metrics for model performance.

Administrative Capabilities

For our Enterprise users, we’ve added the ability for administrators to view, use, and delete graphs and models belonging to any user. This supports MLOps tasks and lets an administrator manage resources on a multi-user system without logging in to other users’ accounts.

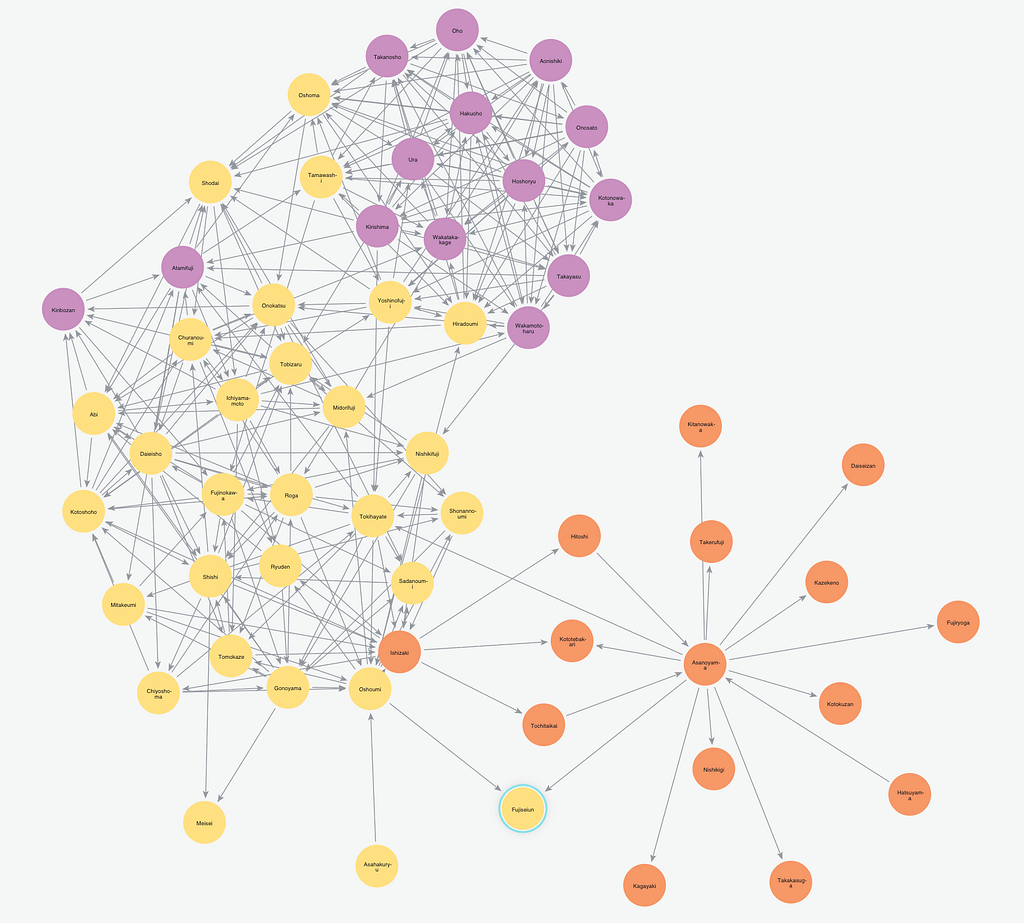

The last thing we’d like to highlight in this release (but definitely not the least!) is two new centrality algorithms for Influence Maximization. These algorithms are intended to identify the nodes that can trigger cascading changes in your graph. For example, who would you target for a viral marketing campaign? Or, in the case of a disease outbreak, who are the most important people to quarantine or treat?

What’s even more exciting, though, is that we didn’t implement these algorithms ourselves: they were contributed by community member @xkitsios. He implemented two different approaches (Greedy and CELF) for identifying the k most influential nodes in a graph, and we were thrilled to include his code in our latest release.
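The difference between the two approaches is easiest to see in code. The sketch below assumes the independent cascade model (each newly activated node gets one chance to activate each out-neighbor with a fixed probability) and estimates spread by Monte Carlo simulation. Greedy re-estimates the marginal gain of every candidate in each round; CELF relies on marginal gains only shrinking as the seed set grows (submodularity), so it keeps possibly stale gains in a priority queue and re-evaluates only the node at the top. All names and defaults here are hypothetical, and this is an illustration of the technique, not the code contributed to GDS.

```python
import heapq
import random
from typing import Dict, List, Sequence, Tuple

Graph = Dict[int, List[int]]  # directed adjacency list: node -> out-neighbors

def all_nodes(g: Graph) -> List[int]:
    return sorted(set(g) | {v for vs in g.values() for v in vs})

def cascade_size(g: Graph, seeds: Sequence[int], prob: float, rng: random.Random) -> int:
    """One independent-cascade simulation; returns how many nodes end up active."""
    active = set(seeds)
    frontier = list(seeds)
    while frontier:
        newly_active = []
        for u in frontier:
            for v in g.get(u, []):
                if v not in active and rng.random() < prob:
                    active.add(v)
                    newly_active.append(v)
        frontier = newly_active
    return len(active)

def spread(g: Graph, seeds: Sequence[int], prob: float, runs: int, seed: int) -> float:
    """Monte Carlo estimate of expected cascade size; the fixed seed keeps it deterministic."""
    rng = random.Random(seed)
    return sum(cascade_size(g, seeds, prob, rng) for _ in range(runs)) / runs

def greedy(g: Graph, k: int, prob: float = 0.1, runs: int = 100, seed: int = 0) -> List[int]:
    chosen: List[int] = []
    for _ in range(min(k, len(all_nodes(g)))):
        candidates = [n for n in all_nodes(g) if n not in chosen]
        # Re-estimate the spread of every remaining candidate in every round.
        chosen.append(max(candidates, key=lambda n: spread(g, chosen + [n], prob, runs, seed)))
    return chosen

def celf(g: Graph, k: int, prob: float = 0.1, runs: int = 100, seed: int = 0) -> Tuple[List[int], float]:
    # Heap entries: (negated marginal gain, node, seed-set size when the gain was computed).
    heap = [(-spread(g, [n], prob, runs, seed), n, 0) for n in all_nodes(g)]
    heapq.heapify(heap)
    chosen: List[int] = []
    total = 0.0
    while len(chosen) < k and heap:
        neg_gain, node, computed_at = heapq.heappop(heap)
        if computed_at == len(chosen):
            # Gain is up to date and still the largest, so select the node.
            chosen.append(node)
            total += -neg_gain
        else:
            # Stale gain: recompute against the current seed set and push it back.
            gain = spread(g, chosen + [node], prob, runs, seed) - total
            heapq.heappush(heap, (-gain, node, len(chosen)))
    return chosen, total

star_plus_pair: Graph = {0: [1, 2, 3, 4], 5: [6]}
print(greedy(star_plus_pair, k=2, prob=1.0, runs=1))
print(celf(star_plus_pair, k=2, prob=1.0, runs=1))
```

With `prob=1.0` every cascade is deterministic, so both functions pick hub node 0 first and then node 5, whose small cascade does not overlap with the star. On larger graphs CELF usually needs far fewer spread estimates than Greedy, which is where its speed advantage comes from.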

There’s a ton more that I haven’t mentioned, so check out our release notes. And, as always, please let us know what you think by posting on our community forum or opening an issue on our GitHub.