It’s fun to watch the graph database category evolve. It has gone from a seemingly niche category a decade ago (despite the valiant efforts of the Semantic Web community) to the modest – but important – pillar of the data world that it is today.

But as the category has grown, we see some technical folks who attempt to “educate” engineers on artificial distinctions as if they were real and factual.

An example of this that I encounter more often nowadays is graph support in non-native graph databases. Obviously graph database technology is tremendously exciting and remains the high-growth area of the database market with much potential to fulfil.

The non-graph vendors are wise to be testing the water in this market. After all, they’ve done very well in their native markets, in some cases creating whole new categories around document or column storage. Providing support for graphs is becoming a necessity to meet the needs of their investors and analysts who dominate our space.

Only problem is: they don’t have a native graph database.

On the Concept of Native Computing

When it comes to data models, native counts. Any database will have a particular workload that dominates. Whether that workload is column storage, documents or graph traversals, the design of the database is overwhelmingly influenced by its major use case.

Consider the definition in Wikipedia’s native computing article:

“Applied to data, native data formats or communication protocols are those supported by a certain computer hardware or software, with maximal consistency and minimal amount of additional components.”

This is a reasonable definition. Reduced to its essentials, it says that a native system, such as a database, performs its function with a minimum of additional components working together. By building a system that way, we hope to make it efficient and dependable.

The antithesis is an inconsistent jumble of components, or layers of components, each supporting a different kind of functionality whose dependability characteristics and efficiency are far from optimal. Yet, many vendors choose this course either through acquisition or feature extension for fear of being left out of the conversation. Such is the state of multi-model databases.

Native isn’t restricted to graph technology of course: it’s a trait of any system carefully designed to do its job well. But what’s native for one system is non-native for another.

For example, if I’m designing a relational database, I’ll choose different algorithms and data structures than if I’m designing a column store. Similarly, if I’m designing a graph database, I’ll make different design choices than if I were designing a document store. In each case, the design I choose is optimized for the intended workload of the system.

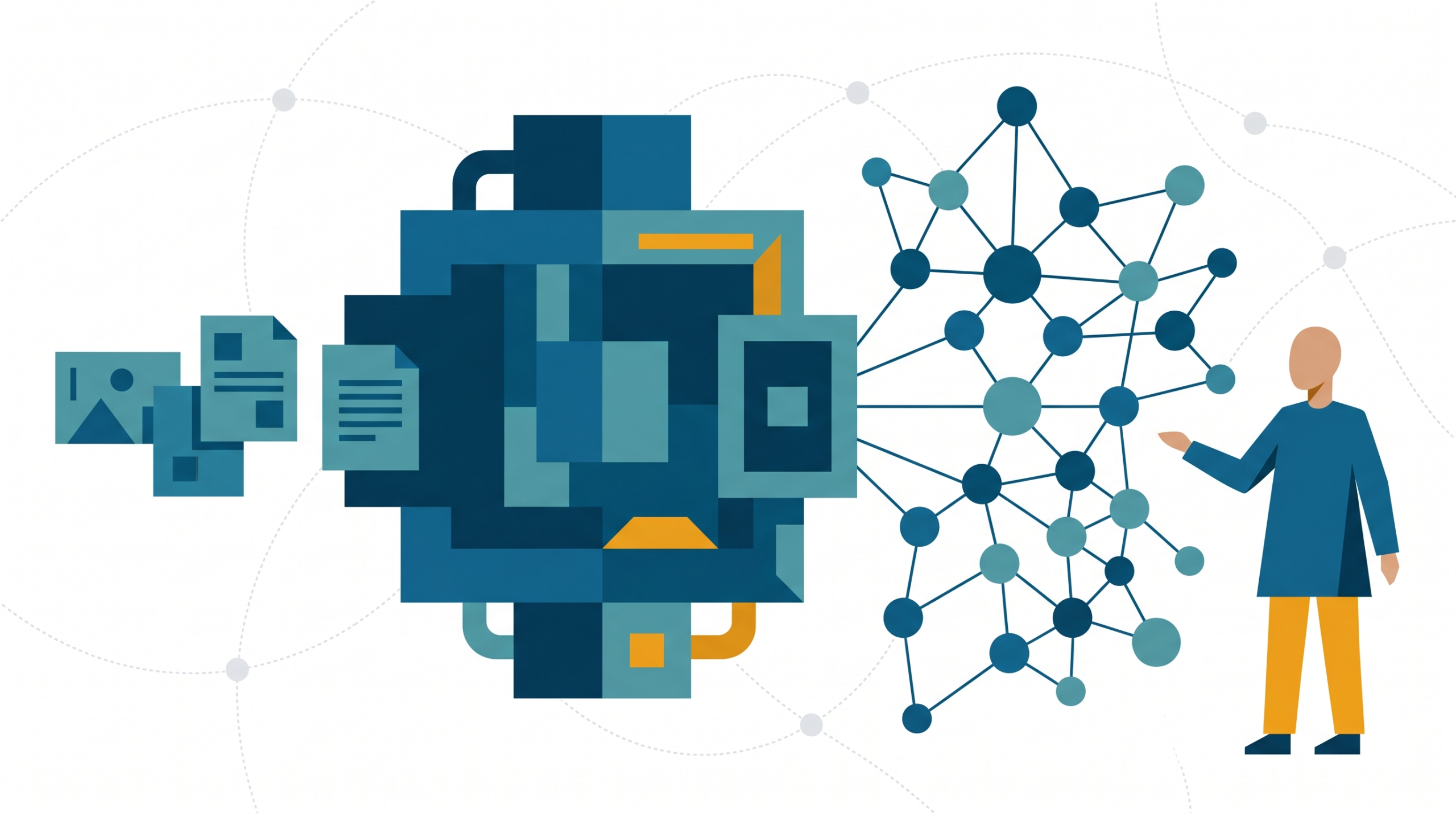

The purpose of a graph database is to store graphs safely and query them efficiently. A system can be said to be a native graph database if it achieves these objectives consistently and without the aid of other components.

Neo4j achieves native graph database status because it’s designed for graph workloads at every level. Its storage, memory management, query engine and language – even our visuals and whiteboard drawings – all support the safe storage and efficient query of graph data, consistently and without the aid of other components. We are connections-first all the time.

The Non-Native Approaches

The non-native approaches we see in the market cannot meet this definition of native. They do not process graphs consistently, and they depend on additional components: usually an entire database management system (DBMS) onto which the non-native graph model is grafted.

We see two of these approaches in the market, both of which violate one or another of our fundamental tenets:

Graph Layer

The graph layer approach takes an existing DBMS (e.g., a column store) and layers a graph API on top with some bindings to the underlying DBMS. Functionally, the graph layer subsumes the underlying DBMS and provides users with a graph API through which they interact with the database.

However, this fails our native test because it requires a multiplicity of components (and a whole DBMS is a big component!). Furthermore, in terms of consistency, we must now switch our mindset back and forth between the graph view of the world and the underlying native storage model.

Worse, the design of the mapping has unpredictable results. Should we denormalize for depth 3 or depth 300? And the underlying DBMS, being designed for one-off lookups of columns, has neither graph locality nor inexpensive traversals built into its engine, so performance suffers. The database wags the graph.
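To make the cost of a layered design concrete, here is a minimal Python sketch under stated assumptions. `KeyValueStore`, `LayeredGraph` and `NativeNode` are hypothetical names invented for illustration, not the API of any real product, and the store is a toy stand-in for an underlying DBMS whose only access path is a lookup by key. The sketch counts how many store lookups a traversal needs when every hop goes back through the underlying store, compared with following direct references between node records.

```python
from dataclasses import dataclass, field


class KeyValueStore:
    """Toy stand-in for an underlying DBMS that only supports lookup by key."""

    def __init__(self):
        self.rows = {}
        self.lookups = 0  # number of reads issued against the store

    def get(self, key):
        self.lookups += 1
        return self.rows.get(key)


class LayeredGraph:
    """Hypothetical graph API layered on the store: one adjacency row per node."""

    def __init__(self, store):
        self.store = store

    def add_edge(self, src, dst):
        # Writes are not counted, so only traversal cost is measured.
        self.store.rows.setdefault(src, []).append(dst)

    def walk(self, start, hops):
        """Follow the first outgoing edge `hops` times: one store lookup per hop."""
        current = start
        for _ in range(hops):
            neighbours = self.store.get(current)
            if not neighbours:
                break
            current = neighbours[0]
        return current


@dataclass
class NativeNode:
    """Node record that holds direct references to its neighbours."""

    name: str
    out: list = field(default_factory=list)


def native_walk(node, hops):
    """Follow the first outgoing reference `hops` times, with no store lookups."""
    for _ in range(hops):
        if not node.out:
            break
        node = node.out[0]
    return node


def build_chain(length):
    """Build the same chain n0 -> n1 -> ... in both representations."""
    store = KeyValueStore()
    layered = LayeredGraph(store)
    nodes = [NativeNode(f"n{i}") for i in range(length + 1)]
    for i in range(length):
        layered.add_edge(f"n{i}", f"n{i + 1}")
        nodes[i].out.append(nodes[i + 1])
    return store, layered, nodes[0]


store, layered, head = build_chain(300)
end_layered = layered.walk("n0", 300)
end_native = native_walk(head, 300)
print(end_layered, end_native.name, store.lookups)  # prints: n300 n300 300
```

Both walks reach the same node, but the layered version issues one store lookup per hop. In a real layered system each of those lookups may be an index probe or a network round trip, which is why the choice between depth 3 and depth 300 matters so much to the mapping design. The toy counts lookups only and makes no claim about actual latency.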

Graph Operator

The graph operator approach is different. Here a small amount of graph vocabulary (an operator) is added to the query language of an existing database such as a document store. Somewhat enriched by that operator, end users now have a limited way of expressing a small number of basic graph workloads, provided certain conventions are upheld in the way the database is used.

This also fails our native test: we have an inconsistent query language that simultaneously complicates a rich native model and debases its impoverished graph add-on. Worse, the inconsistency becomes a user-level problem because the DBMS, though equipped with a graph operator, does not itself understand graphs. Instead, users must self-design and uphold conventions about how to specify links within the native data model so that they can be seen and processed by the operator.

Consequently, users can make perfectly legitimate use of the database in ways that damage the graph operator’s ability to do its job correctly. Removing links and changing the nature of the graph (e.g., its span) is one immediate problem.

Worse still, because the underlying DBMS is blissfully unaware of those links and offers no transactionality, there can be no enforcement of commonplace rules like “no dangling relationships.” Ultimately this is logical data corruption, and a waste of a good graph idea.
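The dangling-relationship problem can be sketched in a few lines of Python. Everything here is an assumption made for illustration: `DocumentStore` is a toy dictionary-backed store, the `friend_ids` field is a hypothetical user-level convention for links, and `traverse` stands in for a bolted-on graph operator. None of it is the API of a real product. The store validates nothing about `friend_ids`, so a perfectly legal delete leaves a reference pointing at nothing.

```python
class DocumentStore:
    """Toy document store: keeps dictionaries by id and knows nothing about links."""

    def __init__(self):
        self.docs = {}

    def insert(self, doc_id, doc):
        self.docs[doc_id] = doc

    def delete(self, doc_id):
        # A legitimate document operation; no link checking happens here.
        self.docs.pop(doc_id, None)


def traverse(store, start_id, link_field="friend_ids"):
    """Hypothetical graph operator: collect ids reachable through `link_field`.

    Returns (reachable_ids, dangling_ids), where dangling ids are referenced
    by some visited document but no longer exist in the store.
    """
    reachable, dangling = [], []
    seen = {start_id}
    frontier = [start_id]
    while frontier:
        doc = store.docs[frontier.pop()]
        for target in doc.get(link_field, []):
            if target in seen:
                continue
            seen.add(target)
            if target in store.docs:
                reachable.append(target)
                frontier.append(target)
            else:
                dangling.append(target)
    return reachable, dangling


store = DocumentStore()
store.insert("alice", {"friend_ids": ["bob"]})
store.insert("bob", {"friend_ids": ["carol"]})
store.insert("carol", {"friend_ids": []})

before = traverse(store, "alice")
store.delete("bob")  # allowed by the store; silently breaks the graph
after = traverse(store, "alice")

print(before)  # (['bob', 'carol'], [])
print(after)   # ([], ['bob'])
```

Deleting `bob` leaves `alice` with a dangling link and also cuts `carol` out of the result, which is the change in span described above. A DBMS that understood relationships could reject or cascade the delete inside a transaction; here that burden falls on every application that writes to the store.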

The Composition of Models

So much investment has been directed at the native models of those other databases. And sizeable market categories have been created. But it is not easy to compose models sympathetically: the DBMS that is amazing for columns is not going to provide low-level traversal performance or transactional guarantees for graph data. The DBMS that rules for documents at scale is going to seize up when forced to implement referential integrity checking or to keep deep graph query results in memory.

Philosophically, those vendors want the graph ‘tick box’ on RFPs so they can state that they address the market. But graphs are a hobby for them, not a profession. As I’ve argued, this manifests in poor and unpredictable performance, complex operators, inexpressive and narrow query languages, and even data corruption – all just enough to claim to be ‘graph’ superficially.

We at Neo4j don’t believe that graphs are a hobby. We believe they are the most important and beneficial approach to date for making sense of the world’s data. And, as such, we believe they deserve a native DBMS to manage them.

Conclusion

The intention of this post was to point out the challenges that non-native graph databases face, and to defend Neo4j against arbitrary vendor marketing and graph hobbyists. The arguments presented are based on well-understood software design and computer science.

If you’d like to know more about the design of native graph data technology, or Neo4j in general, there’s a plethora of material available. Our best resources are below: