Faster, Smarter, Better: Optimizations for Neo4j Graph Algorithms

7 min read

We’re happy to share recent improvements to the Neo4j Graph Algorithms library. These updates include optimizations at several layers, improved configuration and usability, as well as specific feature requests.

A big “thank you” to those who provided suggestions on how to better serve data scientists in production environments, ranging from graph analytics to graph-enhanced machine learning!

In this post, we’ll summarize the operational improvements from 3.5.6.1 through 3.5.9.0. We’ll also dive into a bit more detail on specific algorithm enhancements.

You can install the latest algorithms library directly from the Neo4j Desktop in the ‘Plugins’ section of your project or on the Neo4j Download Center.

Neo4j Graph Algorithms Library Infrastructure Improvements

The infrastructure improvements involved a few visible changes, such as helping users plan for resource requirements and providing more baseline graph information, as well as changes “under the hood” to improve performance and stomp out a few bugs.

Compute Memory Requirements Ahead of Time

The most anticipated change in overall operations is the ability to compute memory requirements ahead of time. The algo.memrec procedure determines memory needs for loading a graph (or Cypher projection) into memory and for running specific algorithms: PageRank, Label Propagation, Connected Components (Union Find) and Louvain.

This feature is super handy for getting the memory configuration right. Because Neo4j graph algorithms run completely in heap memory, the need for running analytics workloads is likely different than your transaction workloads.

Memrec allows you to anticipate the memory that will be used to load your graph or run your algorithms, and should be used as a first step to make sure your configuration is appropriate. More detail on the memrec procedure is detailed in this post on Neo4j Graph Algorithms Release.

Specify Different Concurrencies

We’ve also split out the concurrency configuration for algorithms, allowing users to specify different concurrencies for reading data into memory and for writing results back to the graph after an algorithm completes. There’s more detail in the above mentioned blog post, but this enables users to more finely tune their environment for different uses and goals.

Conduct Faster Reads/Writes

To increase overall performance, we’ve switched how we encode relationship weights in the in-memory graph; from using an array map to a parallel array. This change results in faster read/write speeds for weighted relationships and a lower memory footprint.

Load Graphs More Efficiently

The default graph loader has been updated from heavy to huge graphs with optimizations and bug fixes that have drastically accelerated node loading. The huge graph loader also now supports relationship deduplication when duplicateRelationships is configured.

Use Smarter Information

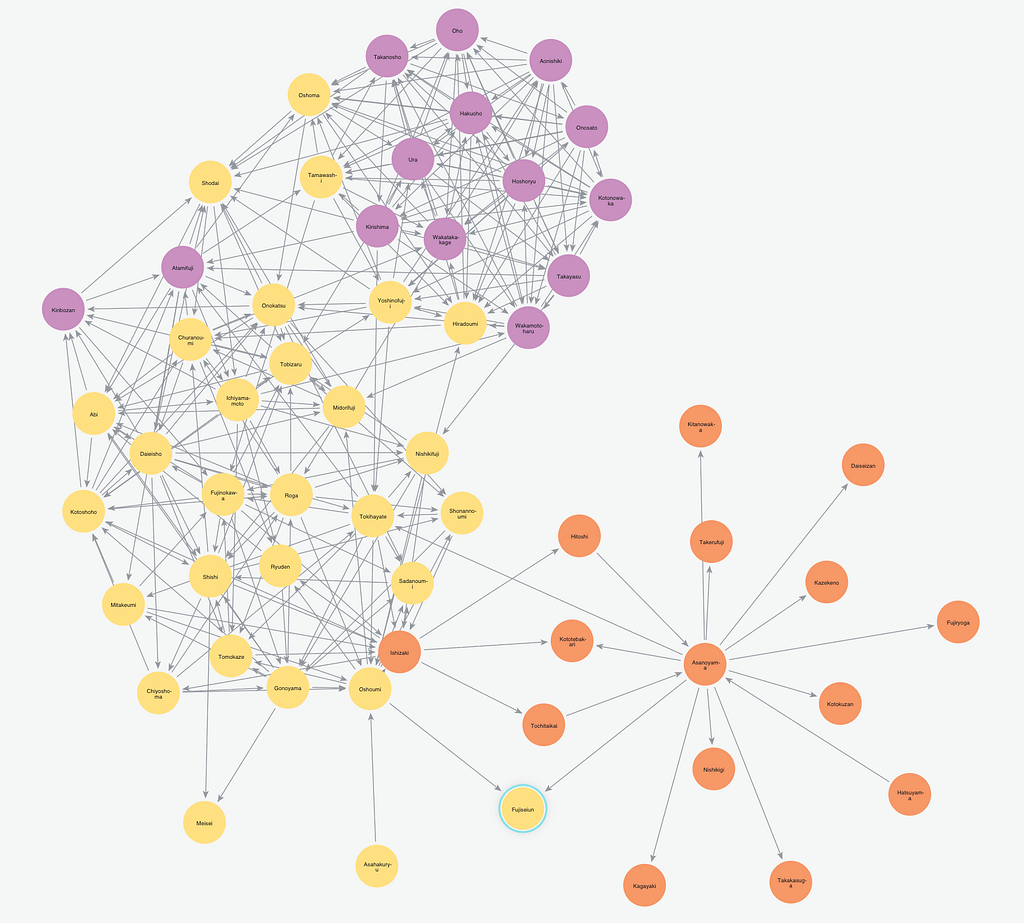

To simplify gathering basic information about our graphs, we added relationship counts and degree distribution statistics to the procedures for graph loading and graph info. We include the min/max and mean number of relationships well as the number of nodes in a range of degree percentiles.

Data scientists can use this type of data to understand relationship densities and distributions, which can impact algorithm choices and results. It’s also a quick way to check if the graph loaded as you expected and makes debugging simpler and more straightforward.

We have also added a new procedure, algo.graph.list(), that returns a list of the loaded graphs with their basic information. This is especially helpful when you’ve loaded many named graphs and can’t remember the names or even how many you have. (We’ve all been there!)

With the named graph list, house cleaning becomes much simpler because you can remove your extra graphs without having to restart the database.

Finally, there are also numerous other improvements from better error handling and bug fixes, to additional information that enhance usability. For example, algorithms will not execute when the provided node labels or relationship types don’t exist, and instead return an instructive error.

Neo4j Graph Algorithms Library Algorithm Enhancements

In regards to the algorithms themselves, we’ve made some general enhancements to accelerate results, as well as enterprise-grade optimizations for our product supported algorithms (PageRank, UnionFind /Connected Components, Louvain and Label Propagation). This aligns with our dual strategy to have many new algorithms created by our Labs team and a set of core algorithms with enterprise features supported and developed by our product engineering team.

As the product team implements new algorithms or variants of existing algorithms, we are giving users early access to these new features, via the added a beta namespace (algo.beta.

Whenever new beta features are rolled out, these will be explained in the release notes. As we test and mature beta algos, they will eventually mature into the primary namespace.

General Algorithm Improvements

Users can now terminate product-supported algorithms during graph loading and result write. Previously, termination was only enabled during the actual computation of the algorithm. Algorithms may be terminated by ctrl-c in Cypher shell or clicking (x) in Neo4j browser.

We’ve also increased histogram accuracy in community detection algorithms by increasing the number of significant digits to 5 (from 2) – enabling us to give accurate results for the 100th percentile of nodes per communities. For those that do not want the histogram output, you can prune down the statistical results using the Yield clause.

PageRank

The PageRank algorithm now includes a tolerance parameter, which allows the user to run PageRank until values stabilize within the specified tolerance window. PageRank usually converges on results after enough iterations; in some scenarios it’s not mathematically possible, and in other cases it would simply take too long.

So when we only use an iteration limit, we are left to hope values converge in that iteration window and that we are not iterating unnecessarily and wasting time. Alternatively, tolerance allows users to terminate pageRank as soon as values have stabilized. This gives better results as well as potentially decreasing calculation times.

Previous versions allowed users to specify the number of iterations, which provides performance consistency. Using both a tolerance parameter and a maximum number of iterations is a best practice to balance and tune for accuracy and performance needs.

Label Propagation

The Label Propagation algorithm now has the option to produce identical results when run multiple times on the same graph. The initial start node is seeded and ties are broken by selecting the smaller community label. These are both configurable parameters via the setting seedProperty.

This option enables users to choose either a traditional approach which selects start nodes and breaks ties randomly or a deterministic approach. This change helps users in production settings where consistency is important.

As part of this update, the default parameter values for seeding and writing have been removed and users must specify the seedProperty (seedProperty is the new name for the former partitionProperty parameter.)

To prevent accidental overwrites (when users run an algorithm multiple times on the same graph and incorrectly write results back to the same property), we now require users to specify a value for the writeProperty parameter. If no writeProperty is specified, results will not be written back to the graph.

Label Propagation is the first algorithm with a beta implementation (algo.beta.labelPropagation), which previews the new syntax for seeding labels.

Connected Components (Union Find)

We have a new parallel implementation of Connected Components (Union Find) as makes better use of the available threads and consumes less memory.

These improvements are all included under algo.unionFind and obviate the need for previous experimental variations (algo.unionFind.forkJoin, algo.unionFind.forkJoinMerge, algo.unionFind.queue) which have been deprecated. Union Find is now significantly faster and requires less memory in heap to complete.

Like Label Propagation, the Union Find algorithm now also has an added option for a seedProperty parameter to set initial partition values. This enables users to preserve the original community IDs even after executing the algorithm multiple times and with the addition of new data.

To make seeding efficient, it runs concurrently on multiple threads and only writes on changed/new properties. This feature was built specifically for users who need to run Label Propagation on the same data set – with new data added incrementally – in an efficient way.

We also added a consecutiveIds parameter to allow users to specify that partitions should be labeled with successive integers. This eliminates numeric gaps in our community ID labels for easier reading.

Louvain Modularity

The Louvain Modularity algorithm has several optimizations to increase performance.

We optimized the way two internal data structures are populated, which reduces run time, and have reduced the algorithm’s memory footprint. We also removed some indirection to streamline processes and reduced the time taken to read relationship weights.

Conclusion

It’s exciting to see data scientists test the limits (and sometimes beyond!) of what can be accomplished using graph algorithms. We hope you find these recent optimization beneficial and put the new features to good use.

And as always, please let us know how we can continue to improve, what capabilities you need or new algorithms should be included!