Neo4j’s Vector Search: Unlocking Deeper Insights for AI-Powered Applications

Chief Product Officer, Neo4j

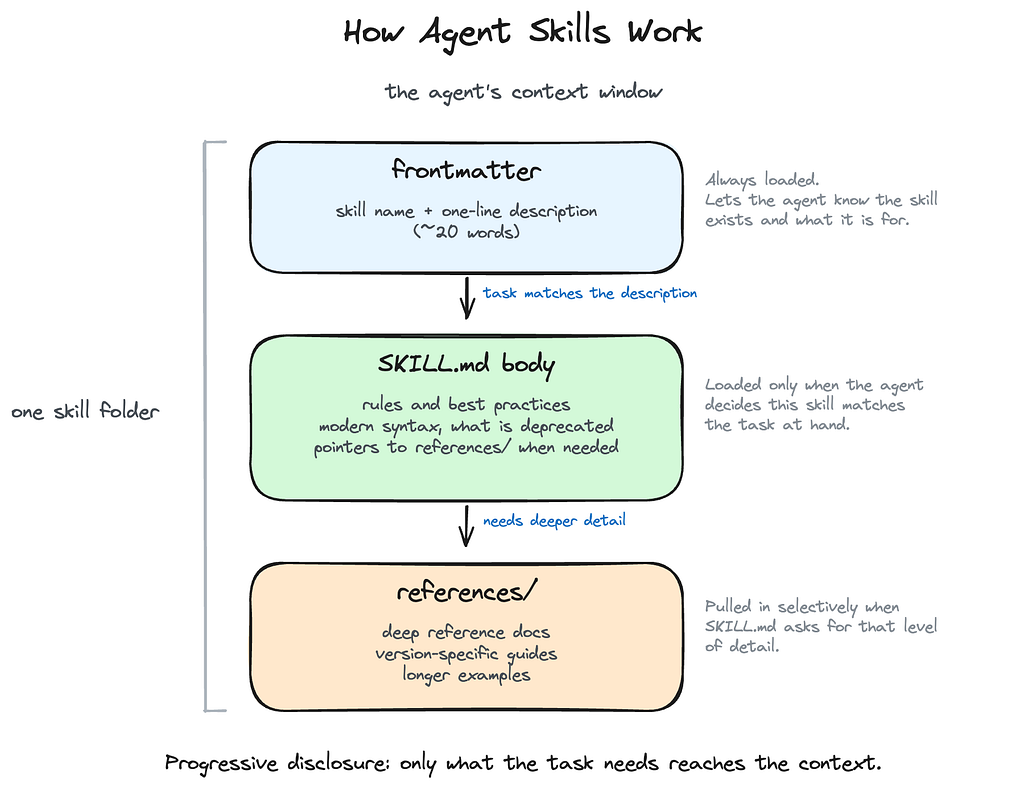

Today marks an exciting new milestone for Neo4j with the introduction of native vector search. Available to all Neo4j customers, vector search provides a simple way to quickly find contextually related information and, in turn, helps teams uncover hidden relationships. Now fully integrated into Neo4j AuraDB and Neo4j Graph Database, it lets customers draw richer insights from generative AI applications by matching on the meaning behind words rather than on keywords.

For example, a query about budget-friendly cameras for teenagers will return low-cost, durable options in fun colors by interpreting the contextual meaning. The question is first turned into a vector embedding by a text-embedding model, and that embedding is then passed into the Cypher query as the search parameter.

```cypher
// find products by similarity search in vector index
CALL db.index.vector.queryNodes('products', 5, $embedding) YIELD node AS product, score
// enrich with additional explicit relationships from the knowledge graph
MATCH (product)-[:HAS_CATEGORY]->(cat), (product)-[:BY_BRAND]->(brand)
OPTIONAL MATCH (product)<-[:BOUGHT]-(customer)-[rated:RATED]->(product)
WHERE rated.rating = 5
OPTIONAL MATCH (product)-[:HAS_REVIEW]->(review)<-[:WROTE]-(customer)
RETURN product.Name, product.Description, brand.Name, cat.Name,
       collect(review { .Date, .Text })[0..5] AS reviews
```

Vector search, combined with knowledge graphs, is a critical capability for grounding LLMs to improve the accuracy of responses. Grounding means supplying the LLM with information relevant to the user's question before it generates and returns a response. Grounding LLMs with a Neo4j knowledge graph improves accuracy, context, and explainability by giving the LLM both explicit, factual information from the graph and implicit, contextually relevant information from vector search. This combination provides users with the most relevant and contextually accurate responses.
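As a rough illustration of the grounding step, the Python sketch below formats retrieved records into context that is placed ahead of the user's question. It is an assumption-laden sketch, not Neo4j's or any LLM vendor's API: the function name `build_grounded_prompt`, the record keys, and the sample product are all hypothetical, chosen to mirror the shape of the query's RETURN clause.

```python
def build_grounded_prompt(question, records, max_reviews=2):
    """Place retrieved graph facts ahead of the user's question."""
    lines = ["Answer using only the product facts below.", ""]
    for i, rec in enumerate(records, start=1):
        # One line of explicit facts per retrieved product
        lines.append(
            f"{i}. {rec['name']} ({rec['brand']}, {rec['category']}): "
            f"{rec['description']}"
        )
        # Attach at most max_reviews reviews as supporting context
        for review in rec.get("reviews", [])[:max_reviews]:
            lines.append(f"   - review: {review['text']}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)


# Hypothetical, made-up record standing in for one row of query results
records = [
    {
        "name": "SnapKid Mini",
        "brand": "Acme",
        "category": "Compact cameras",
        "description": "Low-cost, shock-resistant camera in bright colors.",
        "reviews": [{"date": "2023-08-01", "text": "Survived a drop."}],
    }
]

prompt = build_grounded_prompt(
    "Which budget-friendly camera suits a teenager?", records
)
print(prompt)
```

The resulting string would then be sent to the LLM of your choice. Keeping the facts in a numbered list makes it easier to trace a response back to the records that informed it.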

Grounding LLMs with Neo4j improves the accuracy of enterprise-wide applications and advances key use cases such as:

- Enhanced fraud detection: Vector search can improve the breadth of fraud detection by capturing implicit relationships that have not been modeled in the graph.

- Personalized recommendations: Vector search considers the context of the user’s query and preferences, providing personalized and relevant recommendations by presenting answers with similar specifications and features tailored to the user.

- Discovery of new answers: Vector search results can reveal non-obvious yet pertinent answers with similar attributes that users may not have initially considered, expanding their choices and deepening insights.

Bringing Vector Search Into Neo4j

With the machine learning model of your choice, you can encode any type of data, such as documents, video, audio, or images, as a vector. Neo4j now allows users to create a vector index that performs fast approximate k-nearest neighbor (KNN) searches using either cosine or Euclidean similarity. Our new vector indexing capability uses an algorithm called Hierarchical Navigable Small World (HNSW) to identify similar vectors efficiently. The more similar the vectors, the higher the relevance. In the Neo4j Graph Database, vector indexes can be created on node properties containing embeddings of unstructured data.
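To make the two similarity measures concrete, here is a minimal Python sketch of exact (brute-force) k-nearest-neighbor search under cosine similarity and Euclidean distance. It is not Neo4j's implementation and does not implement HNSW; an HNSW index approximates the result of this kind of full scan without comparing the query against every stored vector. The function names and the three-dimensional toy embeddings are made up for illustration, and real embeddings typically have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def exact_knn(query, vectors, k, metric="cosine"):
    """Return the k closest (name, score) pairs by scanning every vector."""
    if metric == "cosine":
        scored = [(n, cosine_similarity(query, v)) for n, v in vectors.items()]
        scored.sort(key=lambda item: item[1], reverse=True)  # higher is closer
    elif metric == "euclidean":
        scored = [(n, euclidean_distance(query, v)) for n, v in vectors.items()]
        scored.sort(key=lambda item: item[1])  # lower is closer
    else:
        raise ValueError(f"unknown metric: {metric}")
    return scored[:k]


# Made-up toy embeddings; a real model would produce these vectors
products = {
    "kids_camera": [0.9, 0.1, 0.0],
    "pro_dslr": [0.1, 0.9, 0.2],
    "action_cam": [0.7, 0.3, 0.1],
}
query = [1.0, 0.0, 0.0]

top_cosine = exact_knn(query, products, k=2, metric="cosine")
top_euclid = exact_knn(query, products, k=2, metric="euclidean")
print([name for name, _ in top_cosine])  # kids_camera first, then action_cam
print([name for name, _ in top_euclid])  # same ranking for this toy data
```

The full scan costs one comparison per stored vector for every query, which is why an approximate index becomes attractive as the number of embeddings grows.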

The ROI of Vector Search From Neo4j Customers

Early adopter customers are already seeing the potential of Neo4j’s vector search in knowledge graphs and AI applications, with promising results. For example:

- In the pharmaceutical industry, one customer integrated vector search into its knowledge graph, slashing regulatory report automation time by 75% using context-aware entity linking.

- For an insurance firm, vector search and knowledge graphs delivered 90% faster responses to customer inquiries.

- And a global bank boosted legal contract review efficiency by 46% by deploying vector similarity search to precisely extract key clauses across massive document sets.

These applications demonstrate the vast potential of combining vector searches with knowledge graphs to drive transformative efficiency improvements across sectors.

At Neo4j, relationships drive our technology, and vector indexing is the latest example of how we enable deeper understanding. Blending precise knowledge graphs with contextual vectors creates opportunities to spark breakthroughs and uncover hidden relationships. As pioneers in Graph Database & Analytics, we’ll continue forging innovations through connections. Now it’s your turn – we’re excited to see what you build.