From recall to reasoning: How context graphs upgrade an agent’s brain

Product Manager, Neo4j


Despite smarter LLMs and the growing availability of tools, AI agents seem to hit an evolutionary ceiling. They hallucinate, repeat mistakes, and struggle to adapt to new environments.

The root cause? Their brains aren’t built for reasoning.

While agents can recall the what, they struggle to grasp the why. An unstructured memory leads them straight into a context wall, where simple retrieval fails because the semantic links and logic are missing.

To break this wall, agents need more than a filing cabinet of memories. They need a context graph. Instead of a disconnected set of facts, a context graph is a web of knowledge that maps the relationships between the environment, the decisions made, and the outcomes. It is the bridge from recall to reasoning.

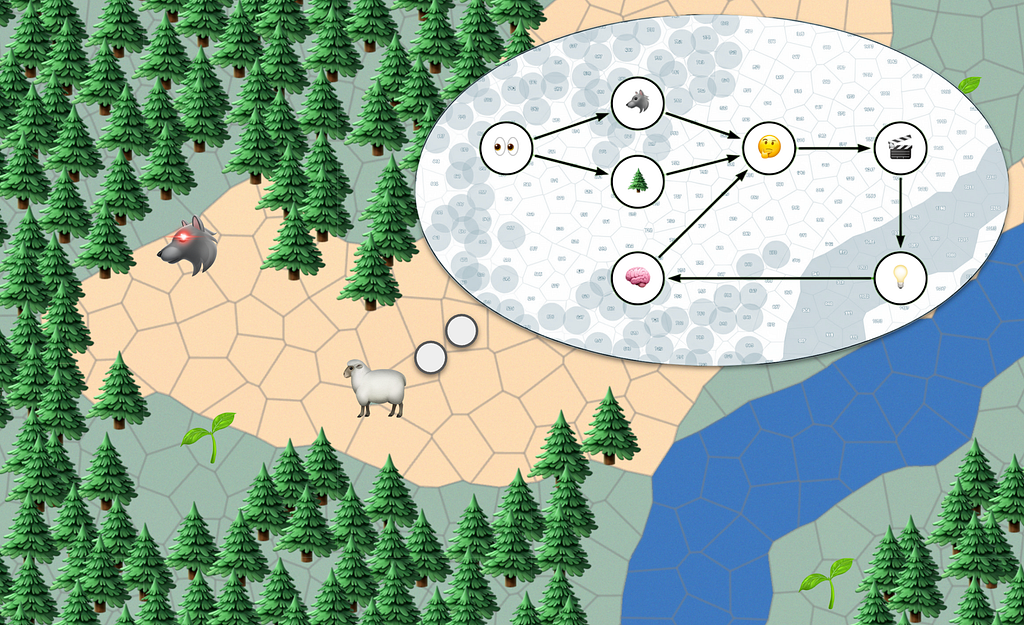

To understand why agents bump into the context wall, let’s observe an AI agent in the wild. This blog post is the story of how an agentic sheep 🐑 ventures through a digital forest and evolves through three levels:

- Level 1: Agent with short-term memory (Reactive)

- Level 2: Agent with long-term memory (Recall)

- Level 3: Agent with contextual memory (Reasoning)

We’ll see that only with contextual memory can our sheep truly learn and eventually outsmart the wolves.

Introducing the agentic sheep

To understand how agents evolve, we need a test subject. Let’s simulate a single, hungry protagonist: a sheep.

Just as enterprise agents operate within the world of a business, the sheep operates within a forest. The forest has open land to traverse, grass to eat, and trees that block the way. We can model this forest as a graph, a network of connected locations.

- Nodes: Represent specific locations (Grass, Sand, Ocean, Trees).

- Edges: Represent the paths (Relationships) between those locations.
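As a minimal sketch, the forest could be held as an adjacency structure in plain Python. The tile ids (`A1`, `B1`, ...), the `Forest` class, and its method names are illustrative assumptions, not part of the actual simulation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Forest:
    """A tiny world model: locations are nodes, paths are edges."""
    terrain: dict[str, str] = field(default_factory=dict)     # node id -> terrain type
    paths: dict[str, set[str]] = field(default_factory=dict)  # node id -> adjacent node ids

    def add_location(self, node_id: str, terrain: str) -> None:
        self.terrain[node_id] = terrain
        self.paths.setdefault(node_id, set())

    def add_path(self, a: str, b: str) -> None:
        # Assumption: paths can be walked in both directions, so store the edge twice.
        self.paths[a].add(b)
        self.paths[b].add(a)

    def neighbors(self, node_id: str) -> list[str]:
        return sorted(self.paths[node_id])


forest = Forest()
for node_id, terrain in [("A1", "Grass"), ("A2", "Sand"), ("B1", "Trees"), ("B2", "Ocean")]:
    forest.add_location(node_id, terrain)
forest.add_path("A1", "A2")
forest.add_path("A1", "B1")
forest.add_path("A2", "B2")

print(forest.neighbors("A1"))  # ['A2', 'B1']
```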

This forest is an example of a world model. In real life, you could call this a digital twin: imagine a company mapped by its people, processes, and products. Within this world, our sheep explores autonomously by operating on a classic agentic loop:

- Observe its surroundings.

- Decide what to do next.

- Act and take the next step.
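The loop above can be sketched in a few lines of Python. Everything here is a simplification: the world is a one-dimensional strip of tiles, and `decide` is a hard-coded rule standing in for what would be an LLM call in a real agent. The function names are hypothetical.

```python
from __future__ import annotations


def observe(world: dict[int, str], position: int) -> dict:
    """Report what is on the current tile and on the tiles next to it."""
    return {
        "here": world[position],
        "nearby": {p: world[p] for p in (position - 1, position + 1) if p in world},
    }


def decide(observation: dict) -> tuple[str, int | None]:
    """Stand-in for the LLM: eat if on grass, otherwise walk toward visible grass."""
    if observation["here"] == "Grass":
        return ("eat", None)
    for tile, terrain in observation["nearby"].items():
        if terrain == "Grass":
            return ("walk", tile)
    return ("rest", None)


def act(action: tuple[str, int | None], position: int) -> int:
    """Apply the action and return the new position."""
    kind, target = action
    return target if kind == "walk" else position


world = {0: "Sand", 1: "Sand", 2: "Grass"}  # a strip of three tiles
position = 1
history = []
for _ in range(3):  # three turns of observe -> decide -> act
    observation = observe(world, position)
    action = decide(observation)
    position = act(action, position)
    history.append(action[0])

print(history)  # ['walk', 'eat', 'eat']
```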

Level 1: The reactive agent (short-term memory)

The first evolution of our sheep agent contains three core components:

1. Prompt instructions. This is where we give the sheep its instinct: a craving for food. To model this, the sheep has an energy level, ranging from 0 (starving) to 100 (satiated). Every minute, its metabolism decreases energy by 5. Eating grass recovers energy. If energy hits 0, the simulation ends.

2. Tools. Tools help the sheep interact with its environment. Just like a chatbot has tools for searching the web or creating images, the sheep can:

- [Look around] Observes the environment within a certain distance. A sheep cannot see through trees. Cost: 0 energy.

- [Walk] Move to an adjacent tile. Cost: 5 energy.

- [Eat] Consume grass to restore energy to 100.

- [Rest] Stay put. Cost: 0 energy (metabolism still applies).

3. Context window. The context window holds the sheep’s short-term memory (like a limited chat history), allowing it to react to recent observations and actions. The size of the window is limited, so older events drop out.
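To make these rules concrete, here is a minimal sketch of the energy bookkeeping and a bounded context window. It assumes that every action takes one minute and that metabolism is charged on top of the action cost; the post does not spell out how the two combine. The `Sheep` class and the window size of 3 are illustrative choices.

```python
from collections import deque

METABOLISM = 5    # energy lost every minute (from the rules above)
WALK_COST = 5     # extra energy for moving one tile
MAX_ENERGY = 100


class Sheep:
    def __init__(self, window_size: int = 3):
        self.energy = MAX_ENERGY
        # Short-term memory: a deque with maxlen silently drops the oldest entry.
        self.context_window = deque(maxlen=window_size)

    def step(self, action: str) -> None:
        """Apply one minute of metabolism plus the chosen action."""
        self.energy -= METABOLISM
        if action == "walk":
            self.energy -= WALK_COST
        self.energy = max(0, self.energy)
        if action == "eat" and self.energy > 0:
            self.energy = MAX_ENERGY
        self.context_window.append(f"{action} -> energy {self.energy}")

    @property
    def alive(self) -> bool:
        return self.energy > 0


sheep = Sheep(window_size=3)
for action in ["look", "walk", "walk", "rest"]:
    sheep.step(action)

print(sheep.energy)                # 70
print(list(sheep.context_window))  # the first "look" has already been forgotten
```

Using `deque(maxlen=...)` mirrors the key property of a context window: nothing is ever summarized or archived, the oldest event simply disappears.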

With the agent ready, we can now see our sheep in action.

Simulating the agentic sheep

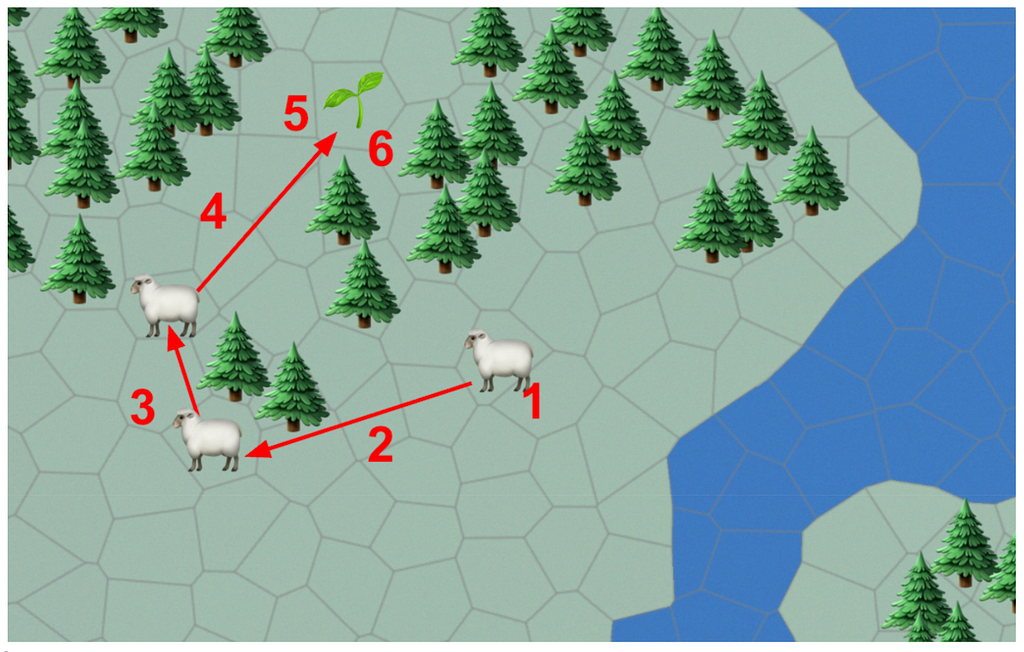

It gets more interesting when there is no food in sight. The agent will autonomously decide to explore.

So far so good. But life is hardly this easy.

Introducing the agentic wolf 🐺

The sheep isn’t the only creature roaming the forests. The wolf agent operates on the same core loop, but with more malicious intent. Just like the sheep, the wolf is driven by its instincts and available actions.

Prompt instructions. The wolf’s energy ranges from 0 to 100. Every minute, standard metabolism decreases energy by 5. If energy hits 0, the simulation ends for the wolf.

Tools.

- [Look around] Observes the environment within a maximum distance. Wolves have superior vision and can see through the leaves of trees. Cost: 0 energy.

- [Walk] Move to an adjacent tile. Cost: 5 energy.

- [Sprint] Run to a tile two steps away. Cost: 25 energy.

- [Eat] Consume a sheep to restore energy to 100.

- [Rest] Stay put. Cost: 0 energy.

We also expand the sheep’s prompt instructions (instinct) with one new rule: “If the wolf catches me, my energy level is set to 0, and the game is over.”

Based on these mechanics, we can infer two things:

- Wolves have the element of surprise. Because they can see through the leaves of trees, they can spot the sheep before the sheep sees them.

- Wolves are sprinters, not marathon runners. A sprint costs 25 energy, five times the cost of a walk. In a long chase, the wolf quickly runs out of energy, so a sheep can theoretically survive if it gets enough of a head start.
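A quick back-of-the-envelope check of the second point, assuming one action per minute and metabolism charged on top of the action cost (the post does not state how the two combine, so treat the exact numbers as illustrative). The `max_distance` helper is hypothetical.

```python
METABOLISM = 5
COSTS = {"walk": 5, "sprint": 25}
TILES = {"walk": 1, "sprint": 2}


def max_distance(action: str, energy: int = 100) -> tuple[int, int]:
    """Return (moves, tiles) covered by repeating one action while energy stays above 0."""
    per_minute = COSTS[action] + METABOLISM
    moves = 0
    while energy - per_minute > 0:
        energy -= per_minute
        moves += 1
    return moves, moves * TILES[action]


print(max_distance("sprint"))  # (3, 6)
print(max_distance("walk"))    # (9, 9)
```

Under these assumptions a wolf starting at full energy can sprint for three minutes and cover six tiles, while a walking sheep covers three tiles in the same time. The wolf gains only about three tiles before it is nearly exhausted, so a head start of roughly four tiles or more would let the sheep outlast the chase.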

Upgrading to level 2

The level 1 agent is quite basic. Its biggest flaw is that it cannot learn: if it runs into an area with wolves one day, the next day it will make the same mistake. And one day it might be too late. A sheep should thus be able to remember:

- “I saw a wolf in this area. I should stay away from here.”

- “This is a nice area. There’s lots of grass, I should remember to go here.”

This means that besides looking around, walking, eating, and resting, our agent needs one new tool:

- [Remember] Access the sheep’s memory to store or recall information.

The memory should contain where the sheep has been (observations), and what it did (actions). In the world of a chatbot agent, this would be the questions and answers.

For the sake of our story, memory is a list of embeddings: vector representations of each observation and the action that followed it.
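A minimal sketch of such a memory is shown below. The `embed` function is a toy bag-of-words stand-in; a real agent would call an embedding model instead. The `VectorMemory` class and its `store` and `recall` methods are hypothetical names for the two halves of the [Remember] tool.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real agent would call an embedding model here."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorMemory:
    def __init__(self):
        self.entries = []  # list of (embedding, observation, action)

    def store(self, observation: str, action: str) -> None:
        self.entries.append((embed(observation), observation, action))

    def recall(self, query: str, k: int = 2) -> list[tuple[str, str]]:
        """Return the k stored (observation, action) pairs most similar to the query."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[0]), reverse=True)
        return [(obs, act) for _, obs, act in ranked[:k]]


memory = VectorMemory()
memory.store("wolf behind trees near the river", "ran away")
memory.store("lots of grass in the open meadow", "ate grass")
memory.store("sand and ocean to the south", "turned back")

print(memory.recall("trees near the river", k=1))
# [('wolf behind trees near the river', 'ran away')]
```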

Our upgraded sheep’s brain is now able to record long-term memories.

The level 2 agent in action

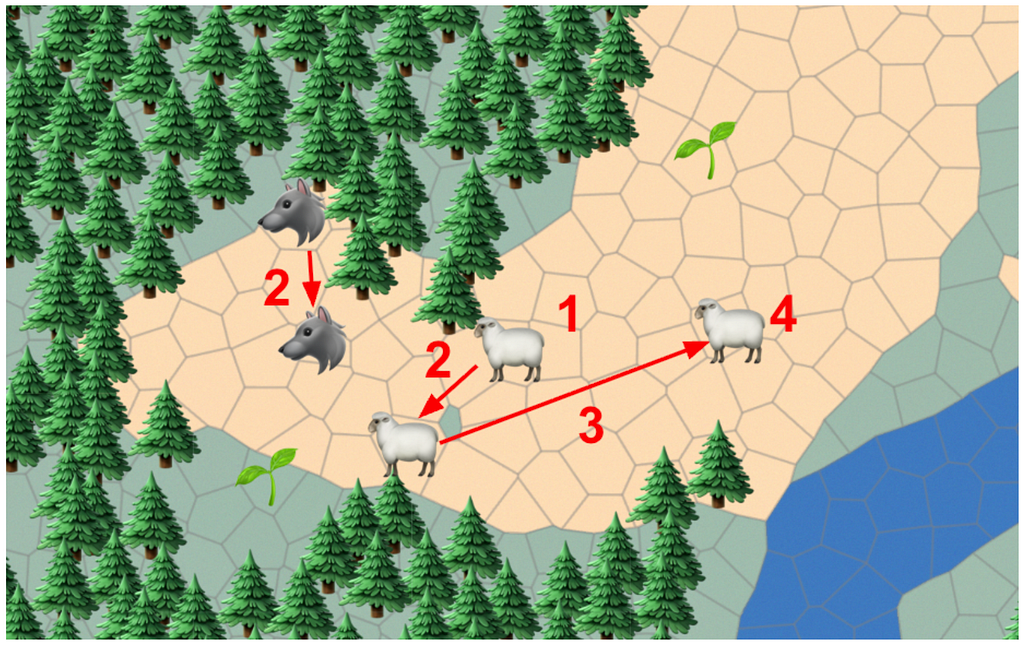

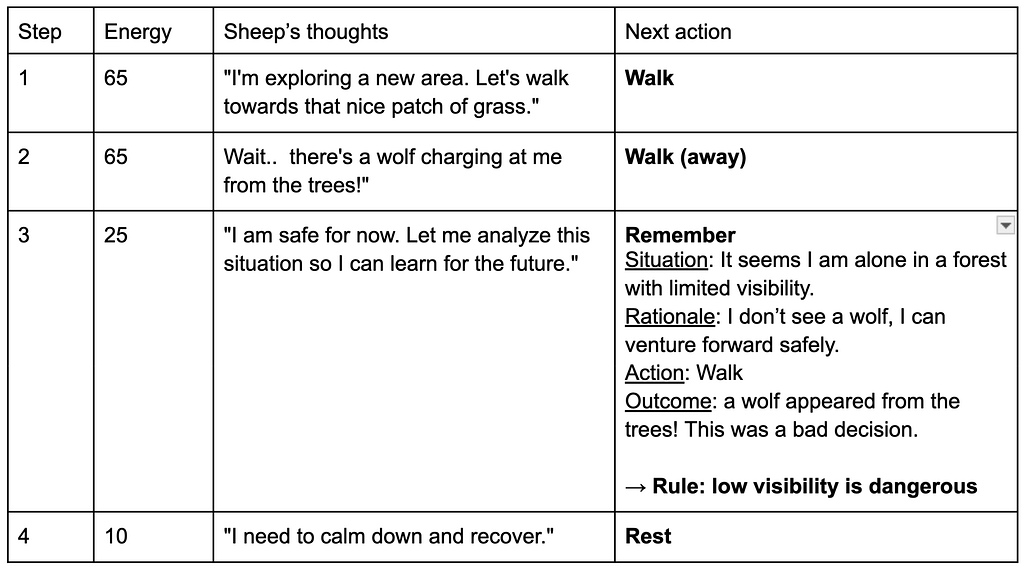

Consider the scenario where the sheep runs into a wolf.

The next day, the sheep can use its memory to avoid the situation.

The context wall

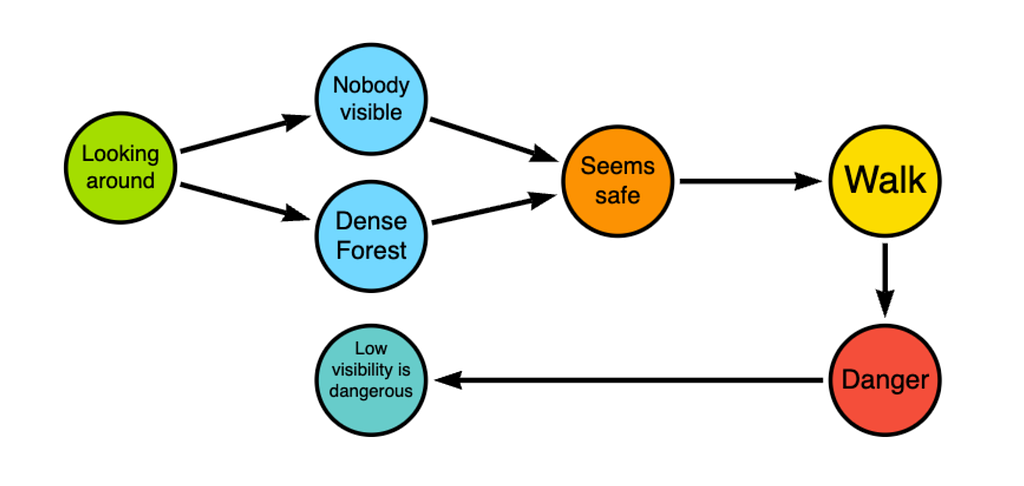

Here’s the catch. Regardless of whether an agent’s memory is a text log, keywords, or sophisticated vector embeddings, level 2 memory eventually hits a wall. The limitation isn’t a lack of data; it’s a lack of explicit relationships.

Back to the sheep. Because its memory is just a collection of disconnected facts, it doesn’t explicitly understand the underlying logic:

- That the trees provided cover…

- … which allowed the wolf to ambush…

- … which turned into a dangerous situation.

The sheep has recorded the history, but it hasn’t learned the rules of the game.

Here is why. At level 2, memories are retrieved by similarity. If our sheep enters a new forest, the memory returns past events whose embeddings are closest to the current observation. It hands the sheep a stack of vaguely related facts (“I saw a tree,” “I saw a wolf,” “I ate grass”). As the memory bank grows, the signal-to-noise ratio degrades: the agent is flooded with disconnected facts and struggles to weigh their relevance.

The context graph

Context graphs fix this. Instead of isolated memories, a context graph links concepts into a persistent logical structure: decisions, observations, and outcomes, all connected to the world model (the forest). This structure enables GraphRAG: the ability to surgically query specific logical paths rather than scanning a massive, noisy diary. If level 2 is a diary, level 3 is a mental model.

To build this model, the context graph records every experience as four explicit concepts:

- Situation: What did the environment look like? (Entities, terrain).

- Action: What did I do?

- Rationale: Why did I choose this path? (The hypothesis).

- Outcome: What was the result? (Reward or Penalty).

These concepts then enable explicit reasoning, building mental rules in the sheep’s head.
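As a sketch, one such experience could be captured in a small record type. The `Experience` class and the numeric `reward` field are assumptions made for illustration; the four named concepts come from the list above.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Experience:
    situation: str  # what the environment looked like
    action: str     # what the agent did
    rationale: str  # why it chose that action (the hypothesis)
    outcome: str    # what happened as a result
    reward: int     # assumed numeric score: positive = reward, negative = penalty


ambush = Experience(
    situation="grass patch next to dense trees, no wolf visible",
    action="walk to the grass and eat",
    rationale="no threat in sight, energy is low",
    outcome="wolf sprinted out of the trees",
    reward=-100,
)

print(asdict(ambush)["rationale"])  # no threat in sight, energy is low
```

Recording the rationale is what separates this from a plain log: when the outcome contradicts the hypothesis, the mismatch itself becomes something the agent can learn from.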

Level 3 transforms the sheep from a historian into a strategist. It doesn’t just avoid a location because of a bad memory; it learns to avoid low-visibility terrain because it understands the risk. The sheep isn’t just surviving the forest; it’s beginning to understand the fundamental laws of its world.

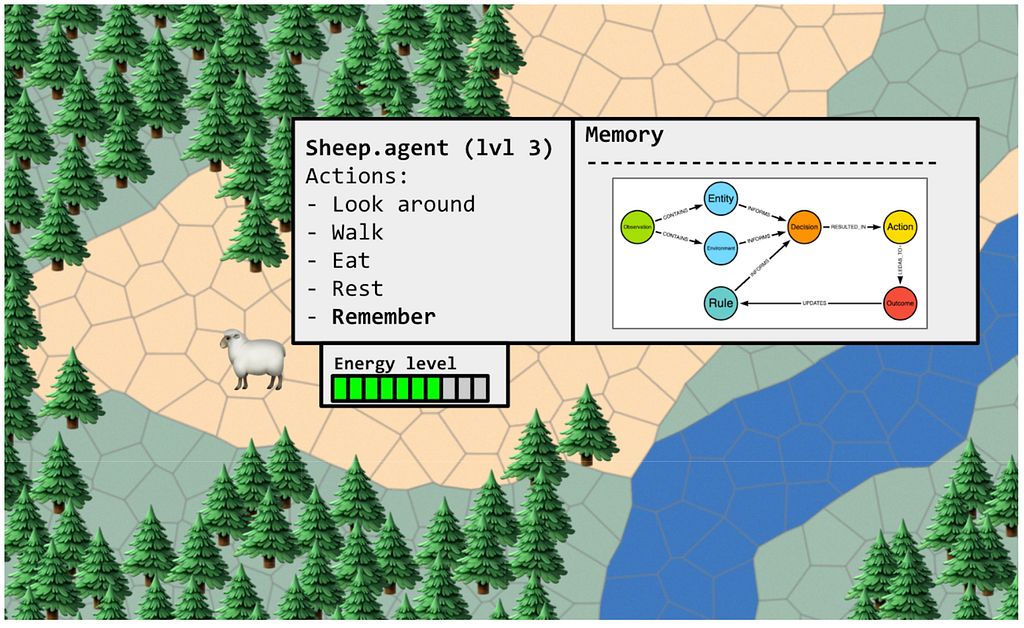

Upgrade to level 3

Back to our sheep. Extending the memory to level 3 is relatively straightforward.

- We extend our memory to explicitly model the situation: actors (animals) & environment (trees, land, water).

- We instruct our agent to explicitly record decisions, actions, outcomes, and rules. Here we rely on the power of the language model to convert its own ‘thinking logs’ into explicit concepts.

The memory now contains a link between real-world objects and abstract concepts (decisions), a great fit for reasoning. The best part: rules can be learned autonomously. Just like in the real world, a lot of knowledge is implicit. Context graphs provide a vessel for storing this knowledge.
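The sketch below shows the idea with an in-memory list of triples instead of a real graph database. The node ids and relationship names (`MADE_AT`, `ADJACENT_TO`, `HAS_TERRAIN`, and so on) are invented for this example and are not a schema from any Neo4j project.

```python
from collections import defaultdict


class ContextGraph:
    """A minimal in-memory graph stored as (source, relation, target) triples."""

    def __init__(self):
        self.out = defaultdict(list)  # source -> [(relation, target)]

    def link(self, source: str, relation: str, target: str) -> None:
        self.out[source].append((relation, target))

    def follow(self, source: str, *relations: str) -> list[str]:
        """Walk a fixed chain of relationship types starting from one node."""
        frontier = [source]
        for relation in relations:
            frontier = [t for node in frontier for r, t in self.out[node] if r == relation]
        return frontier


graph = ContextGraph()
# World model: tiles and their terrain
graph.link("tile:A1", "HAS_TERRAIN", "terrain:Grass")
graph.link("tile:B1", "HAS_TERRAIN", "terrain:Trees")
graph.link("tile:A1", "ADJACENT_TO", "tile:B1")
# One recorded experience, anchored to the world model
graph.link("decision:1", "MADE_AT", "tile:A1")
graph.link("decision:1", "CHOSE", "action:eat")
graph.link("decision:1", "BECAUSE", "rationale:no wolf visible")
graph.link("decision:1", "LED_TO", "outcome:ambushed by wolf")

# Which terrain was next to the sheep when it made this decision?
print(graph.follow("decision:1", "MADE_AT", "ADJACENT_TO", "HAS_TERRAIN"))  # ['terrain:Trees']
```

The path query answers a question a similarity search cannot: it connects the bad outcome to the terrain next to the decision, even though the word "trees" never appears in the decision itself.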

Learning & reasoning

Let’s replay the previous situation with the new contextual memory.

To be clear, the context graph itself doesn’t reason: the LLM does. The graph simply provides the structured, pre-computed scaffolding that allows the LLM to efficiently deduce rules like ‘Areas with low visibility can be dangerous’ without having to reconstruct the timeline from scratch.

Finally, the context graph allows the sheep to apply learnings to new situations.
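One way to picture this scaffolding: a graph query returns, for each past decision, the nearby terrain and the outcome, and a small aggregation turns that into a few lines of context for the LLM. The trace data, field names, and helper functions below are made up for illustration.

```python
from collections import Counter

# Assumed shape: what a graph query might return for each past decision.
traces = [
    {"nearby_terrain": "Trees", "ambushed": True},
    {"nearby_terrain": "Trees", "ambushed": True},
    {"nearby_terrain": "Trees", "ambushed": False},
    {"nearby_terrain": "Sand", "ambushed": False},
    {"nearby_terrain": "Sand", "ambushed": False},
]


def ambush_rate_by_terrain(traces: list[dict]) -> dict[str, float]:
    seen = Counter(t["nearby_terrain"] for t in traces)
    ambushed = Counter(t["nearby_terrain"] for t in traces if t["ambushed"])
    return {terrain: ambushed[terrain] / count for terrain, count in seen.items()}


def build_prompt_context(rates: dict[str, float]) -> str:
    """Turn the statistics into a few lines the LLM can reason over."""
    lines = [
        f"- near {terrain}: ambushed in {rate:.0%} of visits"
        for terrain, rate in sorted(rates.items())
    ]
    return "Past outcomes by nearby terrain:\n" + "\n".join(lines)


rates = ambush_rate_by_terrain(traces)
print(build_prompt_context(rates))
```

The code does not state the rule itself. It hands the LLM a compact, structured summary from which a rule such as "areas with low visibility can be dangerous" is easy to deduce, and because the summary is keyed by terrain rather than by location, it carries over to a forest the sheep has never visited.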

Reasoning memory in action

Reality check

The story of this sheep is more common than it seems. We equip agents with massive vector databases, endless conversation logs, and semantic search: essentially handing them a highly efficient, very long diary. We then expect them to solve complex problems based on mathematical similarity. But as anyone who has pushed an LLM application to production knows: similarity is not relevance. Most agents are stuck at level 2.

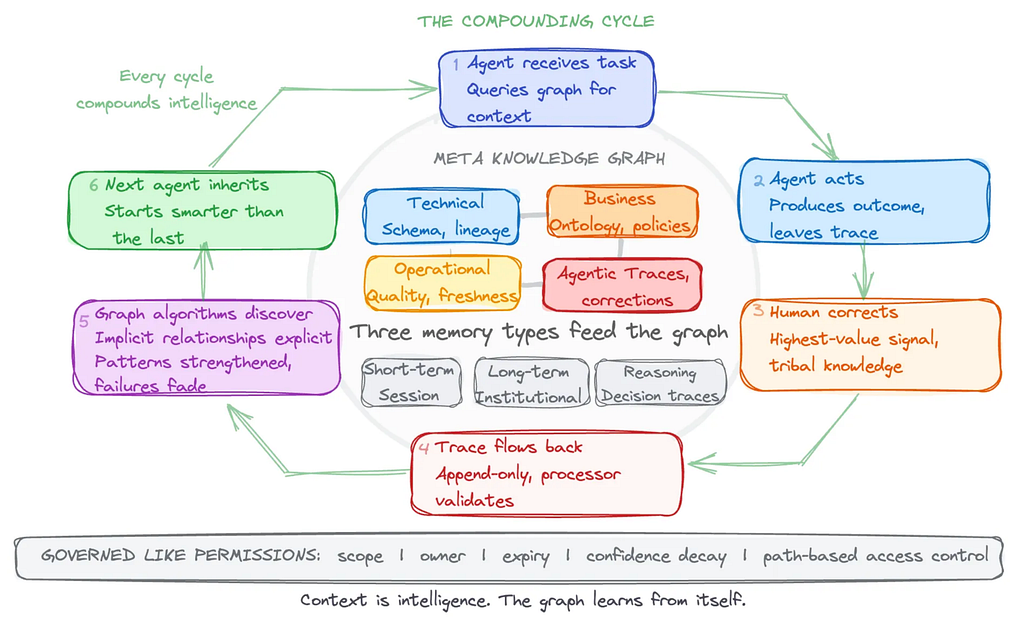

Because a Context Graph encodes the “why” alongside the “what,” it provides that missing relevance. It is the architectural difference between an agent that merely recalls and an agent that actively reasons.

Beyond basic reasoning, Level 3 memory unlocks much more:

- Memory Validation. The real world changes. Wolves adapt, and forests burn down. A Context Graph requires mechanisms for scope, expiry, and review to unlearn outdated information. Without this, your graph becomes a highly structured repository of stale assumptions.

- The Shared Brain (Hive-Mind). In a multi-agent system, the Context Graph acts as shared infrastructure. One agent’s hard-won lesson immediately becomes baseline knowledge for the rest of the fleet.

- The Intelligence Loop. Contextual memory compounds. Agents learn not just from the environment, but from analyzing their own historical reasoning, continuously refining their mental models.
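For the memory validation point, a minimal sketch of scope and expiry might look like this. The `Rule` fields, the time-to-live values, and the example rules are all hypothetical; a production setup would also need a review step, which is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    text: str
    scope: str       # where the rule applies, e.g. a region of the forest
    learned_at: int  # simulation minute when the rule was recorded
    ttl: int         # minutes before the rule must be confirmed again

    def is_fresh(self, now: int) -> bool:
        return now - self.learned_at < self.ttl


def usable_rules(rules: list[Rule], scope: str, now: int) -> list[Rule]:
    """Keep only rules that match the current scope and have not expired."""
    return [r for r in rules if r.scope == scope and r.is_fresh(now)]


rules = [
    Rule("Avoid grass next to dense trees", scope="north forest", learned_at=0, ttl=500),
    Rule("Wolves rest by the river at noon", scope="north forest", learned_at=0, ttl=100),
    Rule("The southern meadow is safe", scope="south meadow", learned_at=50, ttl=500),
]

print([r.text for r in usable_rules(rules, scope="north forest", now=200)])
# ['Avoid grass next to dense trees']
```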

Getting started with context graphs

If you’re looking to build your own context graph, several great use cases have been published. Open-source frameworks like the neo4j-agent-memory project help you create graph-backed reasoning without too much heavy lifting. And the create-context-graph.dev project can kickstart an example setup with a domain, memory, reasoning traces, and agents with a UI to demonstrate the impact.

As a general recipe, you should:

- Isolate a workflow. Pick a single, well-defined agentic task.

- Structure the trace. Instead of logging raw text, force the agent to record its experiences in the four-part structure: Situation, Action, Rationale, Outcome.

- Map the relationships. Connect these structured traces in a graph database rather than a flat vector store.

- Query the graph. Prompt your agent to query the relationships and rules within the graph, rather than executing a standard similarity search.

From recall to reasoning: How context graphs upgrade an agent’s brain was originally published in Neo4j Developer Blog on Medium.