APRA Just Put the Financial Sector on Notice Over AI. Government Agencies Need to Take Notes.

Senior Account Executive, Federal


Late last month, APRA dropped a relatively blunt letter on the desks of banking and insurance executives. The core message was hard to ignore: you are moving fast with AI, but your governance is lagging dangerously behind.

While the letter was aimed squarely at the financial sector, government agencies should be reading it like a crystal ball. If a bank messes up an AI rollout, it faces a fine and a PR headache.

If a government department gets it wrong, it compromises citizen privacy, breaks public trust, and impacts critical human services. The stakes are arguably higher, yet the public sector is wrestling with the exact same vulnerabilities APRA called out: leaders who don’t fully grasp the tech, security measures built for yesterday’s software, opaque supply chains, and audits that only look at a single point in time.

If we want to deploy AI safely in government, we have to stop treating governance as a paperwork exercise and start treating it as an engineering problem. Here is what a public sector AI platform needs to look like to actually meet the bar APRA just set.

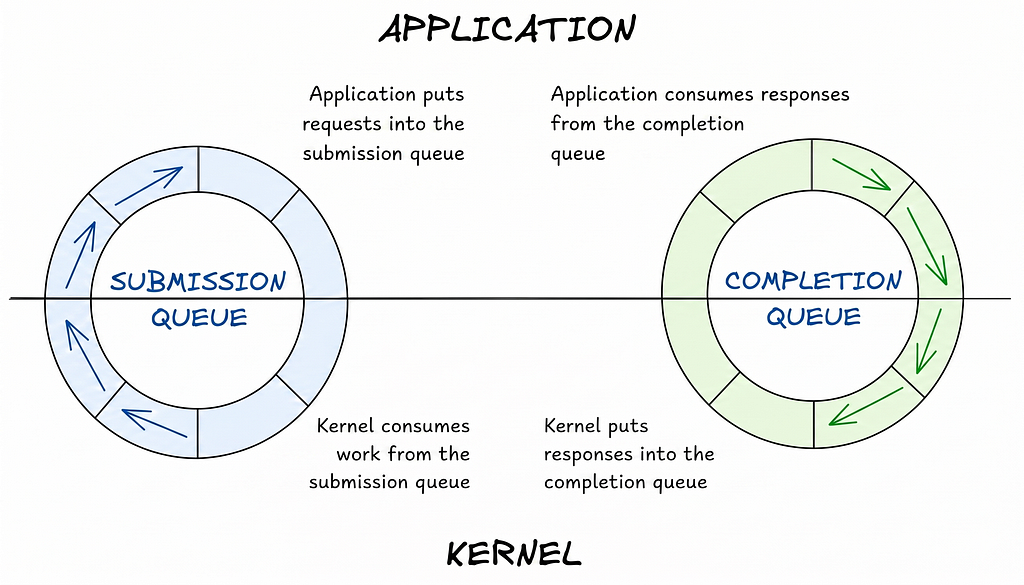

Governance as Code, Not PDFs

The biggest trap agencies fall into is relying on developers to manually fill out risk assessments after an AI tool is already built. This is how “Shadow AI” thrives.

Instead of passive registries, governance needs to be hardwired into the deployment pipeline (your CI/CD). It should act as an automated gatekeeper. If a developer tries to push a new citizen-facing chatbot live, the system should automatically check: Does this touch PII, and if so, has that use been assessed and approved? Has it passed the latest bias checks? If any check fails, the deployment fails. Period. You govern by default, not by request.
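To make the gatekeeper idea concrete, here is a minimal Python sketch of the kind of check a pipeline step could run before a release. Everything in it is an illustrative assumption rather than a prescribed standard: the `DeploymentManifest` fields, the `evaluate_gate` function, and the 90-day freshness window for bias checks are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class DeploymentManifest:
    """Hypothetical metadata a team declares alongside each AI release."""
    name: str
    citizen_facing: bool
    touches_pii: bool
    privacy_assessment_done: bool
    last_bias_check: Optional[date]  # None means no bias check on record


def evaluate_gate(manifest: DeploymentManifest, today: date,
                  max_bias_check_age_days: int = 90) -> List[str]:
    """Return the list of policy failures; an empty list means the gate passes.

    The 90-day default is an assumed policy value, not a regulatory figure.
    """
    failures = []
    if manifest.touches_pii and not manifest.privacy_assessment_done:
        failures.append("touches PII without a completed privacy assessment")
    if manifest.last_bias_check is None:
        failures.append("no bias check on record")
    elif (today - manifest.last_bias_check).days > max_bias_check_age_days:
        failures.append(
            f"bias check is older than {max_bias_check_age_days} days")
    return failures
```

In a real pipeline, the CI job would call `evaluate_gate` on the manifest and exit with a non-zero status whenever the returned list is non-empty, so the deployment stops without anyone having to ask for a review.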

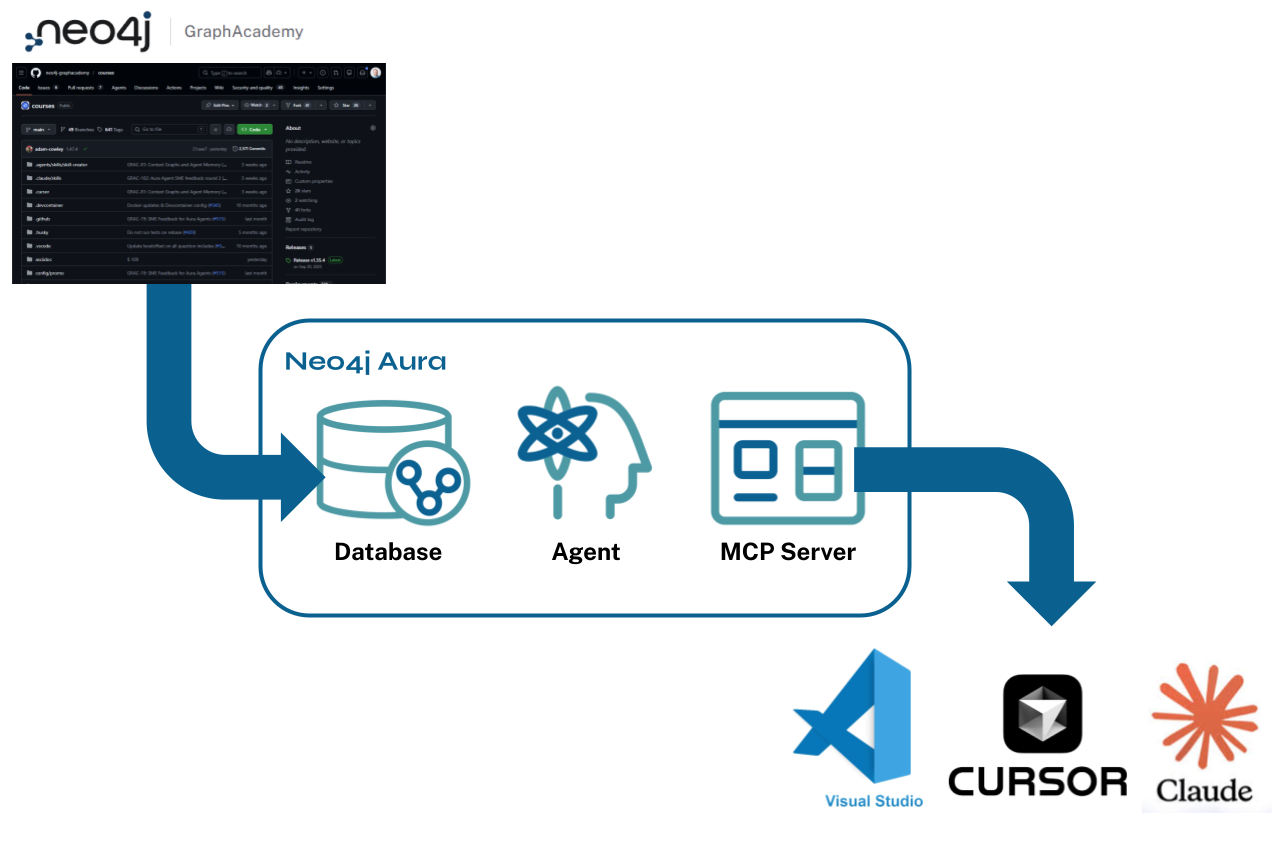

Untangling the AI Supply Chain

APRA rightly pointed out that we are becoming incredibly reliant on third-party foundation models. If you’re just using a spreadsheet to track which vendor models are running inside your agency, you’re flying blind.

This is where graph databases (like Neo4j) actually earn their keep. You need a dynamic map that shows the exact lineage of your tools. If an external vendor announces a vulnerability in their language model, you shouldn’t have to scramble to figure out your exposure. Your platform should instantly show you the line from that compromised model straight to the specific public services relying on it, allowing you to trigger fallbacks immediately.
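The lineage lookup described above is, at its core, a graph traversal. The sketch below uses a plain in-memory Python dictionary as a stand-in for a graph database, so it shows the shape of the query rather than any particular product's API. The component names and the `affected_by` helper are hypothetical.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical lineage map: each key is a component, each value lists the
# components that depend on it directly.
DEPENDENTS: Dict[str, List[str]] = {
    "vendor-llm-v2": ["summarisation-api", "intent-classifier"],
    "summarisation-api": ["case-notes-assistant"],
    "intent-classifier": ["citizen-chatbot"],
    "citizen-chatbot": ["payments-enquiry-service"],
    "in-house-ocr": ["forms-intake-service"],
}


def affected_by(component: str, dependents: Dict[str, List[str]]) -> Set[str]:
    """Breadth-first walk returning everything downstream of `component`."""
    seen: Set[str] = set()
    queue = deque([component])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:  # guard against cycles and repeat visits
                seen.add(child)
                queue.append(child)
    return seen
```

Calling `affected_by("vendor-llm-v2", DEPENDENTS)` returns every internal tool and public service that sits downstream of the compromised model, which is the list an operations team would need in order to trigger fallbacks. In a graph database the same question would typically be a single variable-length path query over the stored lineage.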

Moving Past “Point-in-Time” Audits

Traditional IT systems are relatively static; once they pass security testing, they usually behave. AI doesn’t. Models drift, learn, and degrade. An algorithm that was perfectly unbiased in January might start skewing its outputs by June.

To fix the “fragmented assurance” problem APRA highlighted, government platforms need continuous observability. This means having AI firewalls that actively watch inputs and outputs for things like prompt injection, and monitors that alert operations the second a model starts drifting outside of its approved baseline.
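One common way to put a number on "drifting outside of its approved baseline" is the Population Stability Index (PSI), which compares the distribution of a model's outputs today against the distribution recorded at approval time. The sketch below is one possible approach, not the only one. The function names are hypothetical, and the 0.2 alert threshold is an assumed rule of thumb that an agency would need to set for itself.

```python
import math
from typing import Sequence


def population_stability_index(baseline: Sequence[float],
                               current: Sequence[float],
                               eps: float = 1e-6) -> float:
    """PSI between two binned distributions given as proportions per bin.

    Both sequences must use the same bins in the same order. `eps` avoids
    taking the log of zero when a bin is empty.
    """
    if len(baseline) != len(current):
        raise ValueError("baseline and current must have the same bins")
    psi = 0.0
    for b, c in zip(baseline, current):
        b, c = max(b, eps), max(c, eps)
        psi += (c - b) * math.log(c / b)
    return psi


def drift_alert(baseline: Sequence[float], current: Sequence[float],
                threshold: float = 0.2) -> bool:
    """True when drift exceeds the (assumed) approved threshold."""
    return population_stability_index(baseline, current) > threshold
```

A scheduled job could compute this over each day's outputs and page operations when `drift_alert` returns `True`, turning the January-to-June skew from a surprise at audit time into an alert on the day it starts.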

Giving Executives Actual Visibility

Finally, we have to talk about leadership literacy. We can’t expect Department Secretaries or Risk Committees to read raw MLOps logs or untangle complex data pipelines. But they are the ones carrying the risk.

A responsible AI platform needs to translate technical telemetry into plain-English operational risk. Stop giving leaders jargon and start giving them clear dashboards that answer simple questions: What percentage of our high-risk systems have passed a recent fairness audit? Where is our greatest vendor concentration risk?
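Both dashboard questions above reduce to simple aggregations over an inventory of AI systems. The Python sketch below shows one way they might be computed. The `AISystem` record, its field names, and the 180-day definition of a "recent" audit are assumptions made for illustration.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass
class AISystem:
    """Hypothetical inventory record for one deployed AI system."""
    name: str
    risk_tier: str  # e.g. "high" or "low"
    vendor: str
    last_fairness_audit: Optional[date]  # None means never audited


def pct_high_risk_audited(systems: List[AISystem], today: date,
                          max_age_days: int = 180) -> Optional[float]:
    """Percentage of high-risk systems with a fairness audit in the window.

    Returns None when there are no high-risk systems to report on.
    """
    high_risk = [s for s in systems if s.risk_tier == "high"]
    if not high_risk:
        return None
    recent = [
        s for s in high_risk
        if s.last_fairness_audit is not None
        and (today - s.last_fairness_audit).days <= max_age_days
    ]
    return 100.0 * len(recent) / len(high_risk)


def top_vendor_share(systems: List[AISystem]) -> Optional[Tuple[str, float]]:
    """Vendor behind the most systems, with its percentage share."""
    if not systems:
        return None
    vendor, count = Counter(s.vendor for s in systems).most_common(1)[0]
    return vendor, 100.0 * count / len(systems)
```

The point of keeping the calculations this plain is that an executive can be told exactly what each number means: one figure for audit coverage of high-risk systems, and one for how much of the estate rests on a single vendor.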

There’s a misconception that heavy governance kills agility. But when you automate the guardrails and give leaders clear visibility, the opposite happens. Developers can ship faster because they know the system won’t let them accidentally break the law, and executives can approve projects because they finally trust the controls.