This Week in Neo4j: Neo4j Streams, Event Processing, Py2Neo, News as Knowledge Graphs

Curriculum Developer at Neo4j


Hello, everyone!

In this week’s episode, Neo4j Engineering announced the release of Neo4j Streams with many new features. Dirk Fahland describes his project to transform event logs into a knowledge graph and the templates he has published. Rik Van Bruggen writes about how he retrieved news articles and turned them into a knowledge graph. We also point you to one of our NODES 2020 presentations, in which Anurag Tandon introduces Bloom with a demo. Nigel Small has announced the latest release of Py2Neo. And finally, Madison Gipson shares how to pass parameters into Cypher code using Py2Neo.

Cheers,

Elaine and the Developer Relations team

Featured Community Member: Matthias Sieber

This week’s featured community member is Matthias Sieber.

Matthias Sieber – This Week’s Featured Community Member

Matthias was one of Neo4j’s first Certified Professionals. Not only is he a Neo4j expert, he has also helped many users and has earned the coveted @neo4j bronze badge on Stack Overflow. We thank him for all that he has done to help the Community.

Matthias has dabbled in a little bit of everything! Besides becoming involved with Neo4j development, he has developed web applications and has provided technical leadership for several key projects. He has also taught courses at the university level. But wait, there is more!

He started a company, Yomi.ai, that helps folks learn Japanese: “Yomi.ai stands for better literacy by reading real Japanese.” He has also found time to attend law school to earn a degree in dispute resolution. Wow!

Neo4j Streams 4.0.7 has been released

David Allen announced the release of Neo4j Streams 4.0.7. The biggest change is dynamic configuration: you can change your Kafka config on the fly without restarting the database, so you can create new databases in Neo4j and subscribe them to new Kafka topics as you go. Messages sent to the dead letter queue now also carry an extra database field, so in multi-database scenarios you can see which database an error came from, which makes it easier to fix.

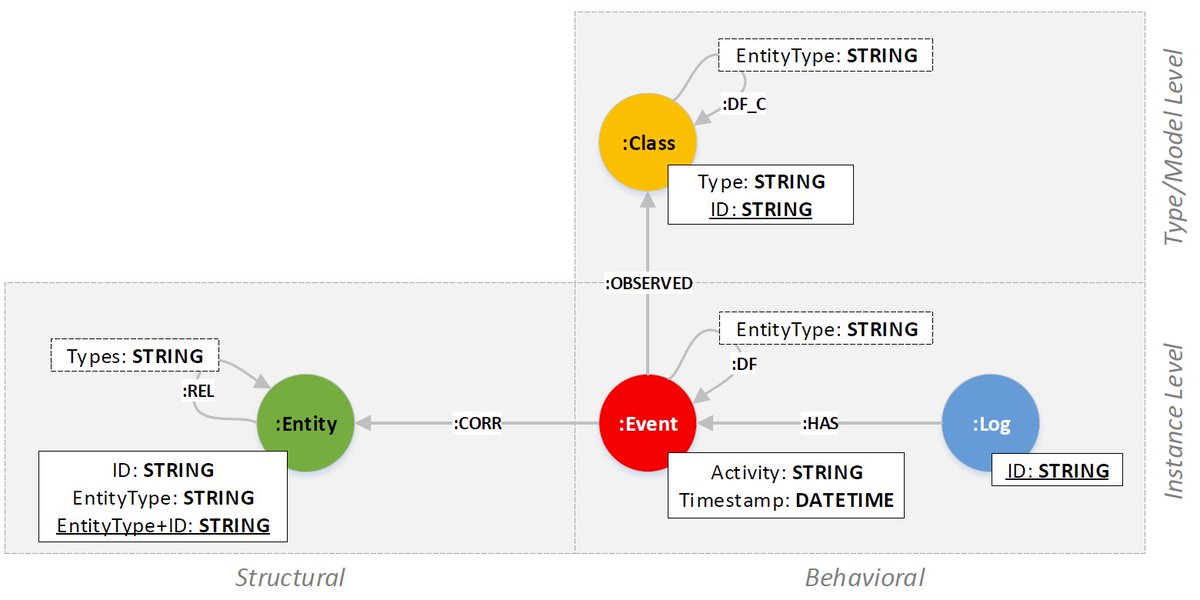

Analyzing process event data with Neo4j

Dirk Fahland has been working on a project to represent events in a knowledge graph. He has encoded time in the knowledge graph so that events can be better analyzed in a workflow. This lets users see how incidents, interactions, and changes operate on components and configurations. The project has published a set of templates that you can use to translate and integrate CSV log files for sets of events.

Making sense of the news with Neo4j, APOC and Google Cloud NLP

Rik Van Bruggen posted an interesting blog post about using Neo4j with the APOC library to aggregate data found in news articles as a knowledge graph for an organization. He gathered the data from the EventRegistry site and imported it into a Neo4j database. Then he used the wonderful APOC library and its access to the Google Cloud Platform Natural Language Processing API to enrich the graph into a true knowledge graph.

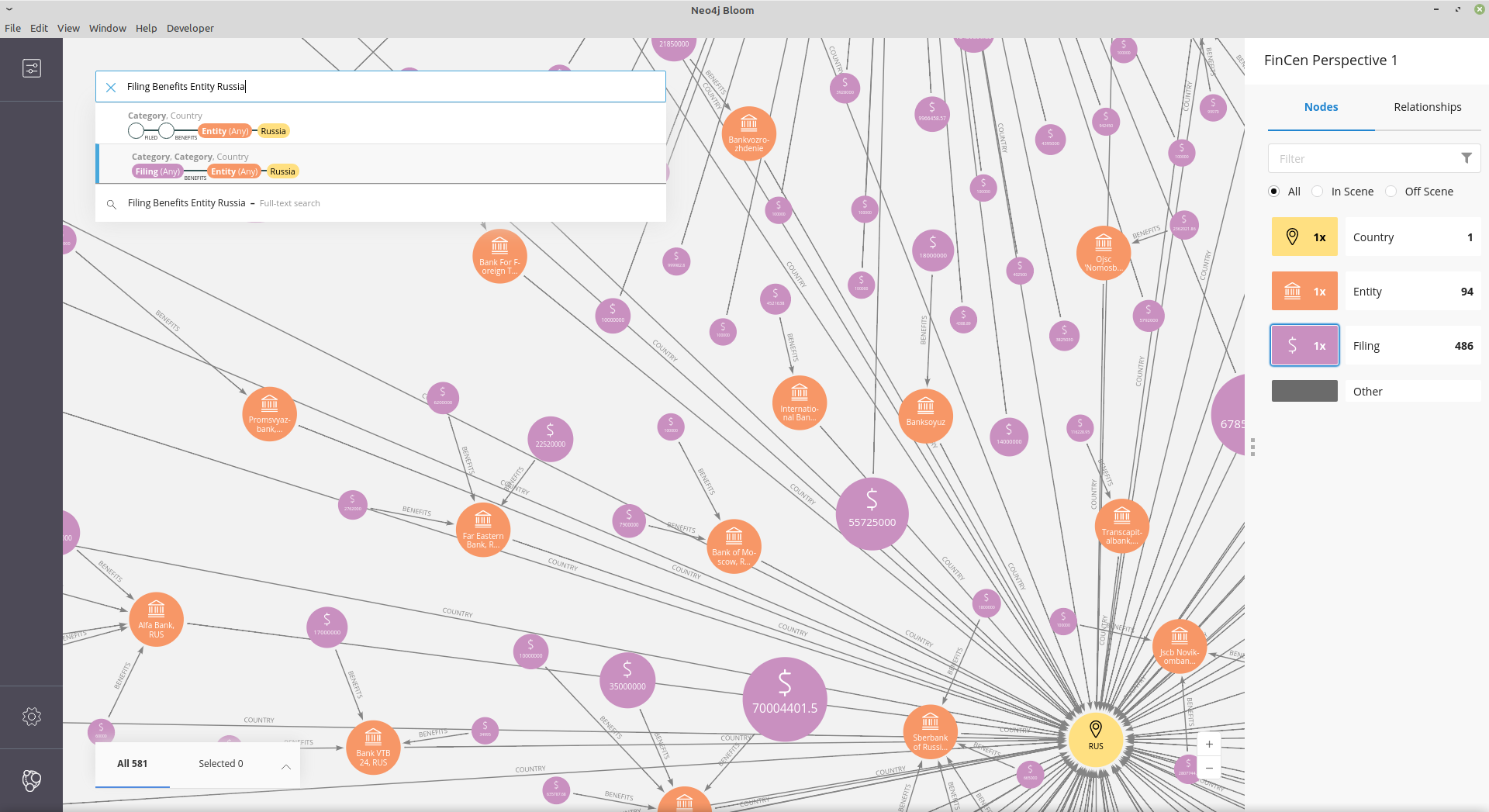

NODES 2020 Video of the Week: Getting Graph Questions Answered with Neo4j Bloom

Here is the session that Anurag Tandon presented about Neo4j Bloom and how you can use it to answer questions about the data in your graph. First, he provides an overview of why you would want to use Bloom and the features of the product. Then he shows you a demo of using Bloom to explore the Northwind dataset and how to create and use perspectives for viewing the data. He also explores other datasets in the demo.

Py2neo 2021.1.0 has just been released

Nigel Small has just announced the latest release of Py2Neo. Some of the highlights of this release include:

- Full support for routing

- Big stability improvements for multithreaded usage

- Graph.update() and Graph.query() methods can retry

- PEP249 (DB API 2.0) compatibility
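The `Graph.update()` and `Graph.query()` highlight can be pictured with a small sketch. It assumes both methods accept a Cypher string plus a parameter dictionary and that the result of `Graph.query()` can be iterated record by record, as described in the Py2neo documentation; the `Person` label and the helper names are hypothetical and only serve as an illustration.

```python
def add_person(graph, name):
    """Write work goes through Graph.update(), which the release says can retry."""
    # Person and name are hypothetical; the value is passed as a parameter.
    graph.update("MERGE (p:Person {name: $name})", {"name": name})


def list_people(graph):
    """Read-only work goes through Graph.query(), which the release says can retry."""
    cursor = graph.query(
        "MATCH (p:Person) RETURN p.name AS name ORDER BY name"
    )
    # Each record is indexed by the alias used in the RETURN clause.
    return [record["name"] for record in cursor]
```

Here `graph` would be a `py2neo.Graph` instance; keeping reads and writes in separate helpers makes it clear which of the two methods each piece of work uses.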


Running a Cypher Query With Parameters Through a Python Script

Madison Gipson has been using Py2Neo for her projects and found that copying and pasting queries from Neo4j Browser into code is the easiest way to run them from a Python script. Of course, a best practice is to use placeholders (parameters) in your Cypher queries so that the execution plan cache can be reused and queries do not need to be recompiled. In this article, she shows you how to pass parameters into your Cypher code.
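The idea can be sketched roughly as follows. This is not Madison's actual code: the `Person` label, the `name` property, the helper names, and the connection details are hypothetical, and the sketch assumes Py2neo's `Graph.run(cypher, parameters)` call. The query text stays constant and only the parameter map changes between calls.

```python
# Query text as it might be copied from Neo4j Browser.
# $name and $limit are Cypher parameters; Person and name are hypothetical.
PEOPLE_QUERY = """
MATCH (p:Person)
WHERE p.name STARTS WITH $name
RETURN p.name AS name
LIMIT $limit
"""


def connect(uri="bolt://localhost:7687", user="neo4j", password="password"):
    """Open a Py2neo Graph; the URI and credentials are placeholders."""
    from py2neo import Graph  # imported lazily, only needed for a real connection
    return Graph(uri, auth=(user, password))


def find_people(graph, name, limit=10):
    """Run the constant query text, passing values as parameters
    instead of formatting them into the string."""
    cursor = graph.run(PEOPLE_QUERY, parameters={"name": name, "limit": limit})
    return [record["name"] for record in cursor]
```

Calling `find_people(connect(), "Ali")` and then `find_people(connect(), "Bo")` sends the same query string both times with different parameter values, which is what allows a cached plan to be reused.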

Tweet of the Week

My favorite tweet last week was by Travis Fischer (https://twitter.com/transitive_bs):

Over the past month, @timsaval and I have been crawling the Clubhouse API with:

2.7M users

15M follows

1.2M invites

All stored in a neo4j database that’s super easy to explore and play around with visually

Check out your own social graph here: https://t.co/AzZDQDDeXE

— Travis Fischer (@transitive_bs) April 21, 2021

Don’t forget to RT if you liked it too!