Exploring the Intersection of Neo4j and Large Language Models

Sr. Staff Software Engineer, Neo4j


Insights from Project NaLLM

This is the last blog post of Neo4j’s NaLLM project. We started this project to explore, develop, and showcase practical uses of LLMs in conjunction with Neo4j. As part of this project, we have been building demonstrations and sharing them publicly in a GitHub repository, providing an open space for our community to observe, learn, and contribute. We have also been writing about our findings in blog posts. You can see the previous three blog posts here:

- Harnessing LLMs With Neo4j

- Fine-Tuning vs. Retrieval-Augmented Generation

- Multi-Hop Question Answering

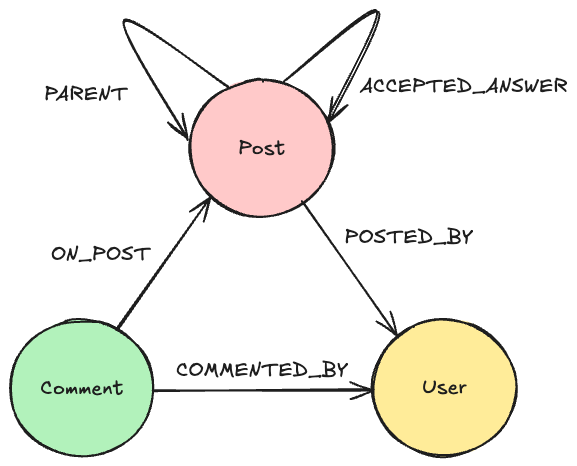

Project NaLLM aimed to understand the interaction between Large Language Models (LLMs) and Neo4j, the leading graph database platform. The focus was on three use cases: creating a natural language interface for a knowledge graph, building a knowledge graph from unstructured data, and building an application backed by a knowledge graph (KG) and an LLM in which the LLM isn’t at the center.

LLMs: Positive Aspects

One of the project’s significant findings was how well LLMs generate text. These models can create text that closely resembles human writing because they are trained on a wide array of written data. They predict the next word in a sentence and form sentences that fit the given context. This text generation ability can be on par with, and sometimes even better than, a human writer’s work. LLMs therefore show great potential for improving user experiences.

LLMs are also good at generating code and query languages like Cypher (Neo4j’s graph query language). While there is still room for improvement in this space, it’s remarkable that an LLM can answer a user’s question by generating a database query that retrieves the answer from a graph database.
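The question-to-query flow described above can be sketched roughly as follows. This is a minimal illustration, not the NaLLM implementation: `PROMPT_TEMPLATE`, `answer_question`, and the two injected callables (`llm` and `run_query`) are hypothetical names, and the model call and the database call are passed in as plain functions so the sketch stays independent of any particular LLM provider or driver.

```python
from typing import Callable, Dict, List

# Hypothetical prompt wording, for illustration only.
PROMPT_TEMPLATE = (
    "You translate questions into Cypher for a Neo4j database.\n"
    "Schema:\n{schema}\n"
    "Question: {question}\n"
    "Return only the Cypher query."
)


def answer_question(
    question: str,
    schema: str,
    llm: Callable[[str], str],
    run_query: Callable[[str], List[Dict]],
) -> List[Dict]:
    """Ask the LLM for a Cypher query, then run that query against the graph.

    `llm` and `run_query` are assumptions: any function that maps a prompt
    to text, and any function that executes Cypher and returns rows.
    """
    prompt = PROMPT_TEMPLATE.format(schema=schema, question=question)
    cypher = llm(prompt).strip()  # drop stray whitespace around the query
    return run_query(cypher)
```

In a real application, `llm` would wrap a call to a hosted or local model and `run_query` would wrap a database session; here they are left abstract on purpose.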

Challenges Encountered

However, LLMs also have certain limitations. For instance, while they’re good at generating text, they often struggle to understand the deeper meaning of what they’re writing. This becomes problematic when extracting data from a knowledge graph, a task that requires precise Cypher queries.

We also found that LLMs can identify and classify entities in large datasets but are not reliable when it comes to autonomously populating a knowledge graph. This means that human input or a more predictable pipeline is still necessary to confirm or adjust the classifications suggested by LLMs.
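One way to picture the "more predictable pipeline" mentioned above is a deterministic check that sits between the LLM's suggestions and the graph. The sketch below is an assumption about what such a step could look like, not the project's code: `Candidate`, `triage`, and the set of allowed labels are all hypothetical. Suggestions whose label is part of the known schema are accepted, and everything else is set aside for a person to confirm or adjust.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple


@dataclass(frozen=True)
class Candidate:
    name: str   # entity text proposed by the LLM
    label: str  # node label proposed by the LLM


def triage(
    candidates: Iterable[Candidate], allowed_labels: Set[str]
) -> Tuple[List[Candidate], List[Candidate]]:
    """Split LLM suggestions into accepted ones and ones needing human review."""
    accepted: List[Candidate] = []
    needs_review: List[Candidate] = []
    for candidate in candidates:
        # Accept only non-empty names whose label exists in the schema.
        if candidate.name.strip() and candidate.label in allowed_labels:
            accepted.append(candidate)
        else:
            needs_review.append(candidate)
    return accepted, needs_review
```

Only the accepted list would be written to the knowledge graph automatically; the review list keeps a human in the loop for anything the schema check cannot vouch for.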

Another key finding was the importance of “prompt engineering.” This is the process of designing specific input prompts to guide the LLM to produce the desired output. It’s a crucial factor in effectively using LLMs.
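As a rough illustration of what designing a prompt can involve, the sketch below assembles instructions, a graph schema, and a few worked examples into one input for a Cypher-generating model. The function name `build_prompt`, the instruction wording, and the example format are assumptions made for this sketch; they are not taken from the NaLLM repository.

```python
from typing import List, Tuple


def build_prompt(
    schema: str, examples: List[Tuple[str, str]], question: str
) -> str:
    """Assemble instructions, schema, and few-shot examples into one prompt."""
    parts = [
        "Translate the question into a single Cypher query.",
        "Use only the labels, relationship types, and properties in the schema.",
        "Return the query and nothing else.",
        "",
        "Schema:",
        schema,
        "",
    ]
    # Each example pairs a question with the Cypher we would like to see.
    for example_question, example_cypher in examples:
        parts += [f"Question: {example_question}", f"Cypher: {example_cypher}", ""]
    # End with the real question and an open slot for the model to fill in.
    parts += [f"Question: {question}", "Cypher:"]
    return "\n".join(parts)
```

Small changes to any of these pieces (stricter instructions, a more detailed schema, different examples) can change the generated query, which is why the prompt deserves the same care as the rest of the application.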

Looking Forward

Despite these challenges, Project NaLLM’s findings offer valuable insights into the potential combination of LLMs and Neo4j. These insights will form a strong basis for future research aimed at further developing and refining the technology. There is a lot of potential in the intersection of LLMs and Neo4j, and we’re eager to see what advancements the future will bring.

/ The NaLLM Project Core Team at Neo4j: Oskar Hane, Tomaz Bratanic, Noah Mayerhofer, Jon Harris

Exploring the Intersection of Neo4j and Large Language Models was originally published in Neo4j Developer Blog on Medium, where people are continuing the conversation by highlighting and responding to this story.