NeoDash 2.3: Create Graph Dashboards With LLM-Powered Natural Language Queries

Product Manager, Neo4j

We’re excited to announce that NeoDash 2.3 is finally here! This update brings a fresh new look, improved performance for handling large dashboards, and an array of exciting visualization features. You can check it out for yourself at https://neodash.graphapp.io/.

Beyond improvements to the core engine, we are also developing new extensions to improve the dashboard building experience. This time, we zoom in on a natural language interface for Neo4j, a new extension that lets you translate English to Cypher on the fly.

Natural Language To Cypher

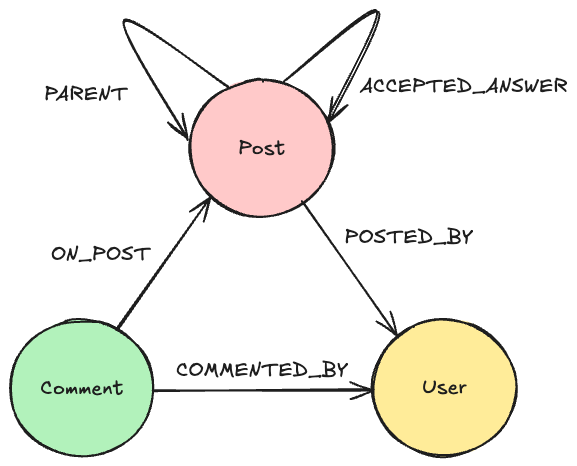

With NeoDash, anyone who knows Cypher should be able to create an interactive Neo4j dashboard in minutes. While Cypher is not difficult to learn (check out the Neo4j GraphAcademy), it still presents a hurdle for new users. Even for Cypher experts, automatically creating a query based on a business question sounds like a dream. This is where Generative AI comes in.

Beyond the hype, the rise of Generative AI has led to an amazing number of applications for large language models (LLMs). One thing that LLMs are inherently great at is translation. As it turns out, translation isn’t limited to human languages (English to Spanish) but also works for computer languages like Python or SQL and, most importantly, Cypher.

Over the past months, there has been an astonishing amount of research done around graphs and generative AI. APOC is adding support for integration with major model providers, and there is great work done on creating synthetic graph data with LLMs. On the text-to-Cypher front, the team of Tomaz Bratanic has made giant leaps forward in prompt engineering techniques to create the best possible queries for a given Neo4j database.

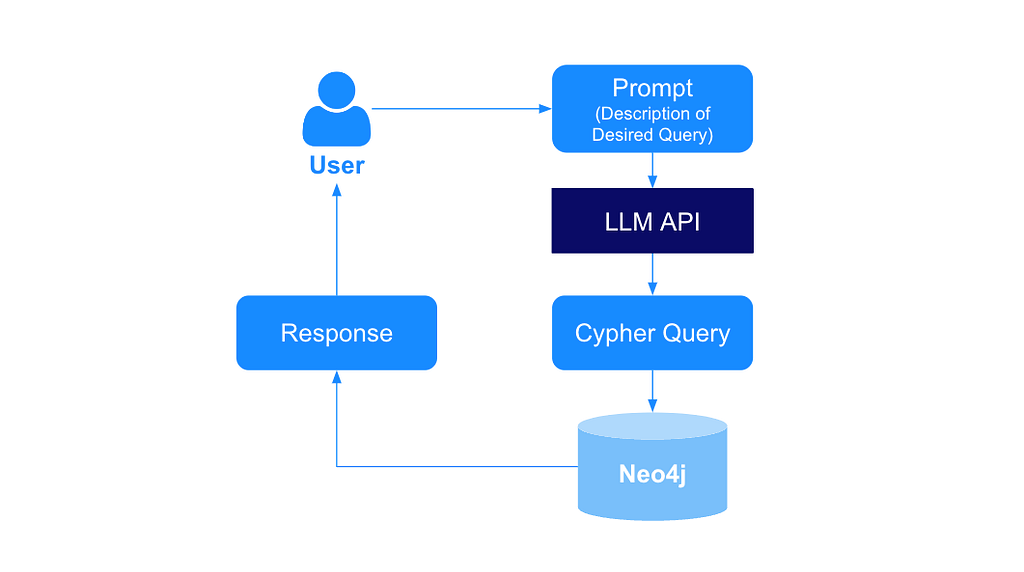

The latest version of NeoDash contains one of the very first end-to-end Natural Language to Cypher interfaces — combining the power of Cypher and OpenAI’s models to improve the speed at which you build dashboards.

Next, we show you how to set up the extension yourself and get started with natural language queries in NeoDash.

Configure the Extension

Before getting started, you will need:

- A Neo4j database. For example, a Neo4j Sandbox.

- An OpenAI account. You will get $5 in free credits after creating a new account, or you can link a credit card.

To get started with natural language queries in NeoDash, follow these steps:

- Create an OpenAI key at https://platform.openai.com/account/api-keys. OpenAI is the only provider integrated with NeoDash at the moment, support for additional model providers (i.e., VertexAI) will be added later.

- Open NeoDash and connect to your database. If you do not have a Neo4j database, you can create one for free here.

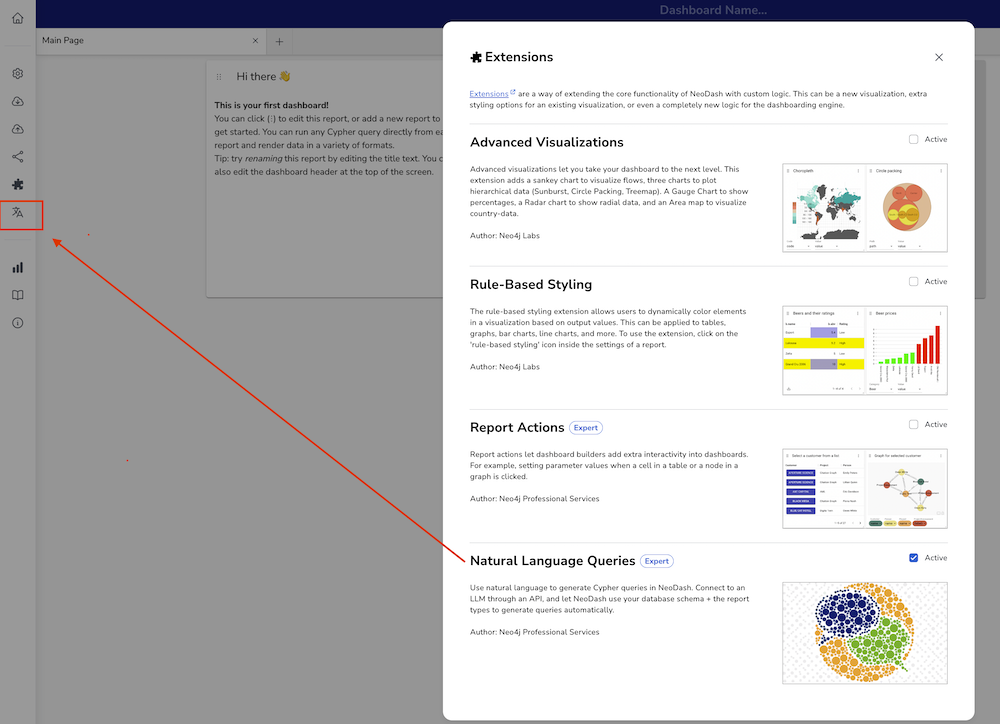

- Activate the extension. First, click the puzzle piece icon in the sidebar to open up the extensions screen. Scroll down and enable the extension called “Natural Language Queries” by ticking the box. After enabling the extension, a new icon will appear in the sidebar (see image below). Close the extension screen, and click the “Translation” icon.

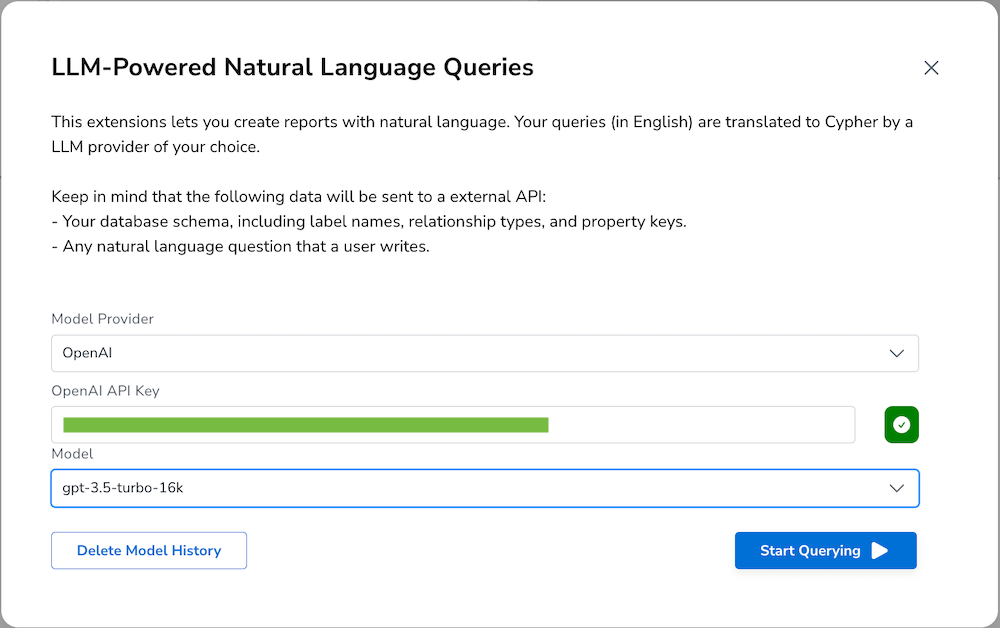

4. Configure the extension to use your key. First, select a provider (OpenAI) and enter your key in the empty textbox. Then click the orange button to test the connection. When the key is valid, the button should turn green, and you can select a model:

Select a model to use. We recommend either gpt-4–0613 or gpt-3.5-turbo-16k. Click the “Start Querying” button to save the configuration.
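If you would like to check your key outside of NeoDash before pasting it in, one option is to call OpenAI’s model-listing endpoint directly. The Python sketch below is only an illustration and is not taken from the NeoDash codebase: the function names `build_models_request` and `list_model_ids` are made up for this example, and it assumes the standard `GET https://api.openai.com/v1/models` endpoint with a bearer token.

```python
import json
import urllib.request

# OpenAI's endpoint for listing the models an API key can access.
OPENAI_MODELS_URL = "https://api.openai.com/v1/models"


def build_models_request(api_key: str) -> urllib.request.Request:
    """Build an authenticated GET request for the model-listing endpoint."""
    return urllib.request.Request(
        OPENAI_MODELS_URL,
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def list_model_ids(api_key: str, timeout: float = 10.0) -> list[str]:
    """Return the ids of the models visible to this key.

    Raises urllib.error.HTTPError (for example, status 401) if the key is rejected.
    """
    request = build_models_request(api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    # The response body has the shape {"object": "list", "data": [{"id": ...}, ...]}.
    return sorted(model["id"] for model in payload["data"])
```

With a valid key, `list_model_ids("sk-...")` should return a list of model ids, which is a quick way to confirm that the model you plan to select is available to your account.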

Querying

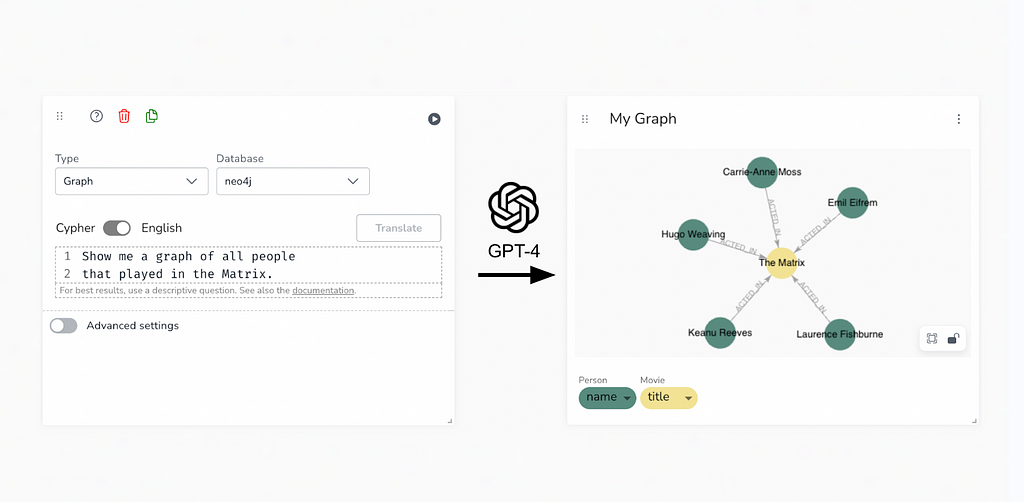

After the extension is set up and configured, we are ready to start writing natural language queries. To do so, create a new report and open its settings. You will see a “Cypher / English” toggle, which specifies the input type. After toggling to English, write your question and hit the translate button. If all goes well, your Cypher query should be ready in a couple of seconds.

Under the hood, NeoDash takes care of schema retrieval, retry logic, prompt engineering, Cypher validation, and more. If you’re interested in the detailed logic, check out the documentation.
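To give a feel for how validation and retry logic can fit together, here is a minimal Python sketch. It is not NeoDash’s implementation: `translate_with_retry`, `make_stub_llm`, and `toy_validate` are hypothetical names, the model is replaced by a stub that returns canned replies, and the validator only checks for balanced parentheses. A real validator could, for example, send `EXPLAIN <query>` to Neo4j, which plans a query without running it.

```python
from typing import Callable, Iterator, Optional


def translate_with_retry(
    prompt: str,
    ask_llm: Callable[[str], str],
    validate: Callable[[str], Optional[str]],
    max_attempts: int = 3,
) -> str:
    """Ask the model for Cypher, validate it, and retry with the error as feedback."""
    feedback = ""
    for _ in range(max_attempts):
        query = ask_llm(prompt + feedback).strip()
        error = validate(query)
        if error is None:
            return query
        # Feed the validation error back so the next attempt can correct it.
        feedback = f"\nThe previous query was invalid: {error}\nPrevious query: {query}"
    raise ValueError(f"No valid Cypher after {max_attempts} attempts")


def make_stub_llm() -> Callable[[str], str]:
    """Stand-in for a model call (assumption): a broken reply, then a valid one."""
    replies: Iterator[str] = iter([
        "MATCH (p:Person RETURN p",
        "MATCH (p:Person) RETURN p.name LIMIT 10",
    ])
    return lambda _prompt: next(replies)


def toy_validate(query: str) -> Optional[str]:
    """Toy check (assumption): return an error message, or None if the query passes."""
    if query.count("(") != query.count(")"):
        return "unbalanced parentheses"
    return None


print(translate_with_retry("Question: list ten people", make_stub_llm(), toy_validate))
```

In this run the first canned reply fails the check, so the loop asks again with the error attached and returns the second reply. Passing the model and the validator in as functions keeps the loop independent of any particular provider or database driver.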

For best results, keep the following in mind:

- Choose a visualization first. NeoDash tries to create a query according to the current type of visualization selected. If you’re writing a question and the current type is “Bar Chart,” it will try to fit the results accordingly.

- Call out node labels and relationship types. NeoDash sends your database schema to OpenAI as part of the prompt, so it knows the entities in the graph. It is better to refer to “People” (Person nodes) rather than actors!

- Be precise about values. If you are filtering by property value, e.g., a movie called “The Matrix,” use the exact spelling of the property’s value. Apart from your graph schema, the only thing submitted to OpenAI is the question you write; no data values are implicitly retrieved.

- Be specific about what you want. Ask what you’d like the LLM to do. “Aggregate by X” or “Find the path between X and Y” are great ways to steer the model into producing the best query for your use case. An example of a more specific instruction on a one-shot query is below:
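To make these tips concrete, the Python sketch below shows one way a prompt could combine the schema, the selected visualization, and the question. This is an assumption for illustration, not the prompt NeoDash actually sends: `build_prompt` and the `schema` dictionary are hypothetical, and in practice the labels and relationship types could come from procedures such as `CALL db.labels()` and `CALL db.relationshipTypes()`.

```python
def build_prompt(schema: dict, chart_type: str, question: str) -> str:
    """Combine graph schema, target visualization, and the question into one prompt."""
    labels = ", ".join(sorted(schema["labels"]))
    rel_types = ", ".join(sorted(schema["relationship_types"]))
    # Only schema names and the question are included; no data values are added.
    return (
        "Translate the question into a single Cypher query.\n"
        f"Node labels: {labels}\n"
        f"Relationship types: {rel_types}\n"
        f"The result will be shown as a: {chart_type}\n"
        f"Question: {question}"
    )


# Hypothetical movie-graph schema used only for this example.
schema = {
    "labels": ["Person", "Movie"],
    "relationship_types": ["ACTED_IN", "DIRECTED"],
}

# A vague question leaves the model guessing which label "actors" maps to.
vague = build_prompt(schema, "Bar Chart", "Which actors are busiest?")

# A specific question names the label, the relationship type, and the aggregation.
specific = build_prompt(
    schema,
    "Bar Chart",
    "Count the movies each Person ACTED_IN, aggregate by Person name, show the top 10",
)
print(specific)
```

The second question follows the tips above: it uses the schema’s own vocabulary (`Person`, `ACTED_IN`) and states the aggregation explicitly, which gives the model far less to guess.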

Unable to translate a query? This could be because:

- Your query is too complex. NeoDash validates that OpenAI has returned valid Cypher before saving it. The larger the query, the higher the probability of mistakes. Try simple queries first.

- Your OpenAI quota is reached (“Error 429”), and you will need to add more credits.

- Your model is hallucinating! Sometimes, you just need to try again. LLMs tend to be non-deterministic and perform differently each time. As models improve and become more specific, this should get better eventually.

Wrapping Up

Through intelligent prompt engineering, reporting on graph data is more accessible now than ever before. As the world of generative AI continues to evolve, the technique of text-to-Cypher translation will continue to get better and eventually become a great companion to dashboard builders. Realistically, these tools should get your query almost there, and you will do the final bit of tweaking on your own.

For more on NeoDash 2.3, check out the labs pages and the official GitHub repository. Happy dashboarding!

NeoDash 2.3: Create Graph Dashboards With LLM-Powered Natural Language Queries was originally published in the Neo4j Developer Blog on Medium.