A knowledge layer for your agentic systems on Google Cloud

Vice President of Product Marketing, Neo4j


If you’re building agentic systems, you already know the pattern. Your agents start strong on simple tasks, then fall apart as complexity scales. They lose context mid-conversation. They repeat work they’ve already done. And they can’t explain why they made a given decision. The missing piece isn’t a better model. It’s a better memory.

Today, we’re announcing a set of capabilities that bring contextual decision-making, accuracy, and full traceability to your agentic AI systems on Google Cloud.

What we’re launching

This release spans three areas that work together to give your agents a persistent knowledge layer.

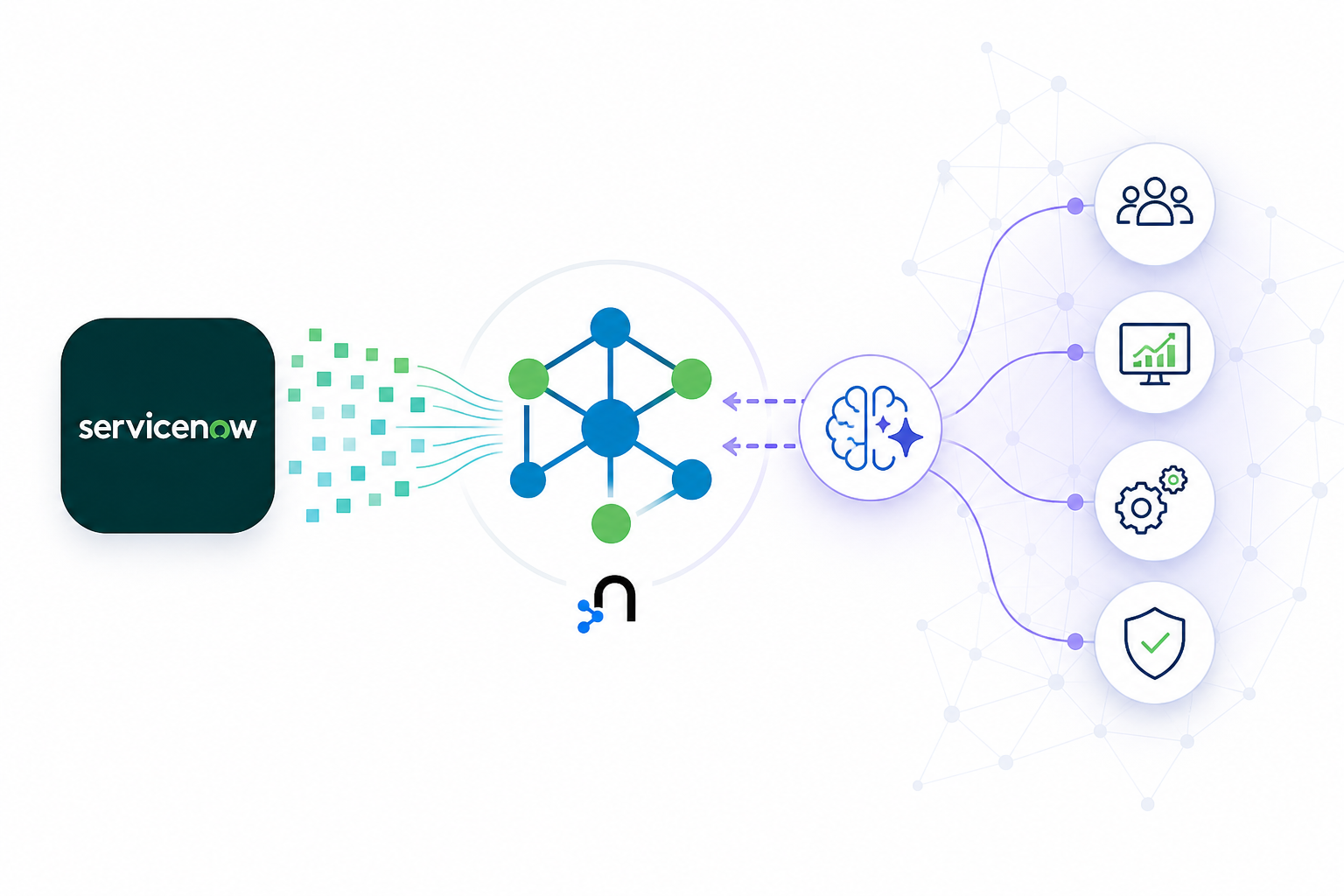

Deep integration with Google Gemini Enterprise and the Agent Development Kit (ADK). Neo4j now plugs directly into your Gemini Enterprise-powered workflows, with reference architectures and guides purpose-built for the Google Cloud agent platform. Whether you're running agents on Vertex AI with Agent Engine or building with the ADK, Neo4j integrates natively through an official Model Context Protocol (MCP) server.

We're also integrating Neo4j Agents, with their GraphRAG capabilities, into Gemini Enterprise via the Agent-to-Agent (A2A) protocol, so you can install them directly from their agent cards.
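If you haven't used A2A before: an agent advertises itself through an agent card. This is a JSON document that describes the agent's name, endpoint, and skills, and a client such as Gemini Enterprise reads it before delegating work. The sketch below builds a minimal card in Python and checks it. The field names follow our reading of the public A2A specification and may differ between protocol versions. The agent name, URL, and skill are hypothetical placeholders, not Neo4j's actual card.

```python
import json

# Minimal agent card sketch. Field names follow the public A2A spec as we
# understand it; every value (name, URL, skill) is a hypothetical placeholder.
agent_card = {
    "name": "graph-retrieval-agent",  # hypothetical agent name
    "description": "Answers questions with GraphRAG over a Neo4j knowledge graph.",
    "url": "https://agents.example.com/a2a",  # hypothetical endpoint
    "version": "0.1.0",
    "capabilities": {"streaming": False},
    "defaultInputModes": ["text/plain"],
    "defaultOutputModes": ["text/plain"],
    "skills": [
        {
            "id": "graph-qa",
            "name": "Graph question answering",
            "description": "Retrieves a contextual subgraph and answers from it.",
            "tags": ["graphrag", "neo4j"],
        }
    ],
}

# A few core fields we check for; not the spec's full list of required fields.
CORE_FIELDS = ("name", "description", "url", "version", "skills")


def missing_fields(card: dict) -> list[str]:
    """Return the core top-level fields that the card lacks."""
    return [name for name in CORE_FIELDS if name not in card]


# Serialize the card in the form it would be served to A2A clients.
card_json = json.dumps(agent_card, indent=2)
```

Because the card is plain JSON, you can check it in CI before publishing, which catches a missing skill or endpoint before a client attempts to install the agent.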

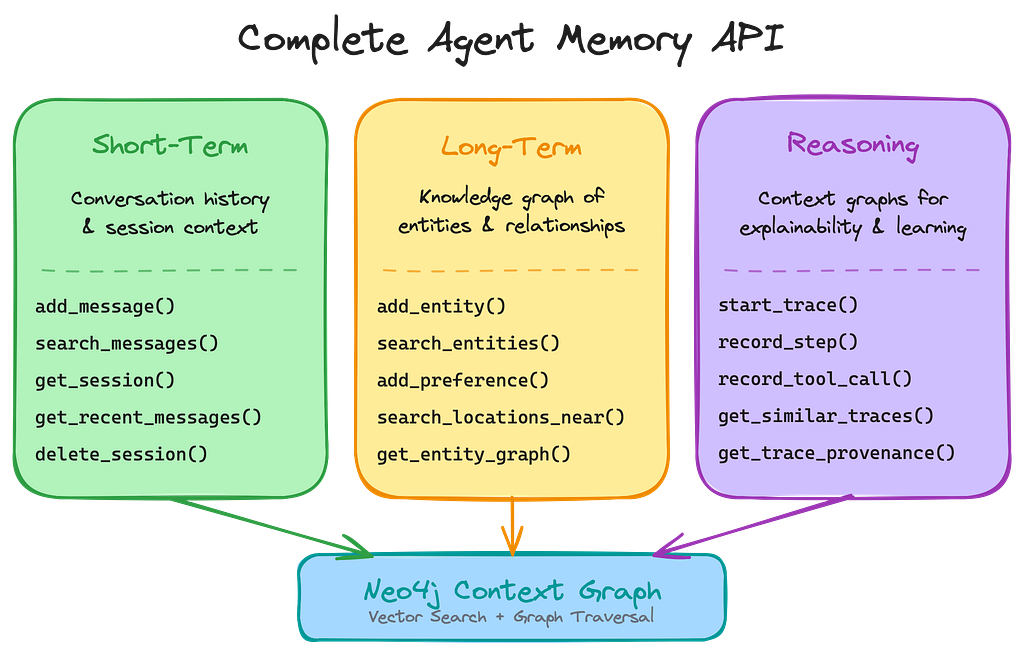

A complete agent memory API. The neo4j-agent-memory package, available in Python, JavaScript, and Go, gives your agents short-term, structured long-term, and reasoning memory (e.g., agent traces and tool calls). It is integrated directly into ADK agents and available to Gemini Enterprise, and it includes decision traces and context graphs. Your agents can now automatically store what they've done, learn from it, and retrieve it when it matters. As part of this process, memory is also consolidated and distilled.
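The toy, in-process model below shows how the three memory types differ. Short-term memory holds the recent conversation. Long-term memory holds structured facts as subject-predicate-object triples. Reasoning memory records each task's tool calls in order. This is not the neo4j-agent-memory API: every class and method name below is hypothetical. The records also live in Python containers, whereas the package described above is built to persist them in a Neo4j graph.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """One reasoning-memory entry: which tool ran, with which arguments, and what it returned."""
    tool: str
    arguments: dict
    result: str


@dataclass
class AgentMemory:
    """Hypothetical illustration of the three memory types, kept in plain Python containers."""
    short_term: list[str] = field(default_factory=list)  # recent conversation turns
    long_term: set[tuple[str, str, str]] = field(default_factory=set)  # (subject, predicate, object)
    reasoning: dict[str, list[ToolCall]] = field(default_factory=dict)  # task id -> ordered tool calls

    def add_message(self, text: str, window: int = 10) -> None:
        # Short-term memory is bounded: drop the oldest turns beyond `window`.
        self.short_term.append(text)
        overflow = len(self.short_term) - window
        if overflow > 0:
            del self.short_term[:overflow]

    def remember_fact(self, subject: str, predicate: str, obj: str) -> None:
        # Long-term facts are triples, which map naturally onto graph relationships.
        self.long_term.add((subject, predicate, obj))

    def record_tool_call(self, task_id: str, call: ToolCall) -> None:
        # Reasoning memory keeps each task's tool calls in the order they happened.
        self.reasoning.setdefault(task_id, []).append(call)

    def facts_about(self, subject: str) -> list[tuple[str, str, str]]:
        # Sorted so the output order is deterministic.
        return sorted(t for t in self.long_term if t[0] == subject)


memory = AgentMemory()
memory.add_message("user: Which suppliers ship to Berlin?")
memory.remember_fact("Acme", "SHIPS_TO", "Berlin")
memory.record_tool_call("task-1", ToolCall("read_cypher", {"city": "Berlin"}, "Acme"))
```

Storing long-term facts as triples is what lets them become graph relationships later, and that graph structure is what makes consolidation and retrieval by traversal possible.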

Agentic GraphRAG through MCP and Aura. Our Aura Agents use Gemini as their reasoning engine. You can now connect your own multi-agent systems to graph-grounded retrieval through the MCP endpoints that Aura Agents expose, with no extra framework required.

Why this matters for Google Cloud teams

If you’re already running on Google Cloud, these integrations were built for your stack. Neo4j’s agentic capabilities connect to ADK through Google’s MCP integration. That means your agents, your Vertex AI workflows, and your ADK projects get a knowledge layer that was developed and tested against the same models and infrastructure you’re already using. No custom integrations necessary. Just tools that work the way your Google Cloud environment expects them to.

Here’s what that means in practice.

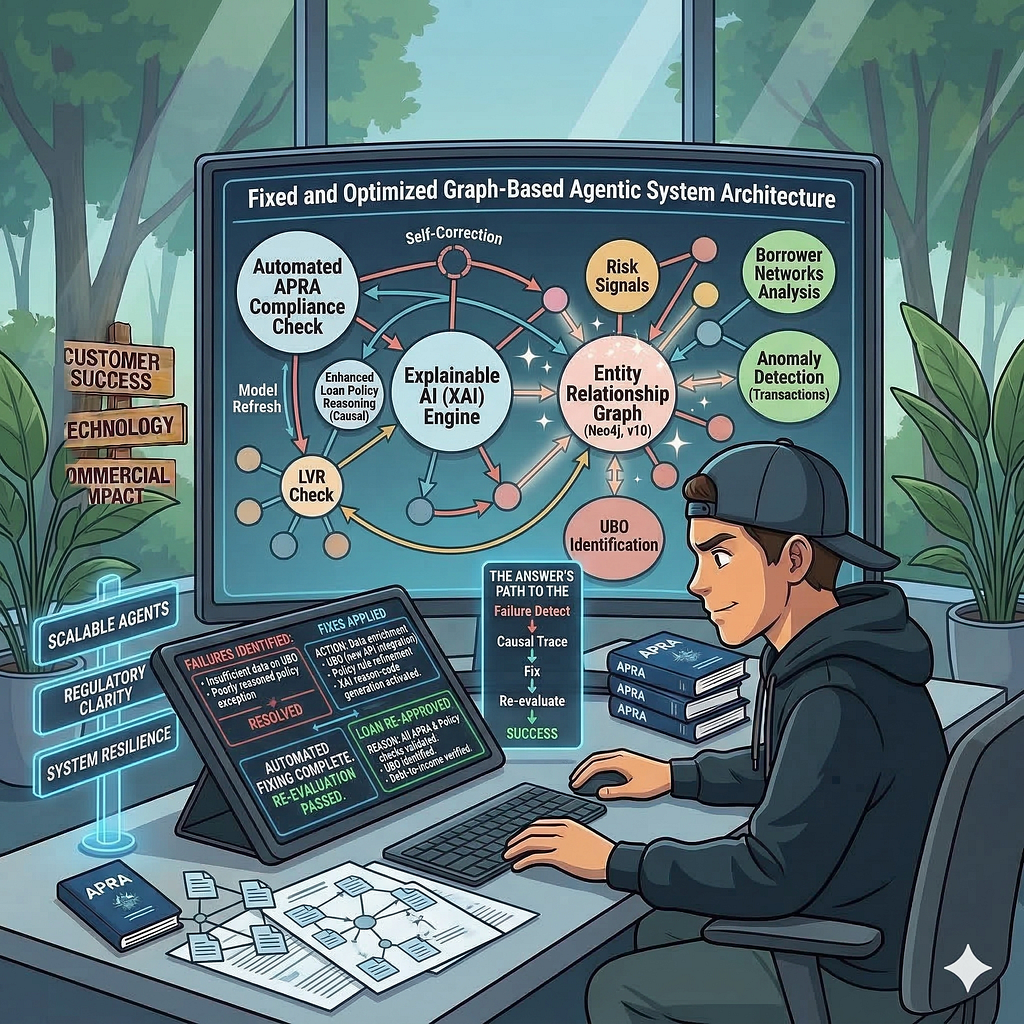

Your agents make better decisions. Ground them in successful decision traces stored in a graph, and they don't start from scratch every time. They find and reuse similar distilled reasoning paths. That cuts redundant reasoning loops, reduces latency, and lowers token usage.
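You can think of the reuse step as a nearest-neighbor lookup. Before planning, the agent compares the new task with tasks it has already completed successfully. If one is similar enough, the agent starts from that stored reasoning path. For illustration, the sketch below uses word-overlap (Jaccard) similarity over an in-memory list. A production setup would more likely use vector embeddings and a graph query. The trace format and the 0.5 threshold are arbitrary assumptions.

```python
from typing import Optional


def tokens(text: str) -> set[str]:
    """Lowercased word set used as a crude similarity signal."""
    return set(text.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Intersection size over union size (0.0 when both sets are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def reuse_trace(task: str, past: list[dict], threshold: float = 0.5) -> Optional[list[str]]:
    """Return the steps of the most similar successful past trace, or None if none is close enough."""
    candidates = [t for t in past if t["succeeded"]]  # only reuse paths that worked
    if not candidates:
        return None
    query = tokens(task)
    best = max(candidates, key=lambda t: jaccard(query, tokens(t["task"])))
    return best["steps"] if jaccard(query, tokens(best["task"])) >= threshold else None


# Hypothetical stored traces.
past_traces = [
    {"task": "find suppliers shipping to Berlin", "succeeded": True, "steps": ["get_schema", "read_cypher"]},
    {"task": "summarize quarterly revenue", "succeeded": True, "steps": ["read_cypher", "summarize"]},
    {"task": "find suppliers shipping to Paris", "succeeded": False, "steps": ["read_cypher"]},
]
```

For example, `reuse_trace("find suppliers shipping to Munich", past_traces)` matches the successful Berlin trace, while an unrelated task returns `None` and the agent plans from scratch.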

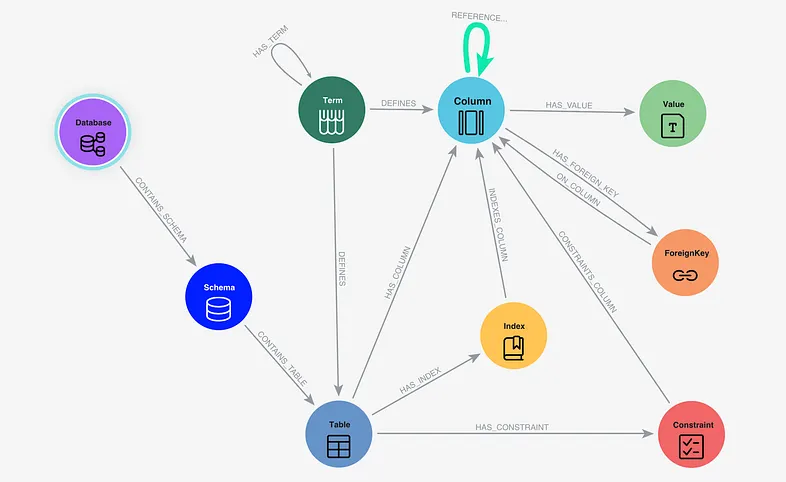

Your retrieval gets more accurate. A knowledge layer maps and resolves entity semantics from mixed sources. Your agents, empowered with GraphRAG retrieval, aren’t just doing vector similarity searches. They’re traversing real relationships in your data. That’s the difference between finding a document fragment and understanding its context. Retrieved contextual subgraphs explain the why and how of completed tasks or generated answers.
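A few lines of code show the difference between plain vector search and graph-grounded retrieval. Vector similarity picks the seed nodes. A bounded traversal then pulls in their connected neighbors to form the contextual subgraph that is handed to the model. The sketch below keeps both the embeddings and the graph in Python dictionaries. The toy two-dimensional vectors, node names, and two-hop limit are assumptions for illustration. In Neo4j, the same pattern would typically be a vector index query followed by a Cypher traversal.

```python
import math
from collections import deque


def cosine(u: list[float], v: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def graph_rag_context(
    query_vec: list[float],
    embeddings: dict[str, list[float]],
    edges: dict[str, list[tuple[str, str]]],
    top_k: int = 1,
    max_hops: int = 2,
) -> set[tuple[str, str, str]]:
    """Pick top_k seeds by vector similarity, then follow outgoing edges up to max_hops."""
    ranked = sorted(embeddings, key=lambda n: cosine(query_vec, embeddings[n]), reverse=True)
    seeds = ranked[:top_k]
    seen = set(seeds)
    frontier = deque((seed, 0) for seed in seeds)
    subgraph: set[tuple[str, str, str]] = set()
    while frontier:  # breadth-first expansion from the seeds
        node, depth = frontier.popleft()
        if depth == max_hops:
            continue
        for relation, neighbor in edges.get(node, []):
            subgraph.add((node, relation, neighbor))
            if neighbor not in seen:
                seen.add(neighbor)
                frontier.append((neighbor, depth + 1))
    return subgraph


# Hypothetical data: only documents are embedded; entities are reached by traversal.
embeddings = {"Contract-42": [0.9, 0.1], "Invoice-7": [0.2, 0.8]}
edges = {
    "Contract-42": [("SIGNED_BY", "Acme")],
    "Acme": [("LOCATED_IN", "Berlin")],
    "Berlin": [("PART_OF", "Germany")],
}
context = graph_rag_context([1.0, 0.0], embeddings, edges)
```

Here the vector step alone would return only `Contract-42`. The traversal adds who signed it and where they are located, which is the relational context a similarity search cannot surface on its own.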

You can trace every decision. Reasoning, tool invocations, parameters, results, causal chains. It’s all persisted in the graph and can be analyzed, smartly compressed, and consolidated with graph algorithms. When something goes wrong (or right), you can trace exactly what happened and why. For teams in finance, healthcare, or legal, that’s not optional. It’s a requirement.
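When each step is stored and linked to the step that caused it, answering "why did this happen?" becomes a walk back along those links. The sketch below models a persisted trace as a dictionary that maps step ids to step records. The `caused_by` key is a hypothetical field name, not Neo4j's schema, and points at the parent step. In the graph, this link would be a relationship that you traverse with Cypher.

```python
from typing import Optional

# Hypothetical persisted trace: step id -> step record.
trace = {
    "s1": {"kind": "reasoning", "detail": "User asked for Berlin suppliers", "caused_by": None},
    "s2": {"kind": "tool_call", "detail": "read_cypher(city='Berlin')", "caused_by": "s1"},
    "s3": {"kind": "result", "detail": "Acme", "caused_by": "s2"},
    "s4": {"kind": "answer", "detail": "Acme ships to Berlin", "caused_by": "s3"},
}


def causal_chain(trace: dict, step_id: str) -> list[str]:
    """Follow caused_by links from a step back to its root; return ids root-first."""
    chain: list[str] = []
    visited: set[str] = set()
    current: Optional[str] = step_id
    while current is not None:
        if current in visited:  # guard against cycles in malformed data
            raise ValueError(f"cycle detected at step {current}")
        visited.add(current)
        chain.append(current)
        current = trace[current]["caused_by"]
    return list(reversed(chain))
```

`causal_chain(trace, "s4")` reconstructs the full path from the user's request through the tool call to the final answer. This is the kind of audit trail that regulated teams need to be able to produce.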

How it works with your Google Cloud stack

Neo4j can be surfaced in Gemini Enterprise workflows through Neo4j Agents that speak the A2A protocol. This gives your Gemini Enterprise end-user workflows and interactions graph-grounded retrieval at scale. You get GraphRAG (vector or hybrid search combined with graph traversal), and natural-language questions are translated directly into Cypher graph queries.
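Hybrid search merges two ranked lists: one from vector similarity and one from keyword (full-text) matching. One widely used way to combine them is reciprocal rank fusion (RRF), sketched below. This post does not say which fusion method Neo4j Agents use, so treat RRF and its conventional constant k = 60 as an illustrative assumption. The document ids are placeholders.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked id lists: each id scores the sum of 1 / (k + rank), with rank starting at 1."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by id for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))


vector_hits = ["doc-a", "doc-b", "doc-c"]   # hypothetical vector-search ranking
keyword_hits = ["doc-b", "doc-d", "doc-a"]  # hypothetical full-text ranking
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

RRF uses only ranks, not raw scores, so it does not need the vector and keyword scores to be on comparable scales. The top fused ids can then serve as the seed nodes for graph traversal.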

If you’re using the ADK, the Neo4j MCP server registers as a set of MCP tools for retrieval within your existing Vertex AI-hosted A2A agents and services. Same capabilities, higher-level protocol.
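Under the hood, an MCP client exchanges JSON-RPC 2.0 messages with the server. It sends `tools/list` to discover the available tools and `tools/call` to invoke one by name with JSON arguments. ADK's MCP integration handles this messaging for you. The sketch below only builds and checks a `tools/call` payload, with no network transport. The tool name `read_neo4j_cypher`, its `query` and `params` arguments, and the graph labels in the Cypher string are assumptions modeled on Neo4j's community MCP server. They may not match the tools exposed by the server you deploy, so check its `tools/list` response.

```python
import itertools
import json

_request_ids = itertools.count(1)  # JSON-RPC ids let a client match responses to requests


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name, arguments, and graph schema; confirm them via the server's tools/list.
payload = mcp_tool_call(
    "read_neo4j_cypher",
    {
        "query": "MATCH (s:Supplier)-[:SHIPS_TO]->(:City {name: $city}) RETURN s.name",
        "params": {"city": "Berlin"},
    },
)
```

Passing the city as a Cypher parameter (`$city`) rather than splicing it into the query string avoids injection and lets the database reuse the query plan.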

And with AuraDB on Google Cloud, you get a fully managed graph database that powers your context graphs, agent memory, and graph-grounded retrieval. No infrastructure to manage.

Get started

If you’re building on Google Cloud, you can start adding a knowledge layer to your agents right now.

- Already running Vertex AI workflows? Use Neo4j in ADK agents through MCP and give your agents graph-grounded retrieval in minutes.

- Want to add memory to your Gemini-powered agents? Install the neo4j-agent-memory package and start persisting the three types of agent memory across sessions.

- Exploring the ADK or building something new? Check out the Google Cloud integration guides at neo4j-labs/neo4j-agent-integrations for reference architectures and working examples.

- Read the docs to learn more about ADK and the Google Gemini Enterprise integration.

If you’re looking to add a knowledge layer to your Google Cloud stack without rearchitecting everything, try these out and let us know what you build.