System Requirements

Main Memory

The GDS library runs within a Neo4j instance and is therefore subject to the general Neo4j memory configuration.

Heap size

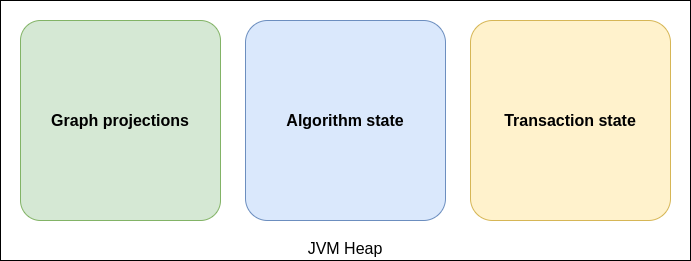

The heap space is used for storing graph projections in the graph catalog, and algorithm state.

When writing algorithm results back to Neo4j, heap space is also used for handling transaction state (see dbms.tx_state.memory_allocation). For purely analytical workloads, a general recommendation is to set the heap space to about 90% of the available main memory. In Neo4j 5, this can be done via server.memory.heap.initial_size and server.memory.heap.max_size; in Neo4j 4, the corresponding settings are dbms.memory.heap.initial_size and dbms.memory.heap.max_size.
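As a rough illustration of the 90% guideline, the following sketch derives heap settings from the total main memory of a machine. The function names, the integer-percent rounding, and the choice to set the initial and maximum heap size to the same value are assumptions made for this example, not part of the GDS library or of Neo4j.

```python
def recommended_heap_mib(total_memory_mib: int, heap_percent: int = 90) -> int:
    """Return a heap size in MiB as a percentage of total main memory.

    The 90% default follows the guideline for purely analytical workloads;
    mixed workloads would need a smaller share.
    """
    if total_memory_mib <= 0:
        raise ValueError("total_memory_mib must be positive")
    if not 0 < heap_percent < 100:
        raise ValueError("heap_percent must be between 1 and 99")
    # Integer arithmetic avoids floating-point rounding surprises.
    return total_memory_mib * heap_percent // 100


def heap_settings(total_memory_mib: int, prefix: str = "server") -> dict:
    """Build the two heap settings as a dict of setting name to value.

    Use prefix="server" for the server.memory.heap.* names and
    prefix="dbms" for the dbms.memory.heap.* names.
    Assumption: initial and max size are set to the same value.
    """
    heap = recommended_heap_mib(total_memory_mib)
    return {
        f"{prefix}.memory.heap.initial_size": f"{heap}m",
        f"{prefix}.memory.heap.max_size": f"{heap}m",
    }


if __name__ == "__main__":
    # Hypothetical machine with 64 GiB (65536 MiB) of main memory.
    for name, value in heap_settings(65536).items():
        print(f"{name}={value}")
```

The printed lines have the form of configuration entries; treat the result as a starting point and refine it with the memory estimation feature.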

To better estimate the heap space required to project graphs and run algorithms, consider the Memory Estimation feature. The feature estimates the memory consumption of all involved data structures using information about the number of nodes and relationships from the Neo4j count store.

Page cache

The page cache is used to cache the Neo4j data and will help to avoid costly disk access.

For purely analytical workloads including native projections, it is recommended to decrease the configured page cache in favor of an increased heap size.

To configure the page cache size, use the Neo4j configuration property server.memory.pagecache.size (Neo4j 5) or dbms.memory.pagecache.size (Neo4j 4).

However, setting a minimum page cache size is still important when projecting graphs:

- For native projections, the minimum page cache size for projecting a graph can be roughly estimated by 8KB * 100 * readConcurrency.
- For Cypher projections, a higher page cache is required depending on the query complexity.
- For projections through Apache Arrow, the page cache is irrelevant.
- For Legacy Cypher projections, a higher page cache is required depending on the query complexity.
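The rough estimate for native projections, 8KB * 100 * readConcurrency, can be worked through with a small sketch. The function and constant names below are hypothetical and exist only for this example; the formula itself is the one given in the list above.

```python
BASE_KB = 8    # the 8KB term of the estimate
FACTOR = 100   # the constant multiplier of the estimate


def min_native_projection_page_cache_kb(read_concurrency: int) -> int:
    """Rough minimum page cache (in KB) for a native projection."""
    if read_concurrency < 1:
        raise ValueError("read_concurrency must be at least 1")
    return BASE_KB * FACTOR * read_concurrency


if __name__ == "__main__":
    for concurrency in (1, 4, 16):
        kb = min_native_projection_page_cache_kb(concurrency)
        print(f"readConcurrency={concurrency}: about {kb} KB")
```

With a readConcurrency of 4, the estimate comes to 3200 KB, which shows how small this lower bound is compared to typical heap sizes.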

However, if algorithm results must be written back to Neo4j, write performance is highly dependent on store fragmentation as well as the number of properties and relationships to write.

We recommend starting with a page cache size of roughly 250MB * writeConcurrency, then evaluating write performance and adapting accordingly.

Ideally, if the memory estimation feature has been used to find a good heap size, the remaining memory can be used for page cache and OS.

Decreasing the page cache size in favor of heap size is not recommended if the Neo4j instance runs both operational and analytical workloads at the same time. See Neo4j memory configuration for general information about page cache sizing.
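To make the write-back guidance concrete, the sketch below computes the suggested starting page cache of 250MB * writeConcurrency and checks whether it fits into the memory left over once a heap size has been chosen. All names are hypothetical, and the heap figure in the example is an invented input standing in for a value obtained from memory estimation.

```python
def write_page_cache_start_mb(write_concurrency: int) -> int:
    """Suggested starting page cache (in MB) when writing results back."""
    if write_concurrency < 1:
        raise ValueError("write_concurrency must be at least 1")
    return 250 * write_concurrency


def memory_budget(total_mb: int, heap_mb: int, write_concurrency: int) -> dict:
    """Split total memory into heap and the remainder for page cache and OS.

    Assumption: heap_mb comes from an earlier estimate and everything
    that is not heap is available to the page cache and the OS.
    """
    if heap_mb >= total_mb:
        raise ValueError("heap_mb must be smaller than total_mb")
    remaining = total_mb - heap_mb
    start = write_page_cache_start_mb(write_concurrency)
    return {
        "heap_mb": heap_mb,
        "remaining_for_page_cache_and_os_mb": remaining,
        "write_page_cache_start_mb": start,
        "start_value_fits": start <= remaining,
    }


if __name__ == "__main__":
    # Hypothetical numbers: 65536 MB total, 48000 MB heap, writeConcurrency 4.
    for key, value in memory_budget(65536, 48000, 4).items():
        print(f"{key}: {value}")
```

If the starting value does not fit into the remainder, either the heap size or the write concurrency would need to be reduced; the sketch only reports the mismatch and does not resolve it.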

Native memory

Native memory is used by the Apache Arrow server to store received data.

If you have the Apache Arrow server enabled, we also recommend reserving some native memory. The amount of memory required depends on the batch size used by the client. Data received through Arrow is temporarily stored in direct memory before being converted and loaded into an on-heap graph.

CPU

The library uses multiple CPU cores for graph projections, algorithm computation, and writing results. Configuring workloads to make the best use of the available CPU cores in your system is important for achieving maximum performance. The concurrency used for the stages of projection, computation, and writing is configured per algorithm execution; see Common Configuration parameters.

The default concurrency used for most operations in the Graph Data Science library is 4.

The maximum concurrency that can be used is limited depending on the license under which the library is being used:

- Neo4j Graph Data Science Library - Community Edition (GDS CE)
  - The maximum concurrency in the library is limited to 4.
- Neo4j Graph Data Science Library - Enterprise Edition (GDS EE)
  - The maximum concurrency in the library is unlimited. To register for a license, please visit neo4j.com.

Concurrency limits are determined based on whether you have a GDS EE license, or if you are using GDS CE. The maximum concurrency limit in the Graph Data Science library is not set based on your edition of the Neo4j database.